Data-Driven Crowd Motion Control with Multi-Touch Gestures

Yijun Shen, Joseph Henry, He Wang, Edmond S. L. Ho, Taku Komura and Hubert P. H. Shum

Computer Graphics Forum (CGF), 2018

Invited presentation at Eurographics 2019

Impact Factor: 2.9, Citations: 15

Abstract

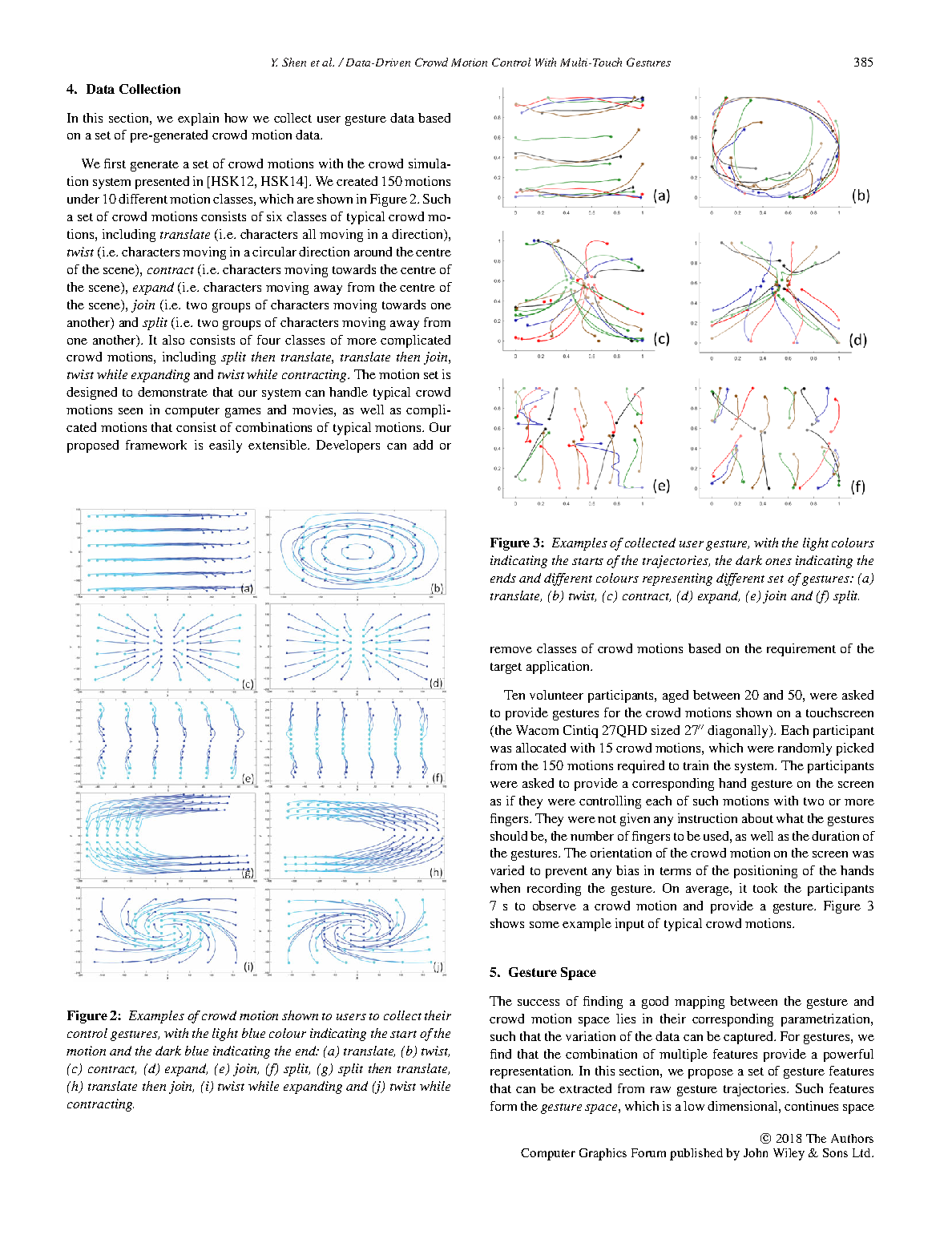

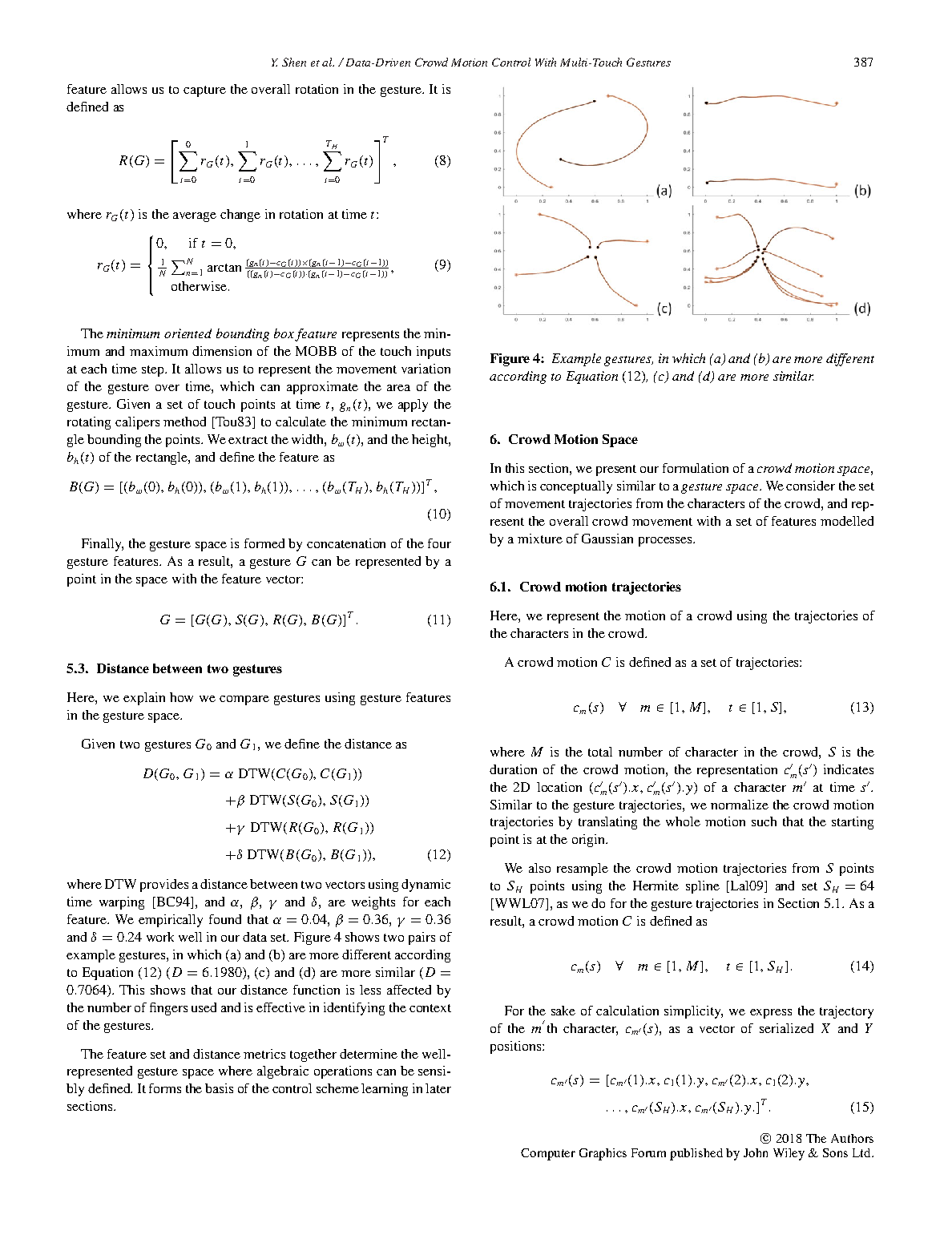

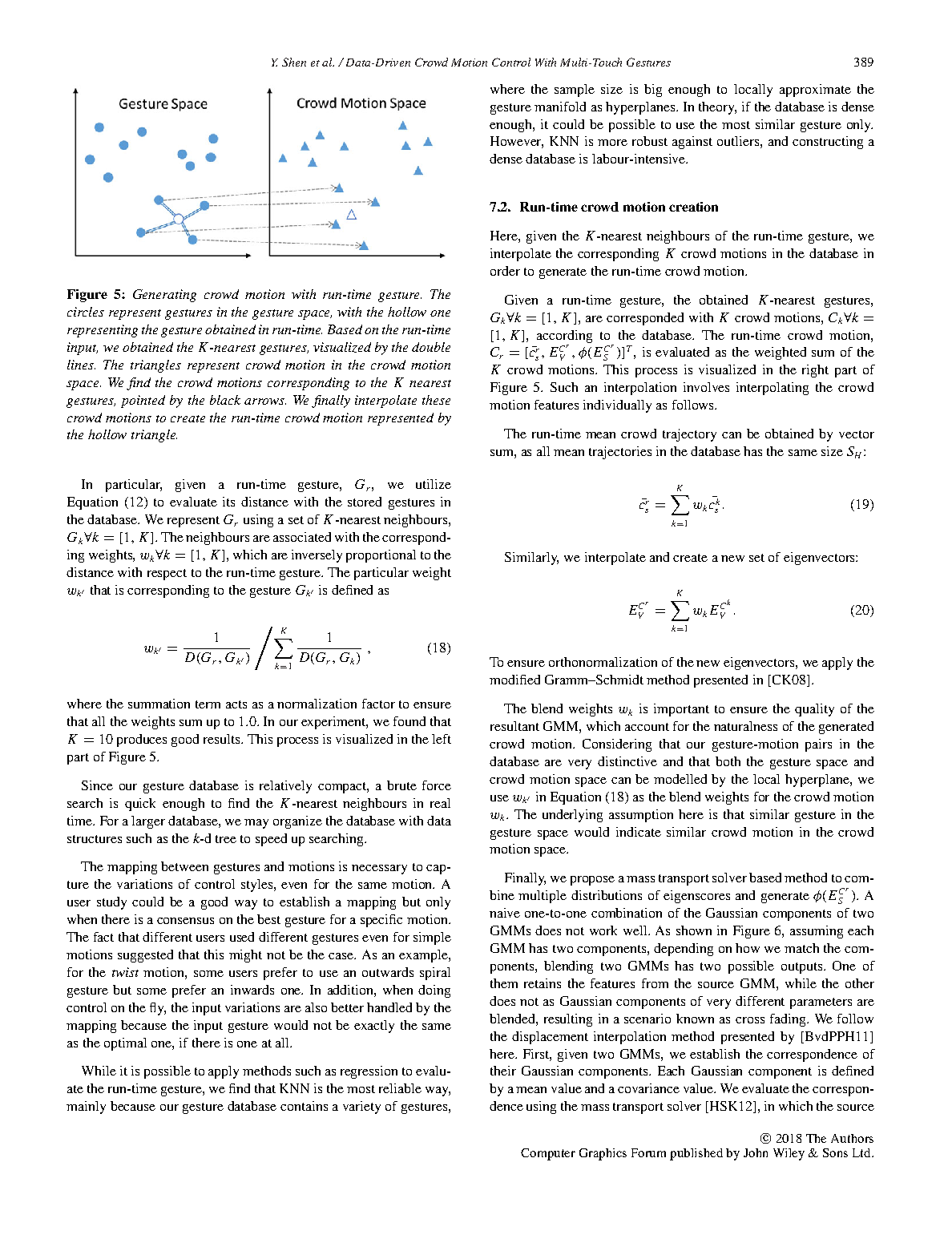

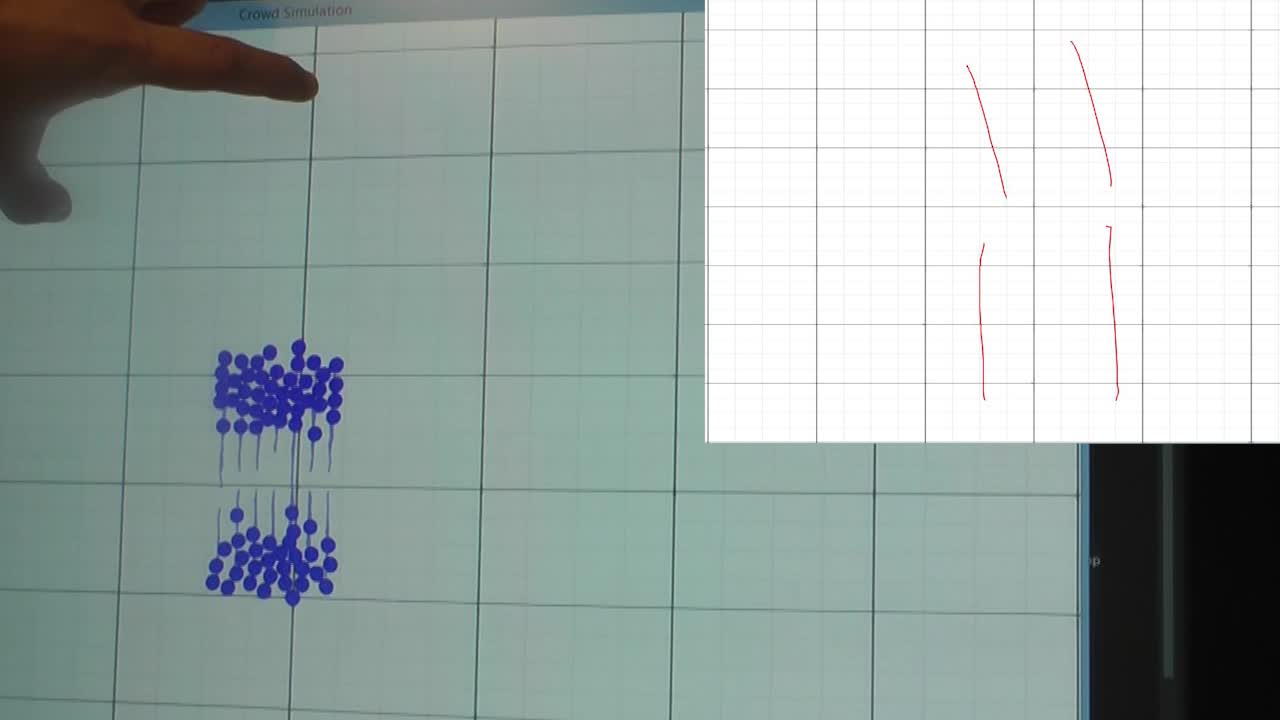

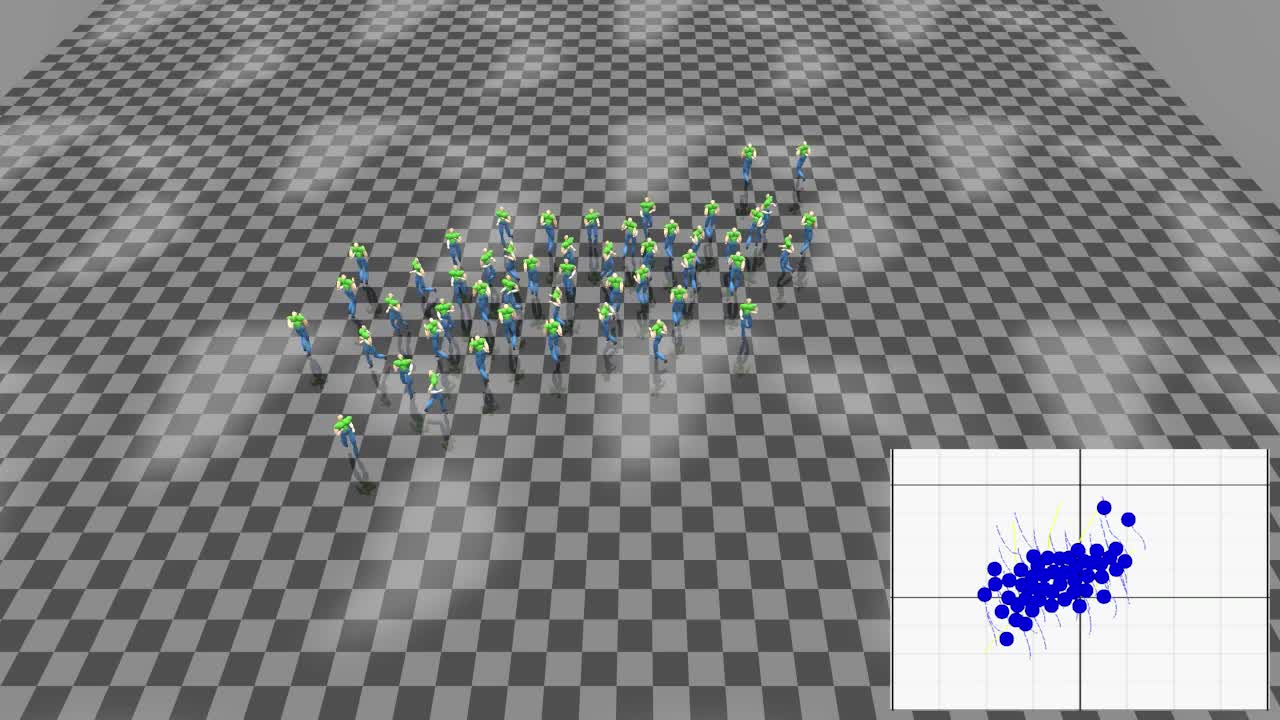

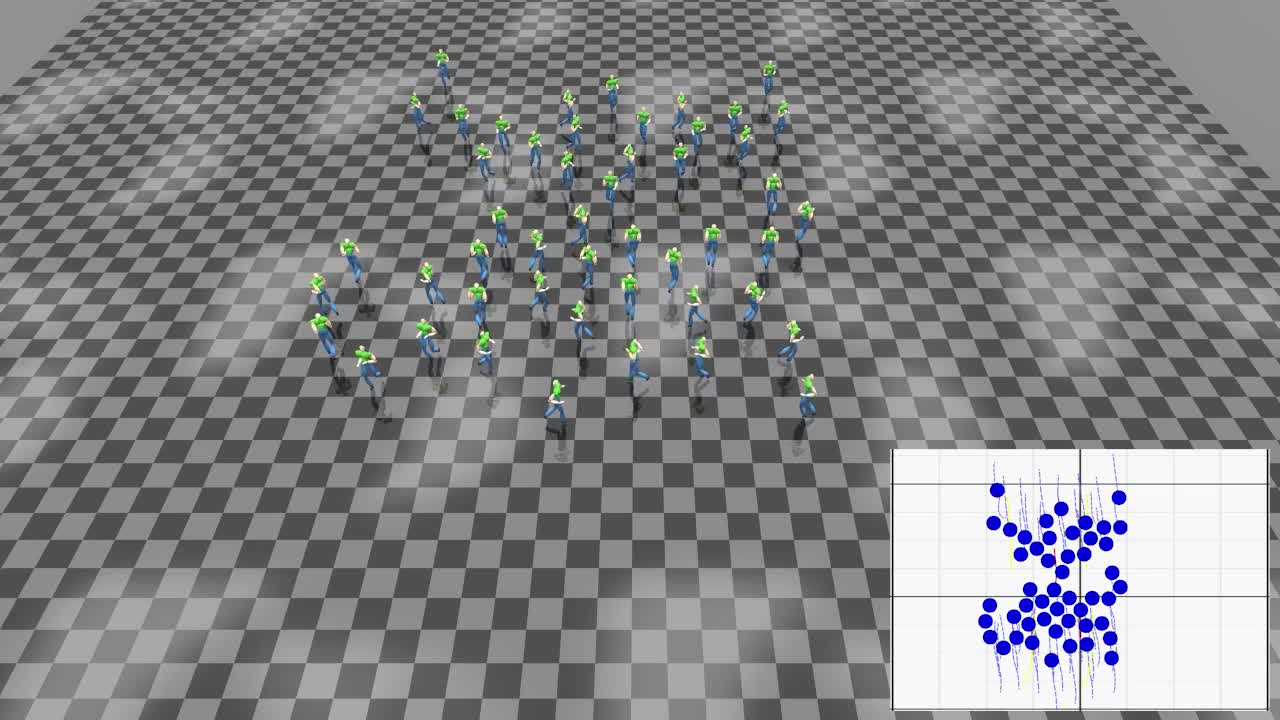

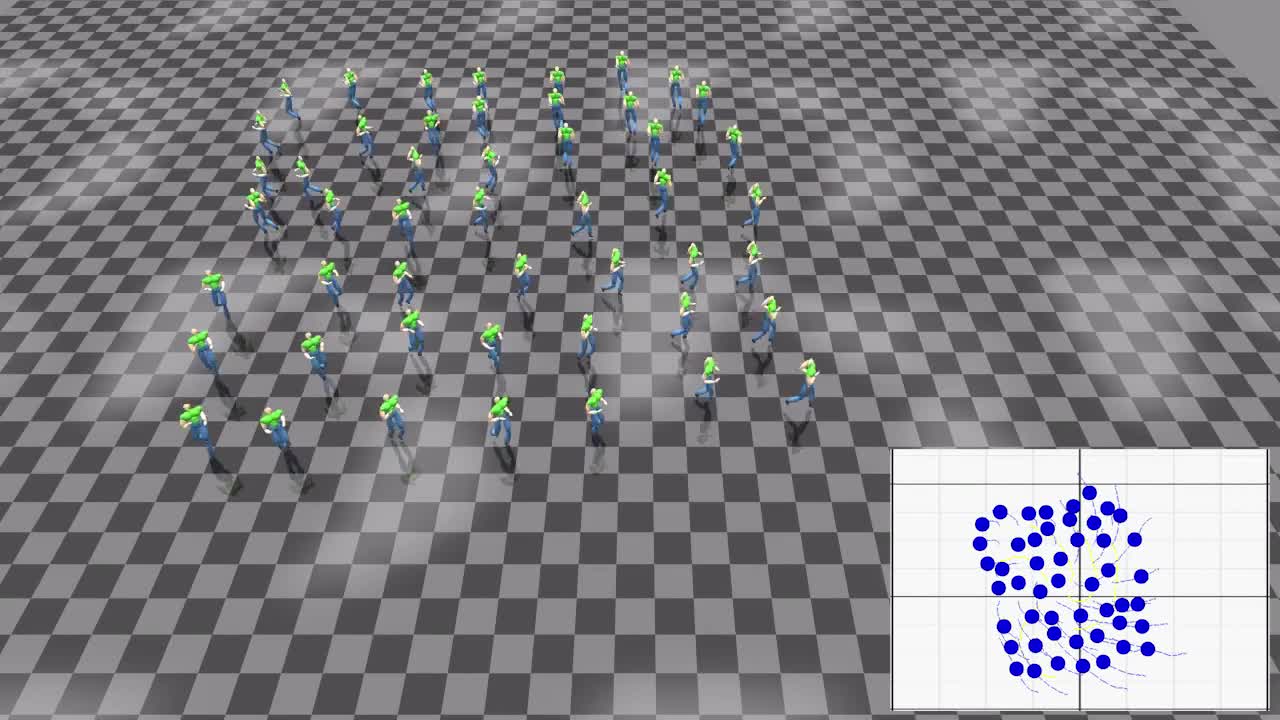

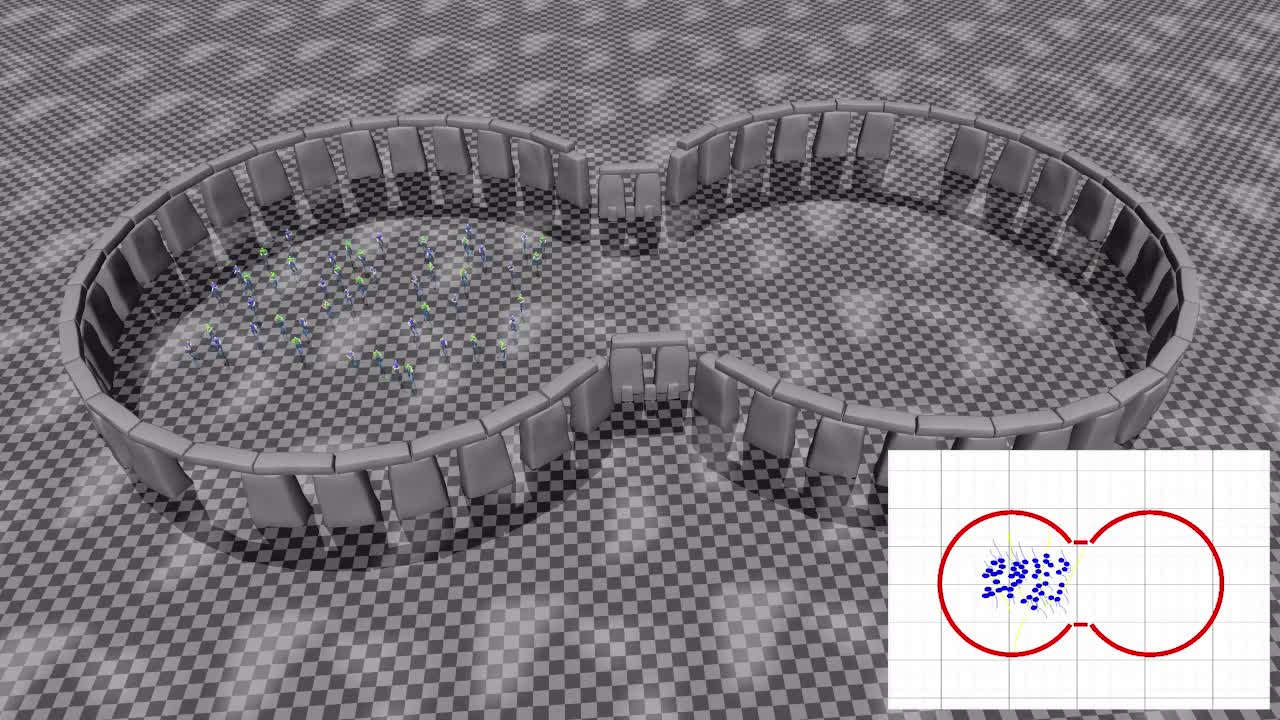

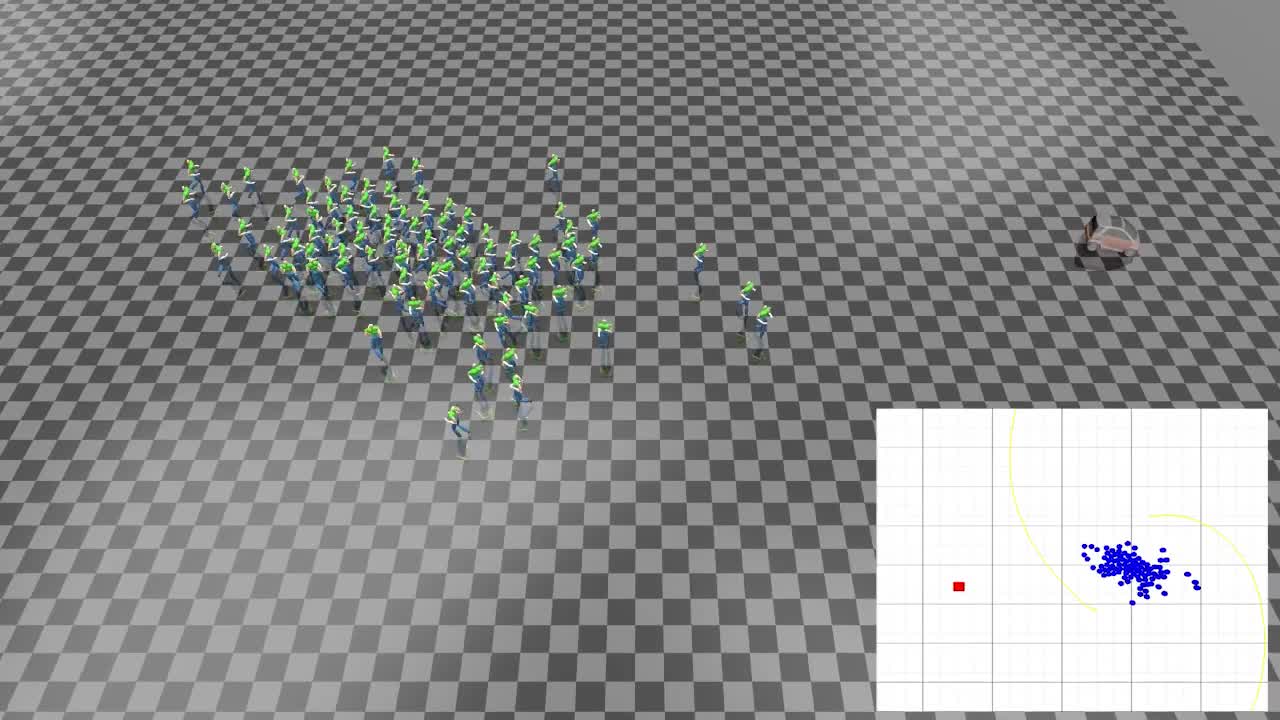

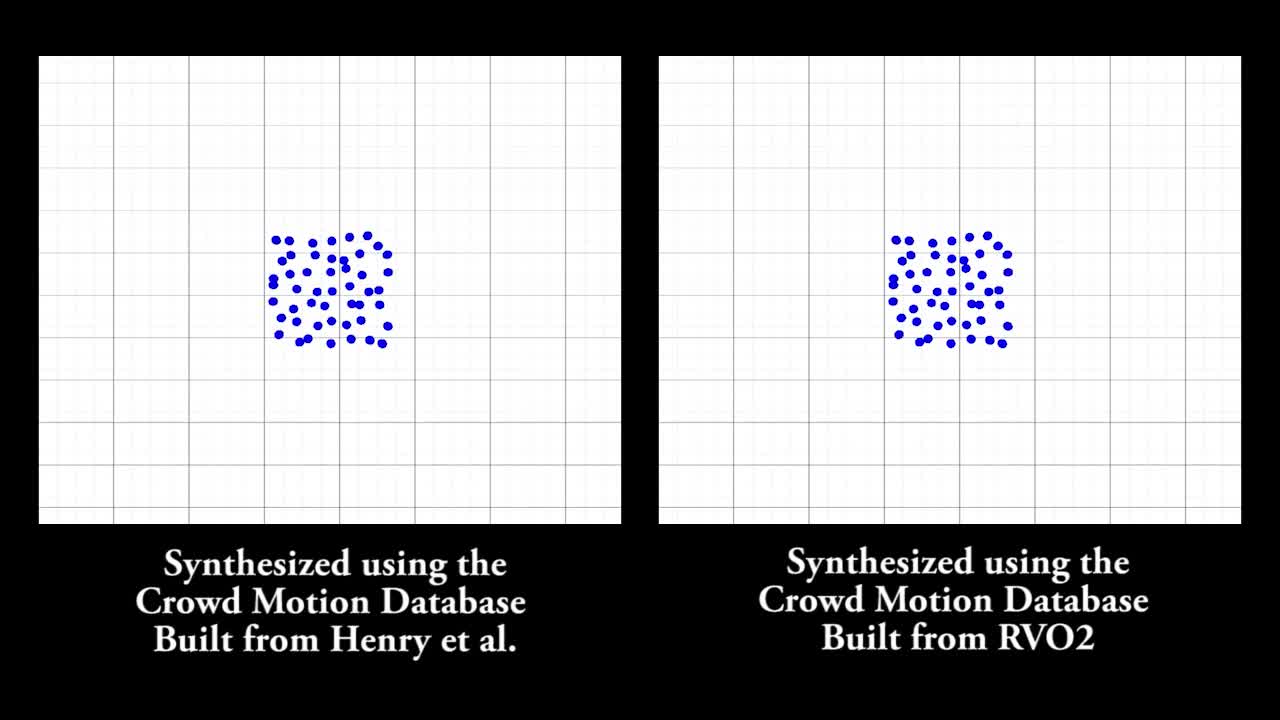

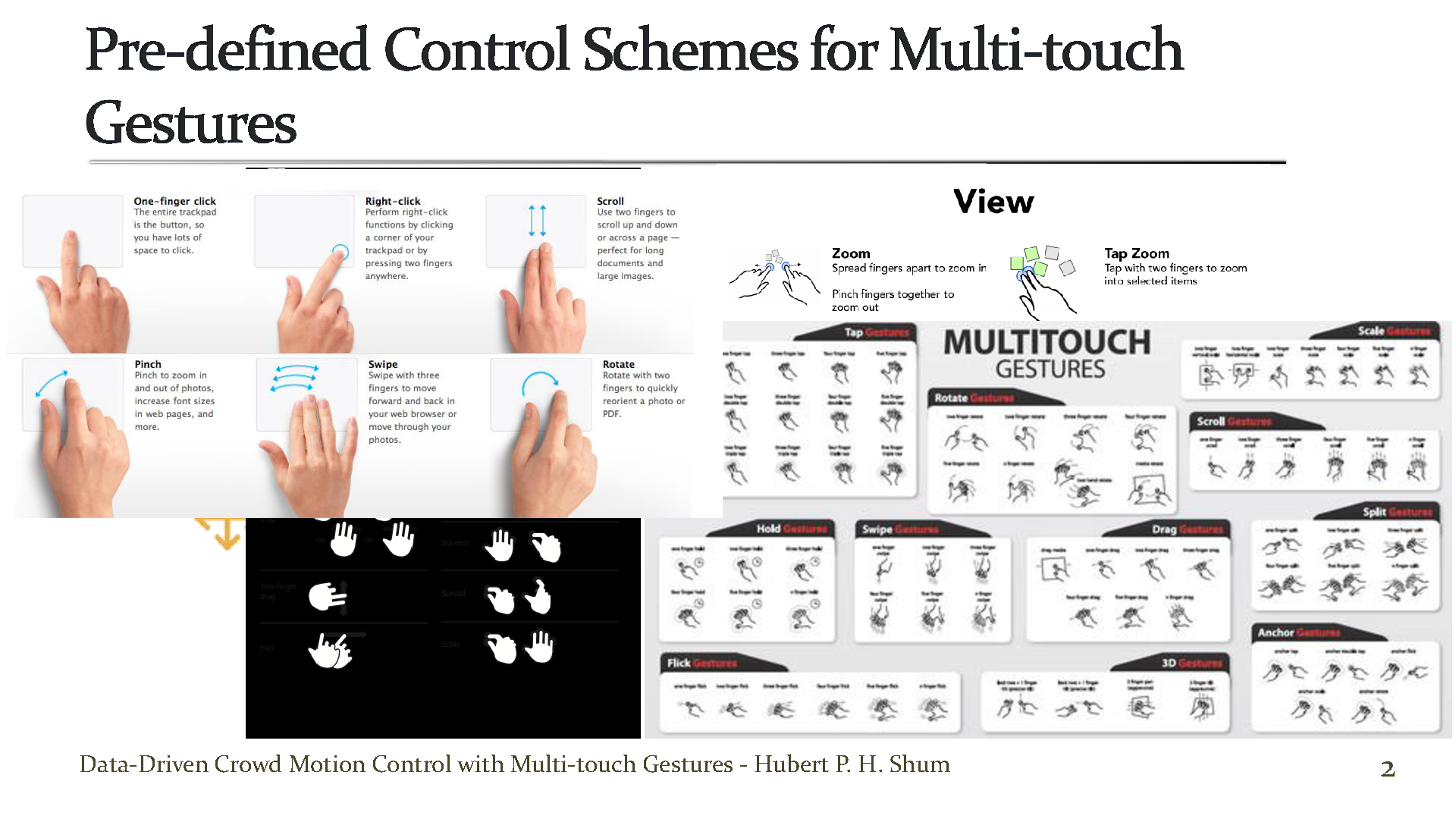

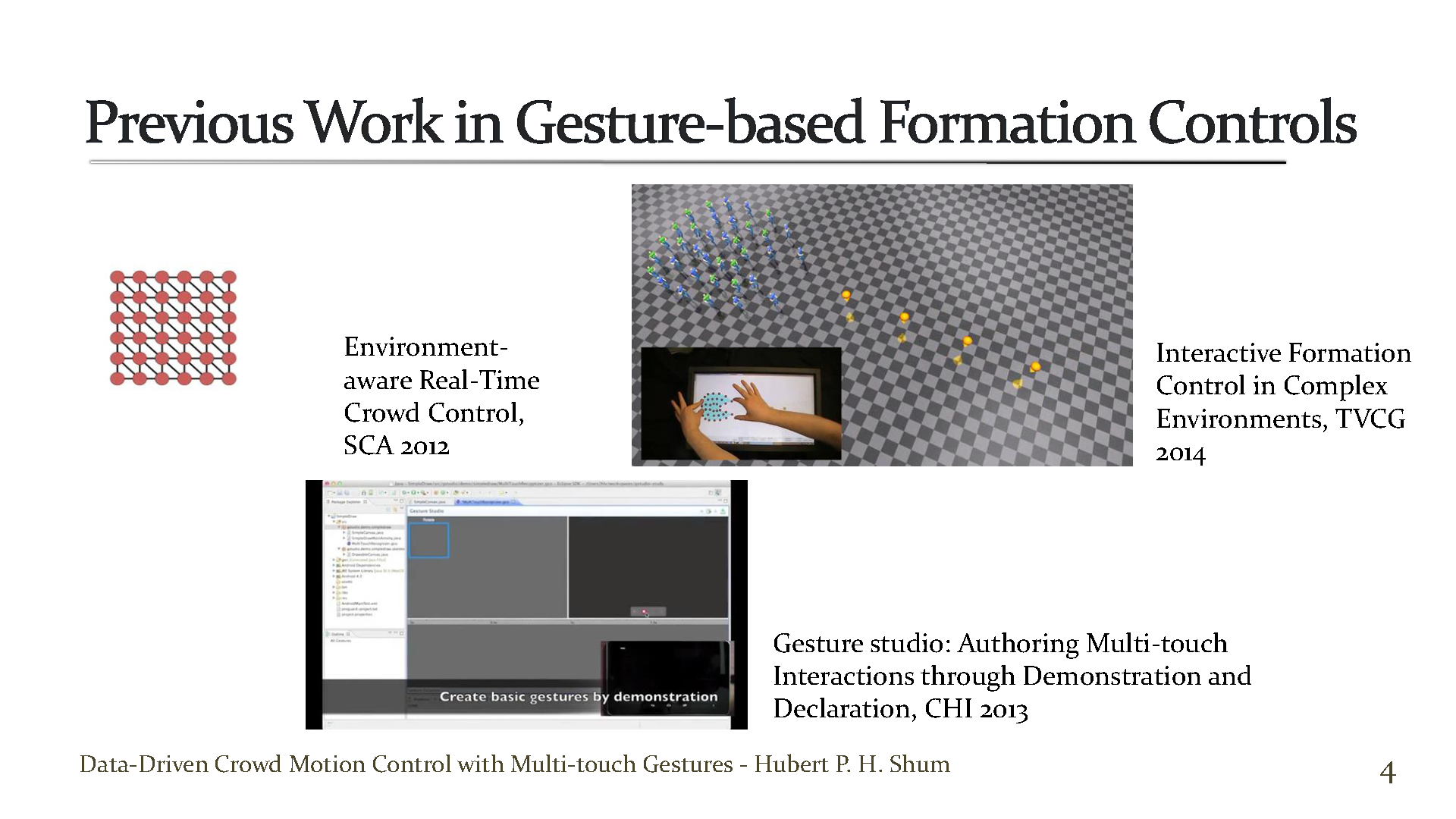

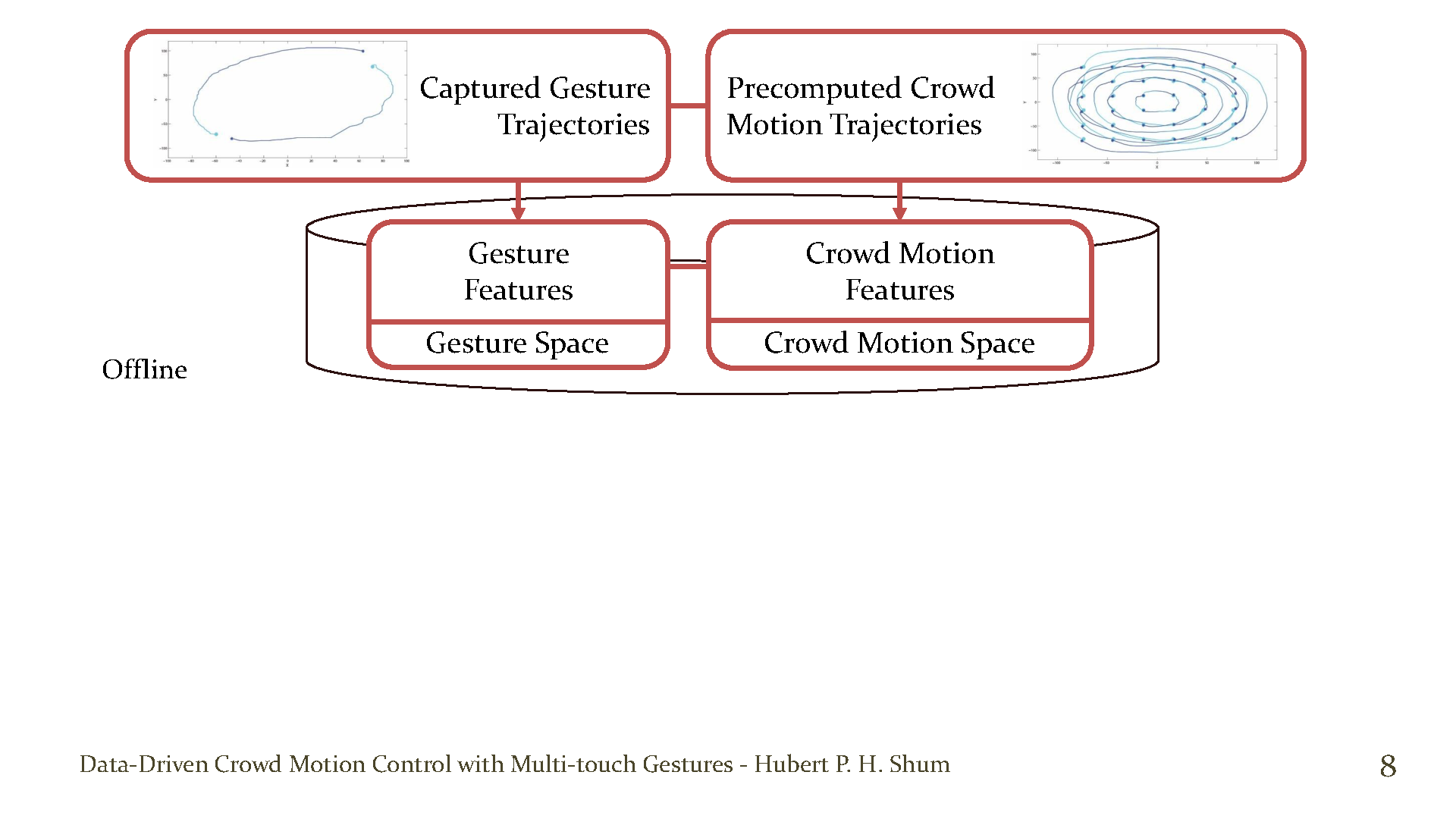

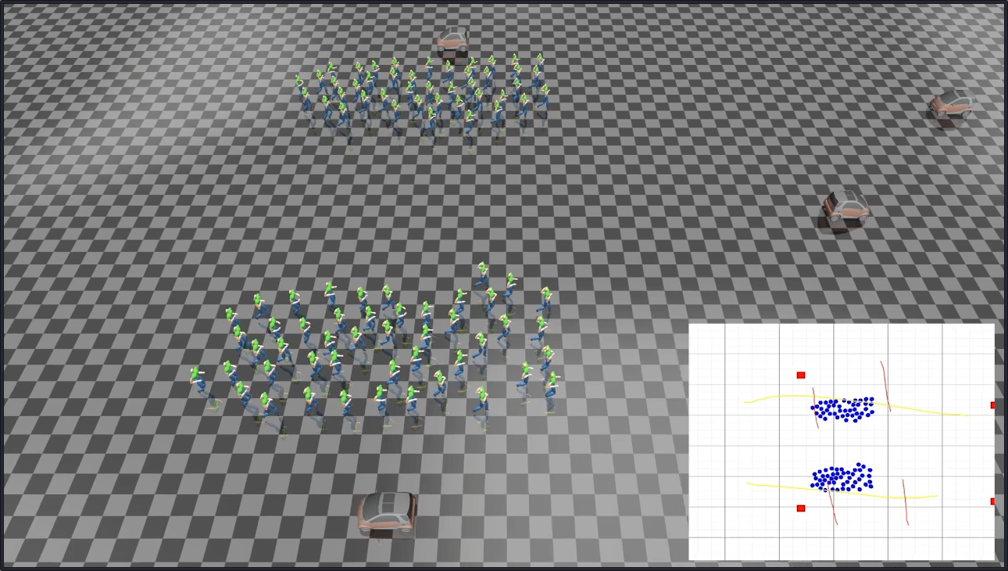

Controlling a crowd using multi-touch devices appeals to the computer games and animation industries, as such devices provide a high-dimensional control signal that can effectively define the crowd formation and movement. However, existing work relies on predefined control schemes that users must learn, and these schemes may not be intuitive. We propose a data-driven, gesture-based crowd control system in which the control scheme is learned from example gestures provided by different users. In particular, we build a database of paired gesture and crowd motion samples. To generalize across the gesture styles of different users, such as the use of different numbers of fingers, we propose a set of gesture features that represent a set of hand gesture trajectories. Similarly, to represent the trajectories of crowds with varying numbers of characters over time, we propose a set of crowd motion features extracted from a Gaussian mixture model. Given a run-time gesture, our system retrieves the K nearest gestures from the database and interpolates the corresponding crowd motions to generate the run-time control. Our system is accurate and efficient, making it suitable for real-time applications such as real-time strategy games and interactive animation control.
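The run-time step described above (retrieve the K nearest gestures, then interpolate their crowd motions) can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration and is not the paper's method: the three-number gesture feature (centroid translation plus the ratio of final to initial finger spread), the hypothetical function names `gesture_feature` and `knn_interpolate`, the use of inverse-distance weighting, and the toy crowd vectors that merely stand in for the GMM-derived crowd motion features.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]
Trajectory = List[Point]   # one finger's touch points over time
Gesture = List[Trajectory]  # any number of fingers


def gesture_feature(gesture: Gesture) -> List[float]:
    """Hypothetical fixed-length gesture feature: centroid translation (dx, dy)
    and the ratio of final to initial finger spread. Its length does not
    depend on how many fingers were used."""
    starts = [traj[0] for traj in gesture]
    ends = [traj[-1] for traj in gesture]

    def centroid(pts):
        n = len(pts)
        return (sum(p[0] for p in pts) / n, sum(p[1] for p in pts) / n)

    def spread(pts, c):
        # Mean distance of the touch points from their centroid.
        return sum(math.dist(p, c) for p in pts) / len(pts)

    c0, c1 = centroid(starts), centroid(ends)
    s0, s1 = spread(starts, c0), spread(ends, c1)
    # A single finger has zero spread, so treat it as "no scaling".
    scale = s1 / s0 if s0 > 1e-9 else 1.0
    return [c1[0] - c0[0], c1[1] - c0[1], scale]


def knn_interpolate(query, database, k=3, eps=1e-9):
    """Retrieve the k database entries whose gesture features are nearest to
    the query and blend their crowd features with inverse-distance weights."""
    ranked = sorted(database, key=lambda entry: math.dist(query, entry[0]))[:k]
    weights = [1.0 / (math.dist(query, g) + eps) for g, _ in ranked]
    total = sum(weights)
    dim = len(ranked[0][1])
    return [
        sum(w * crowd[i] for w, (_, crowd) in zip(weights, ranked)) / total
        for i in range(dim)
    ]


# Toy database of (gesture feature, crowd feature) pairs. The crowd vectors
# are made-up placeholders for GMM-derived crowd motion features.
database = [
    ([1.0, 0.0, 1.0], [1.0, 0.0]),  # two-finger swipe right -> crowd moves right
    ([0.0, 1.0, 1.0], [0.0, 1.0]),  # two-finger swipe up    -> crowd moves up
    ([0.0, 0.0, 2.0], [0.0, 0.0]),  # fingers spread apart   -> crowd disperses
]

# A diagonal two-finger swipe lies between "right" and "up".
diagonal = [[(0, 0), (1, 1)], [(0, 1), (1, 2)]]
q = gesture_feature(diagonal)
print(q)                                  # [1.0, 1.0, 1.0]
print(knn_interpolate(q, database, k=2))  # [0.5, 0.5]
```

Because the feature only summarizes the set of touch points (centroid and spread), a one-finger and a five-finger swipe map into the same feature space, which is the property the abstract asks of its gesture features. Inverse-distance weighting lets an exact database match dominate while still blending neighbouring examples for novel gestures.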


Supporting Grants

Received from Northumbria University, UK, 2015-2018
