Resolving Hand-Object Occlusion for Mixed Reality with Joint Deep Learning and Model Optimization

Qi Feng, Hubert P. H. Shum and Shigeo Morishima

Computer Animation and Virtual Worlds (CAVW) - Proceedings of the 2020 International Conference on Computer Animation and Social Agents (CASA), 2020

Impact Factor: 1.7† Citation: 10#

Abstract

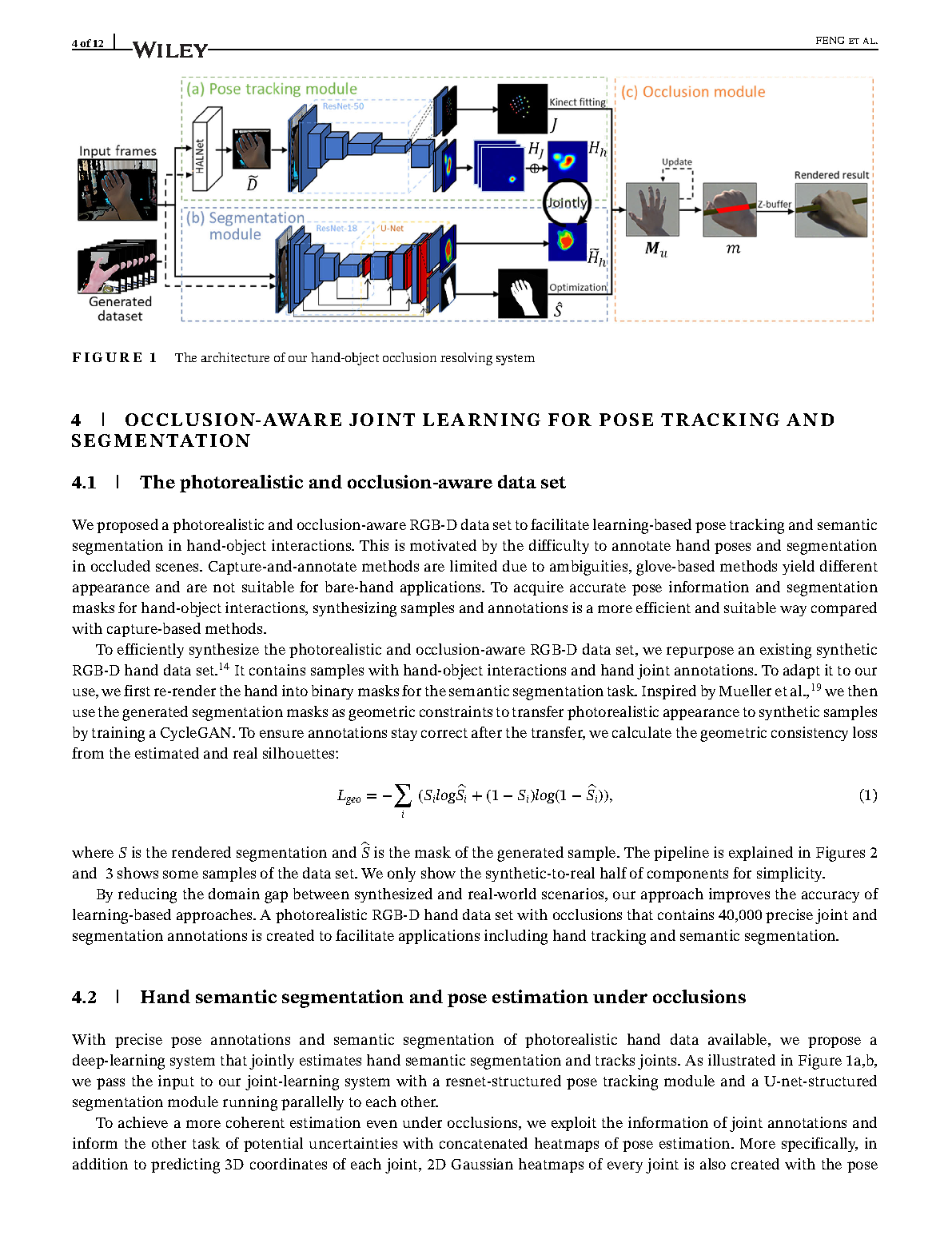

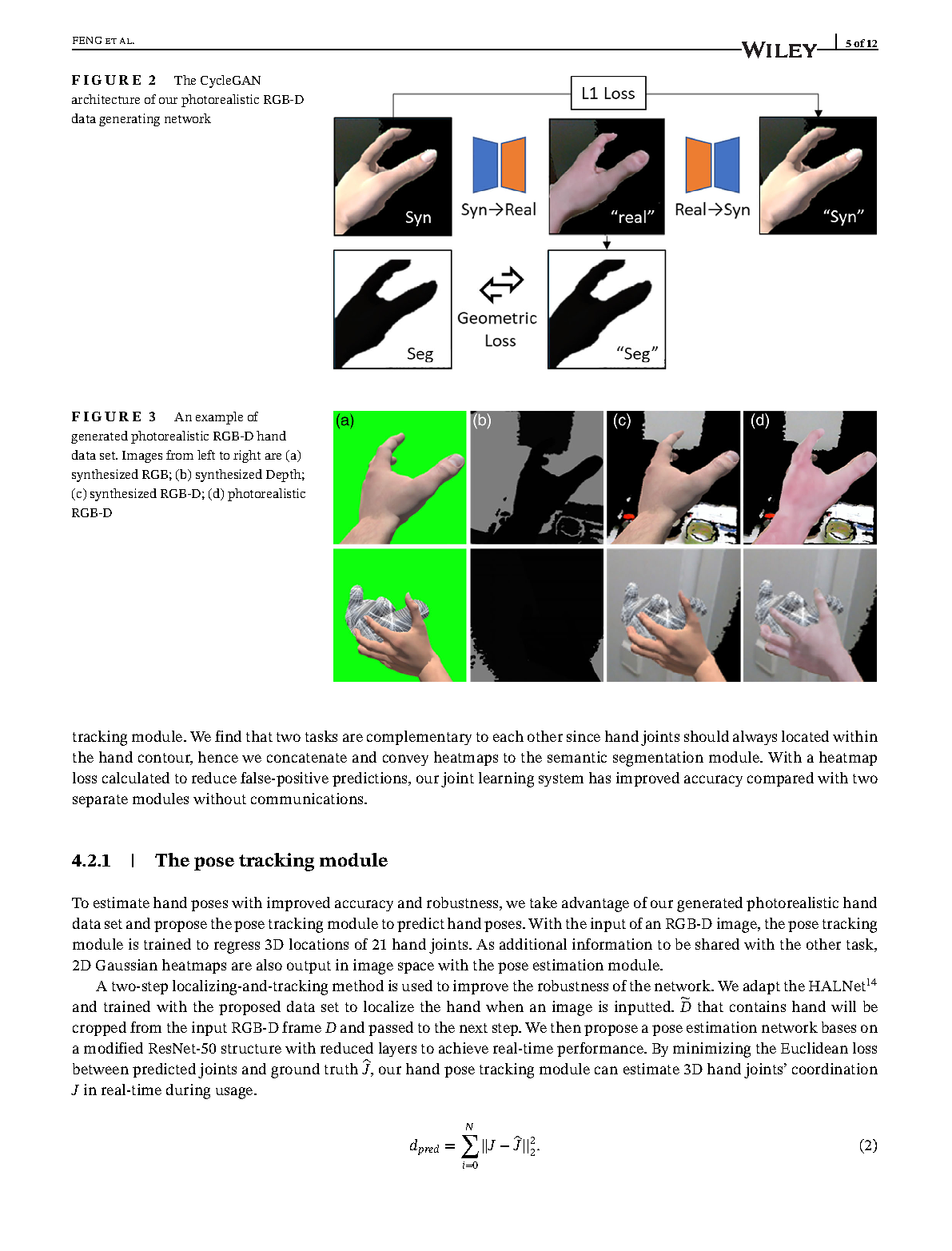

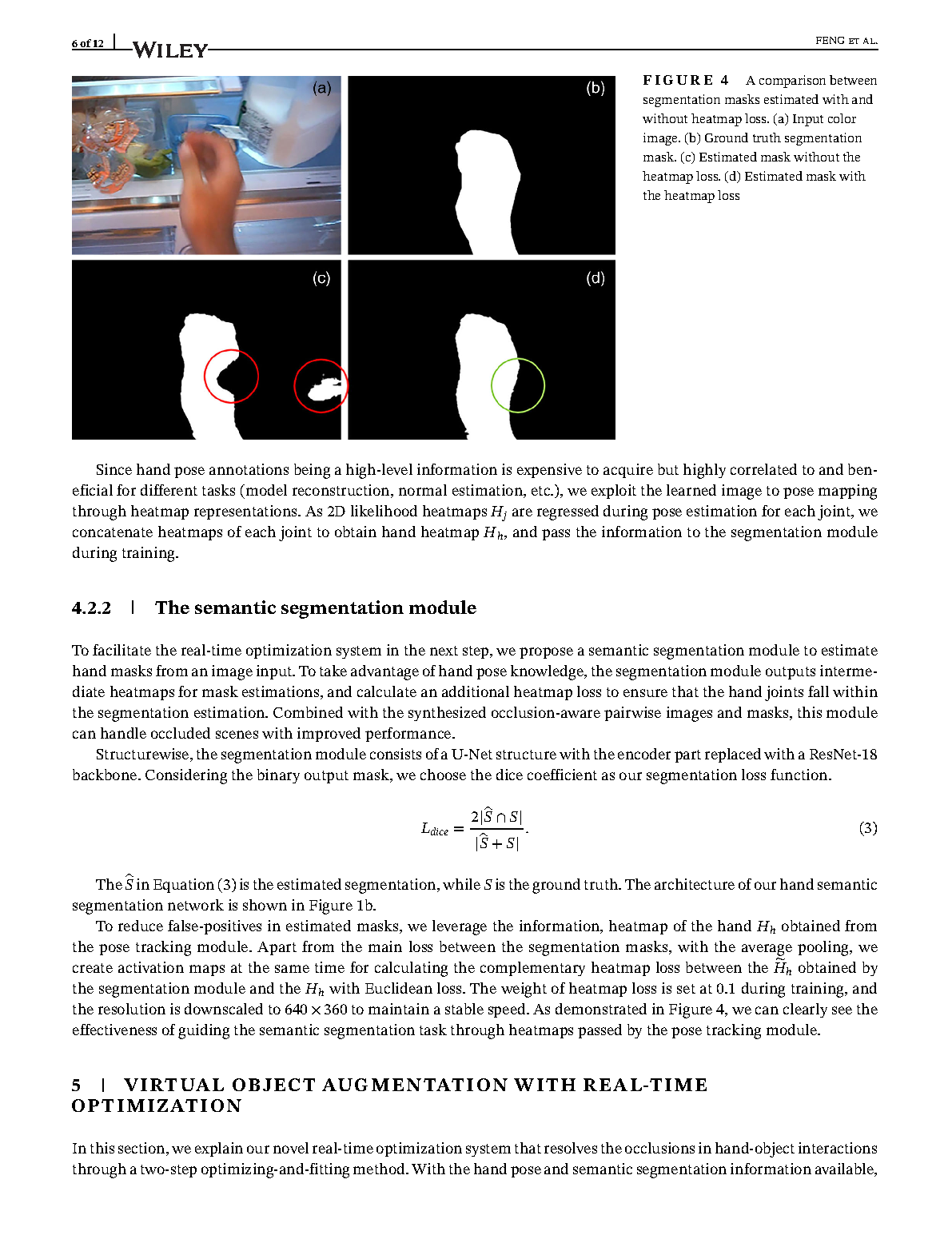

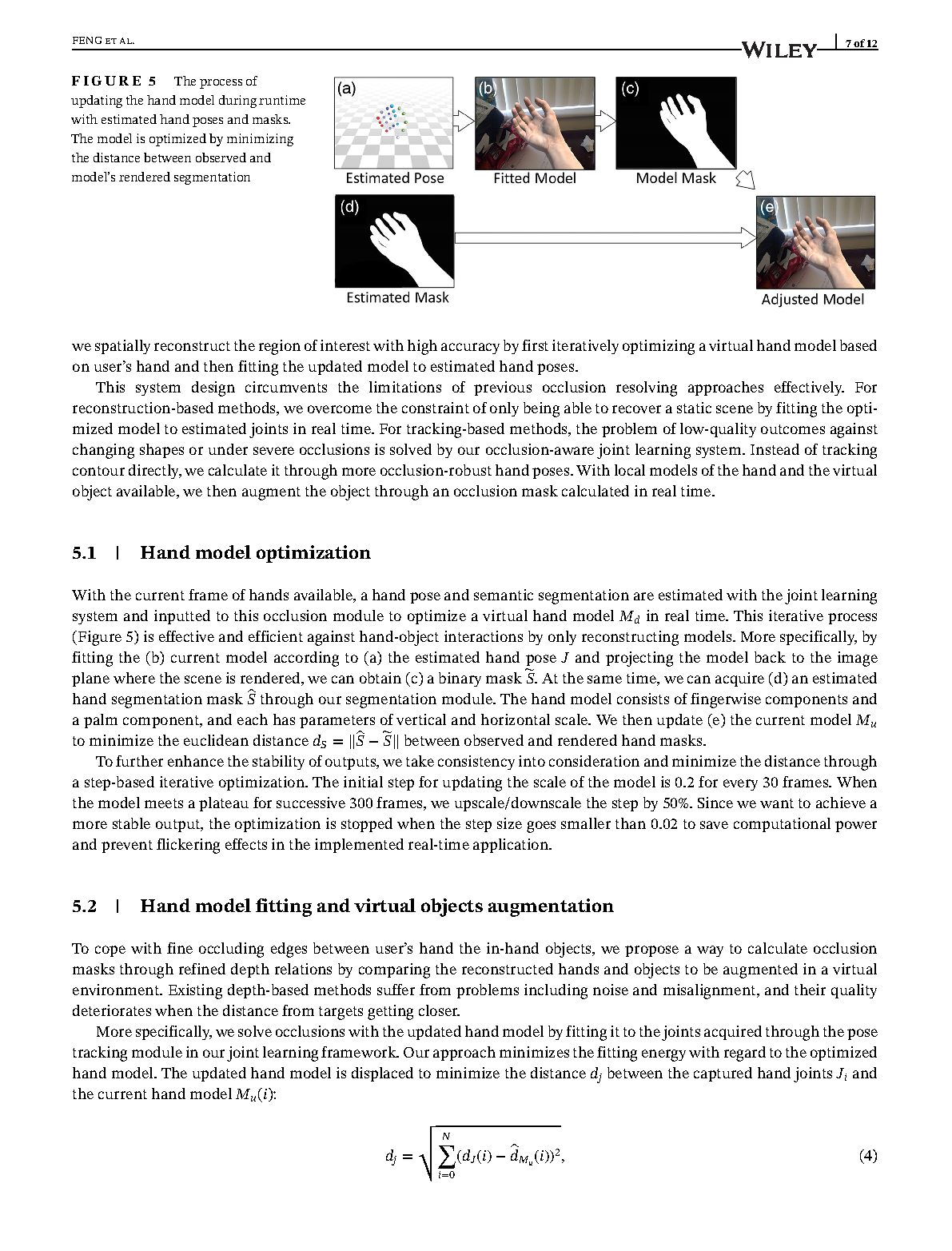

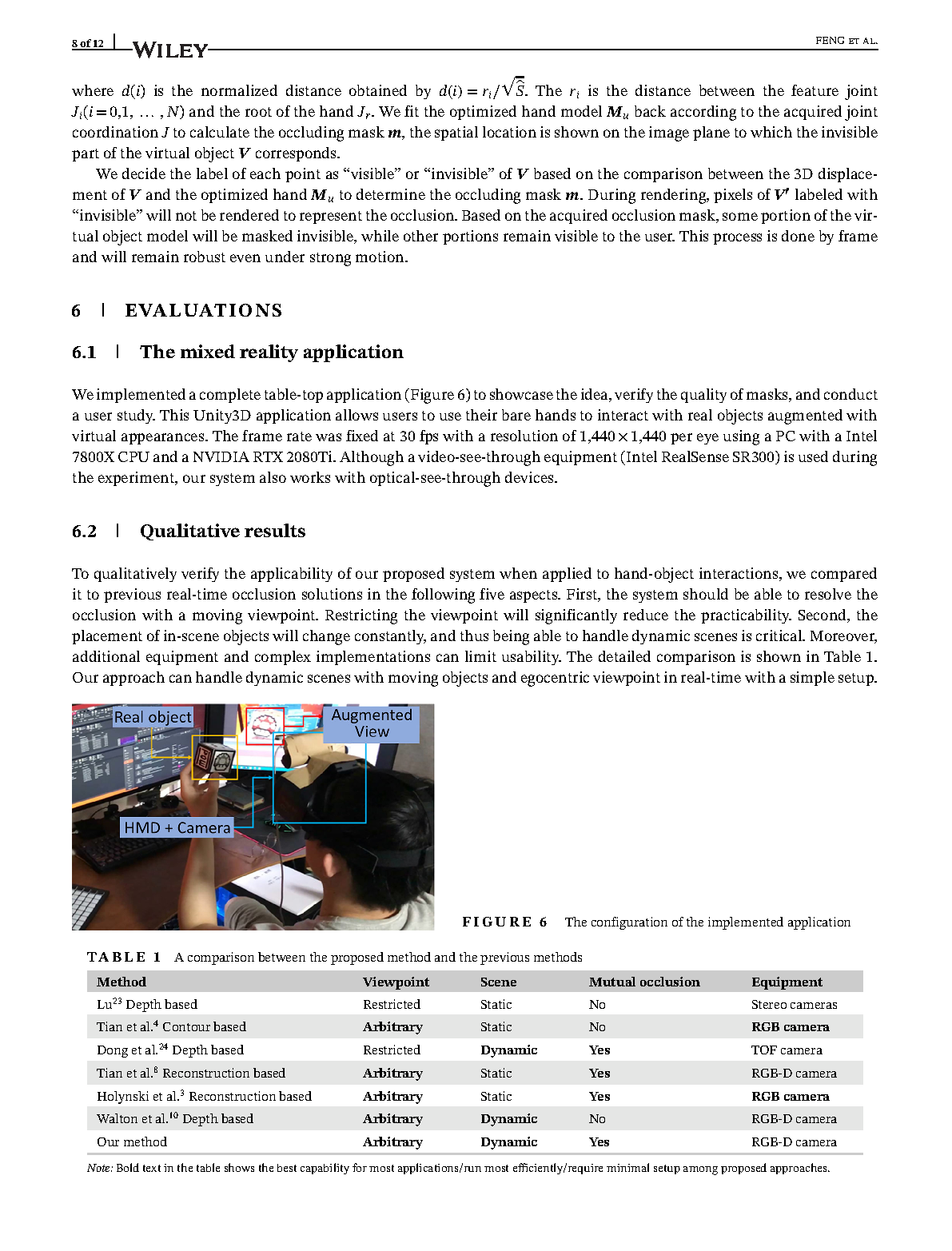

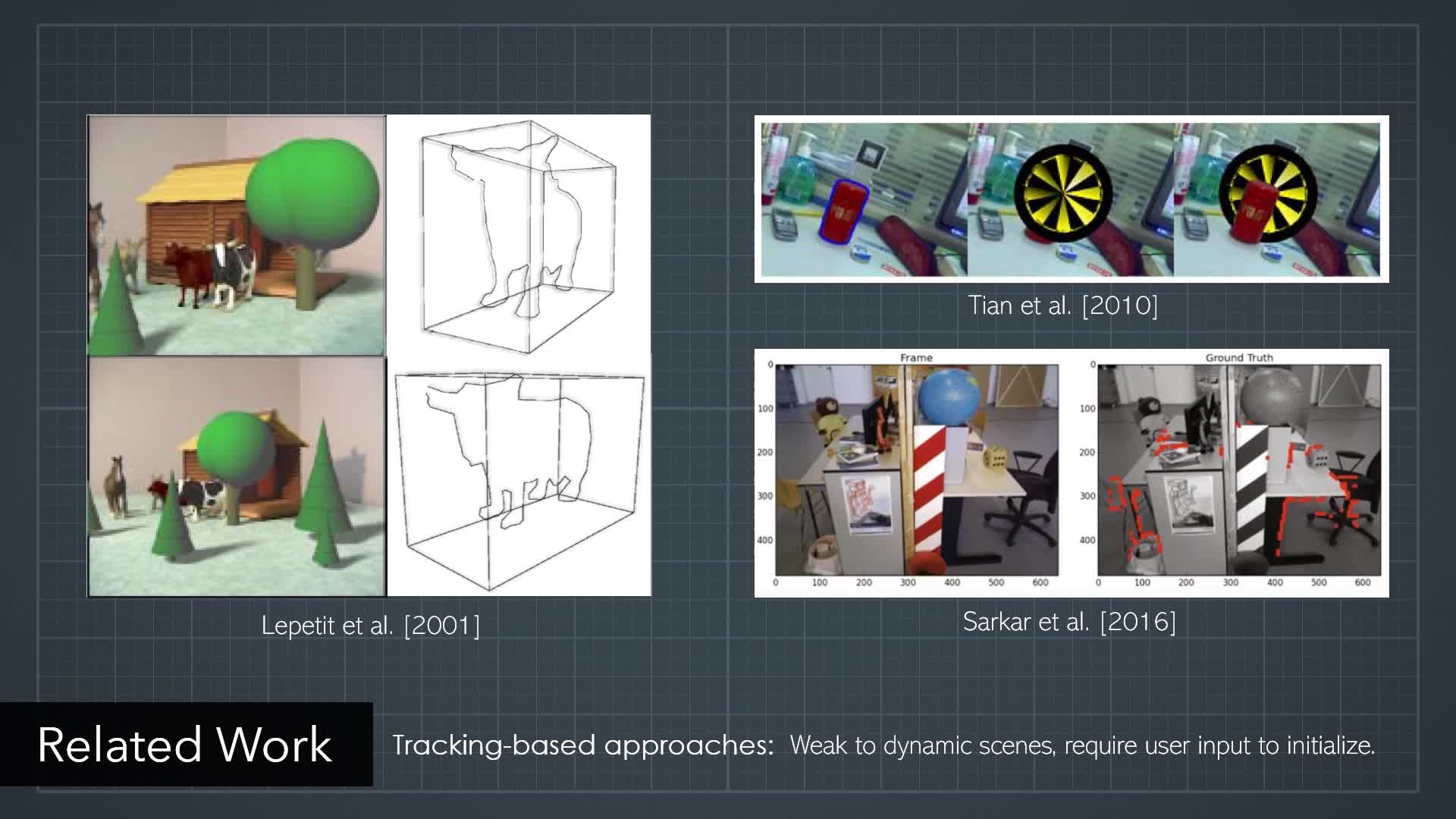

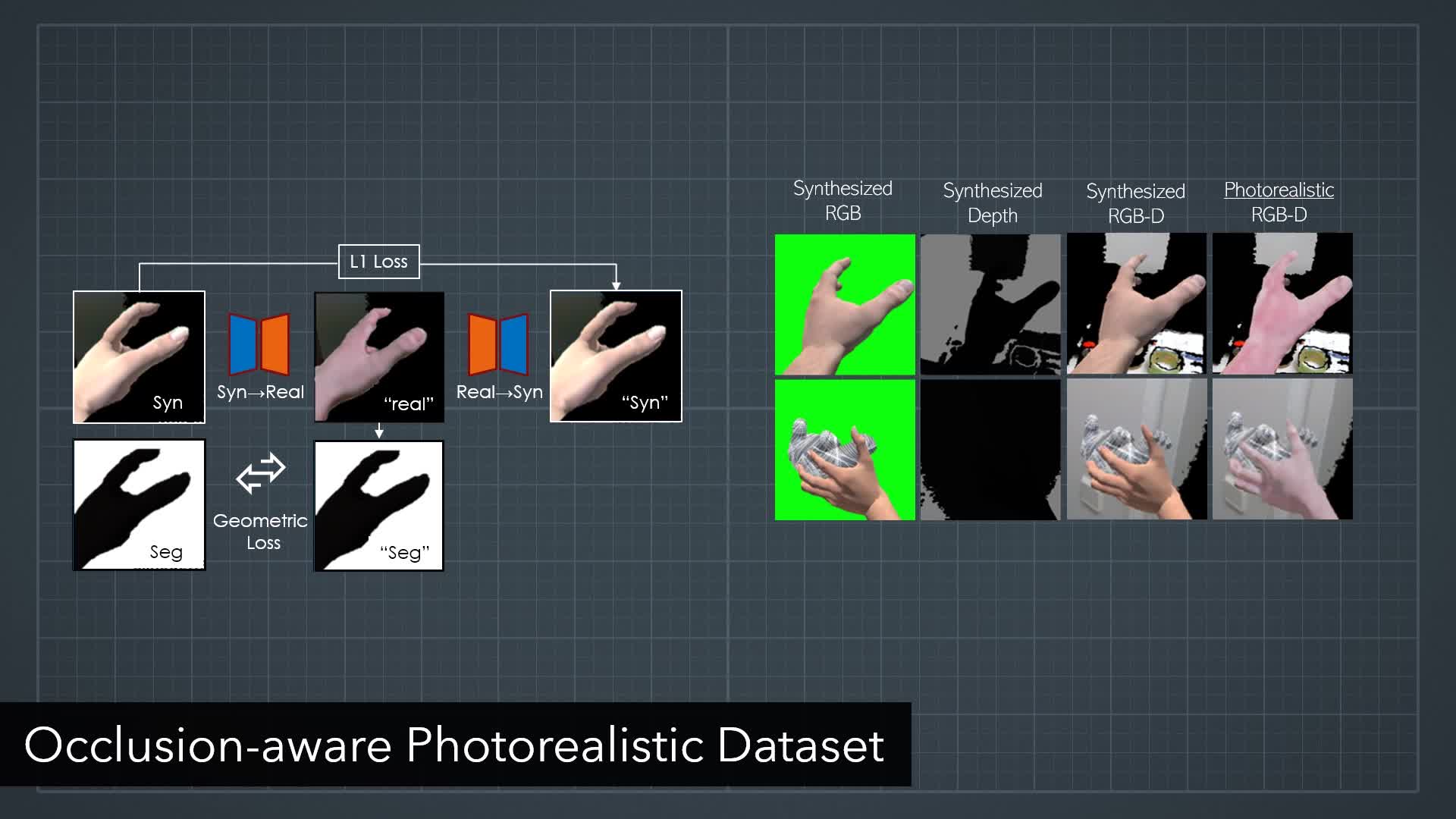

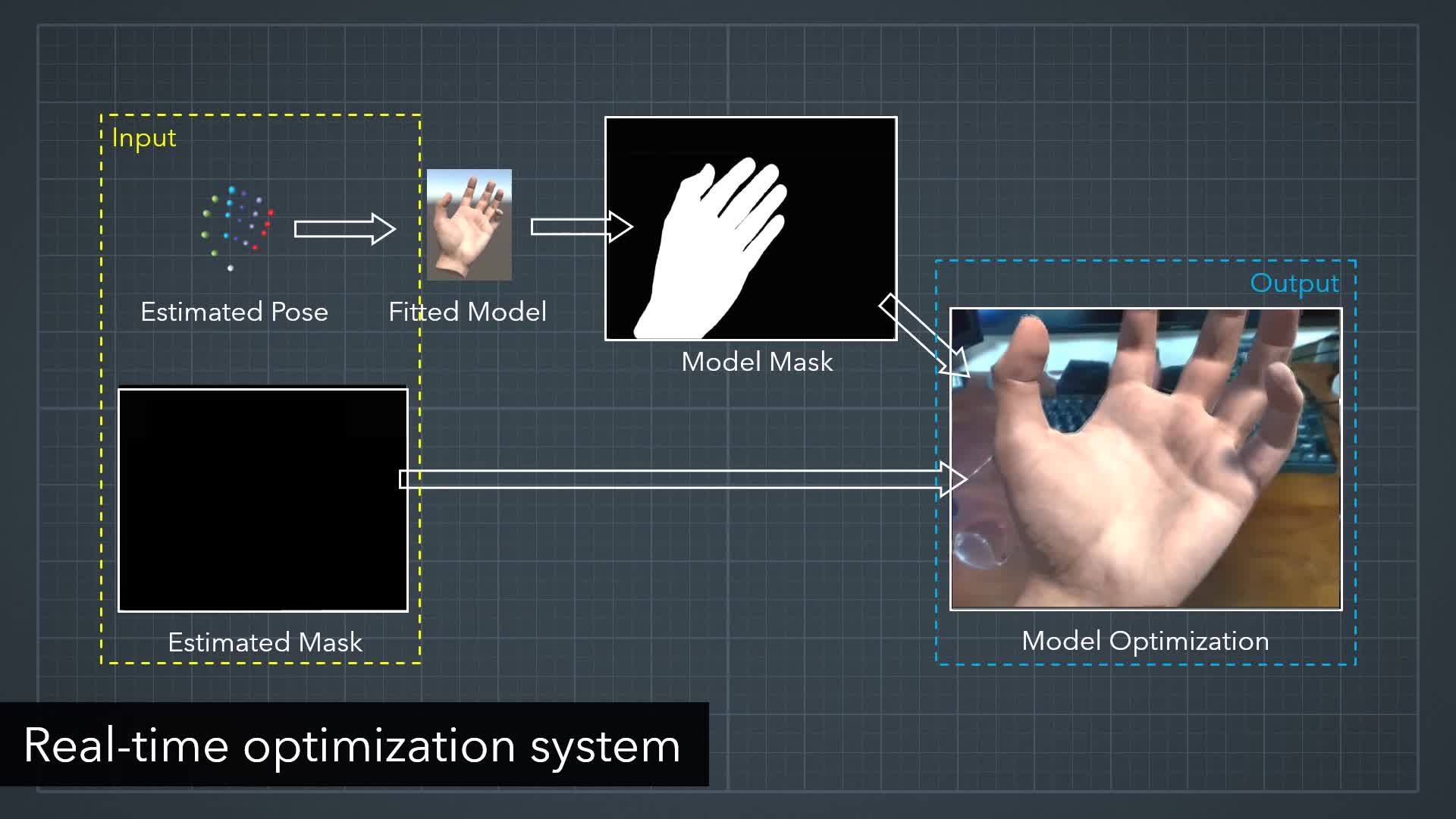

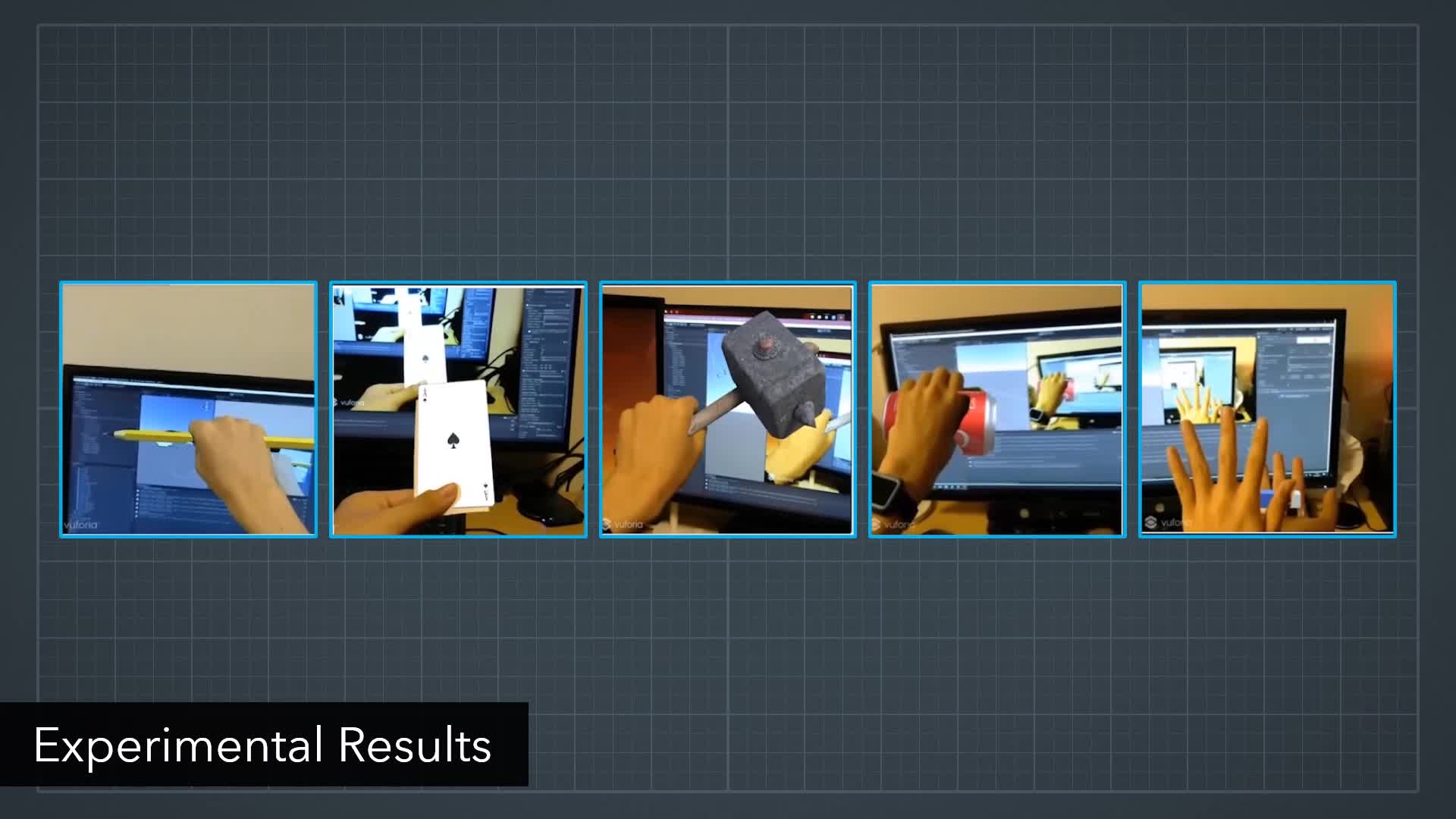

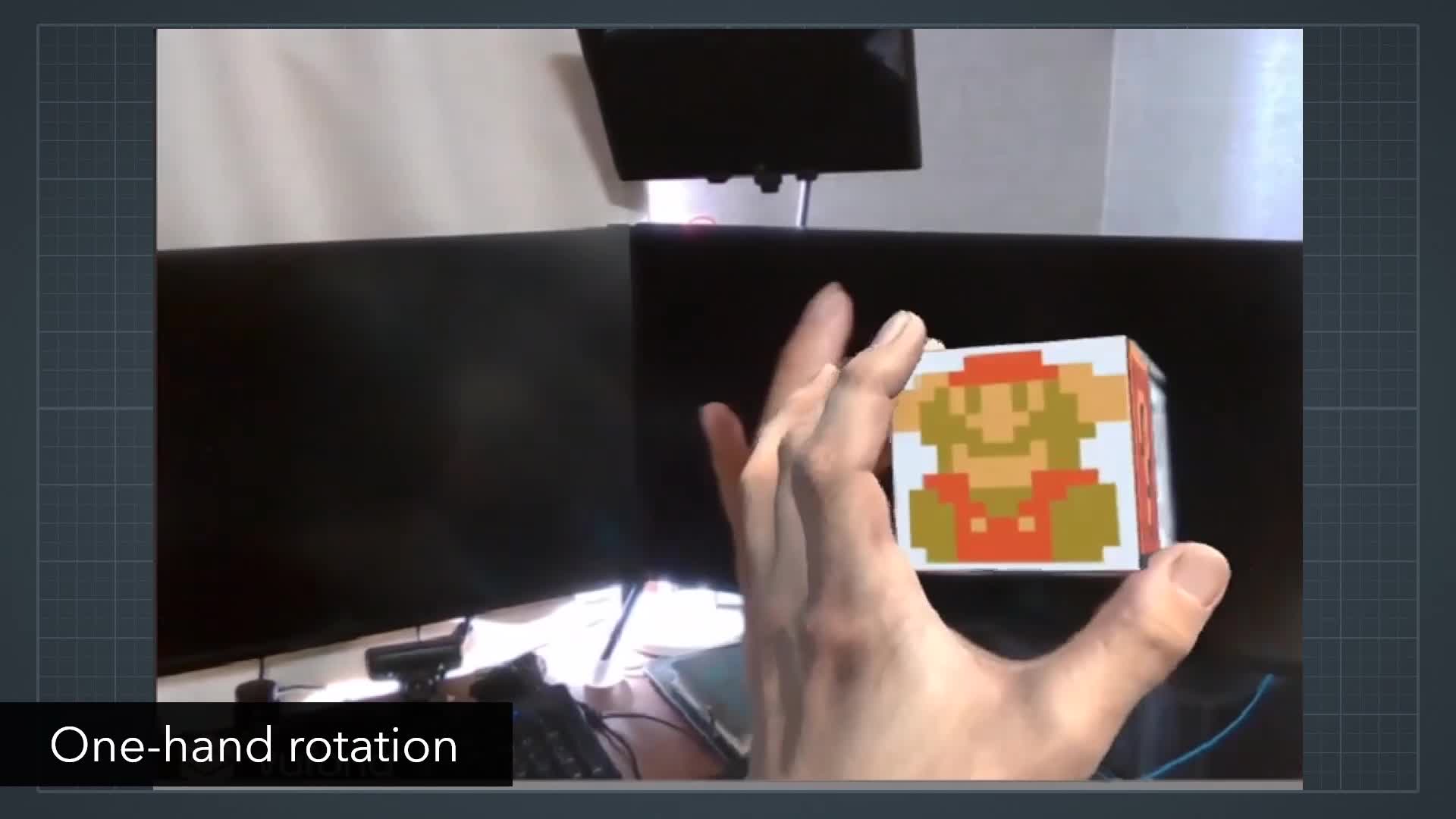

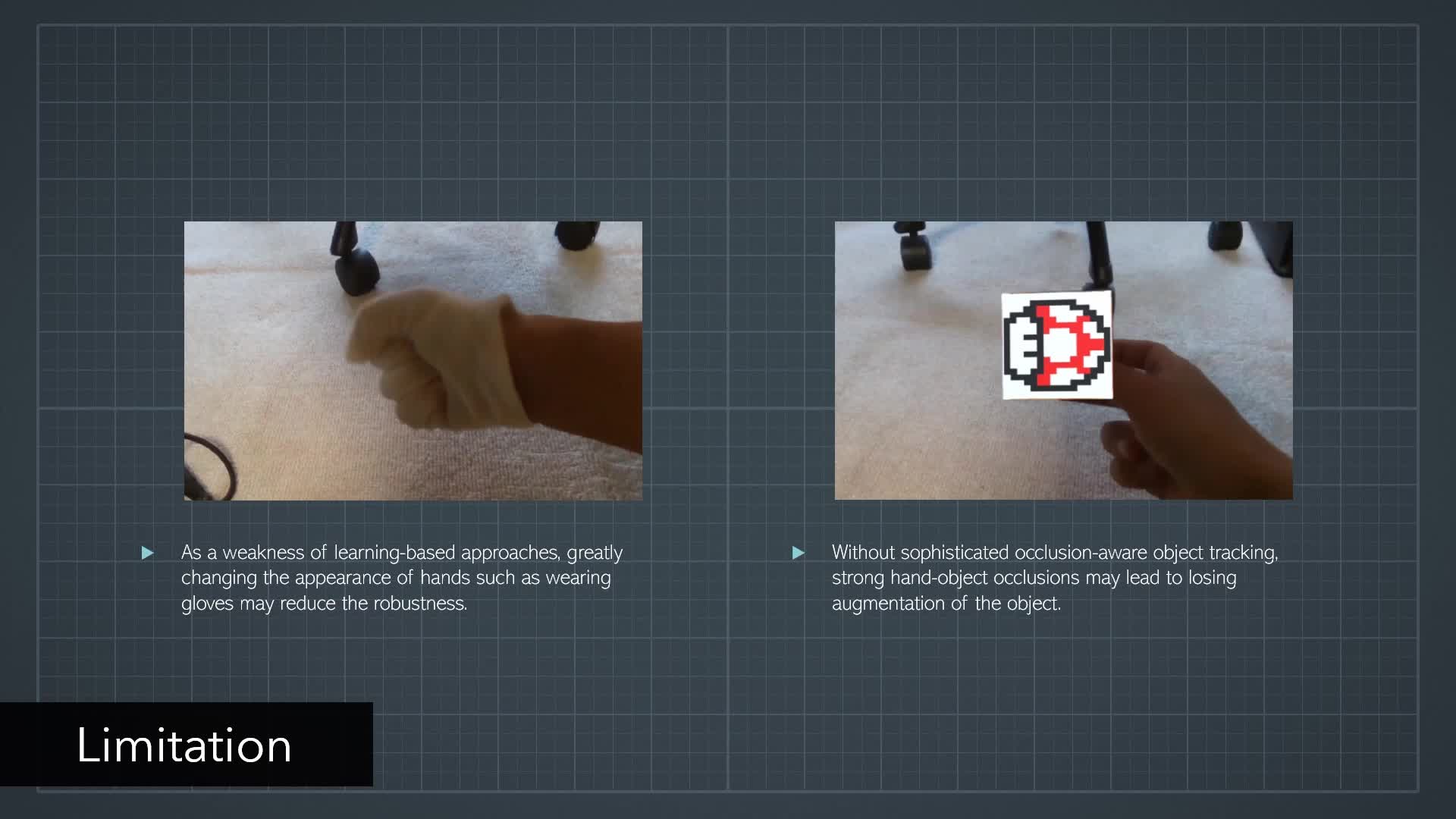

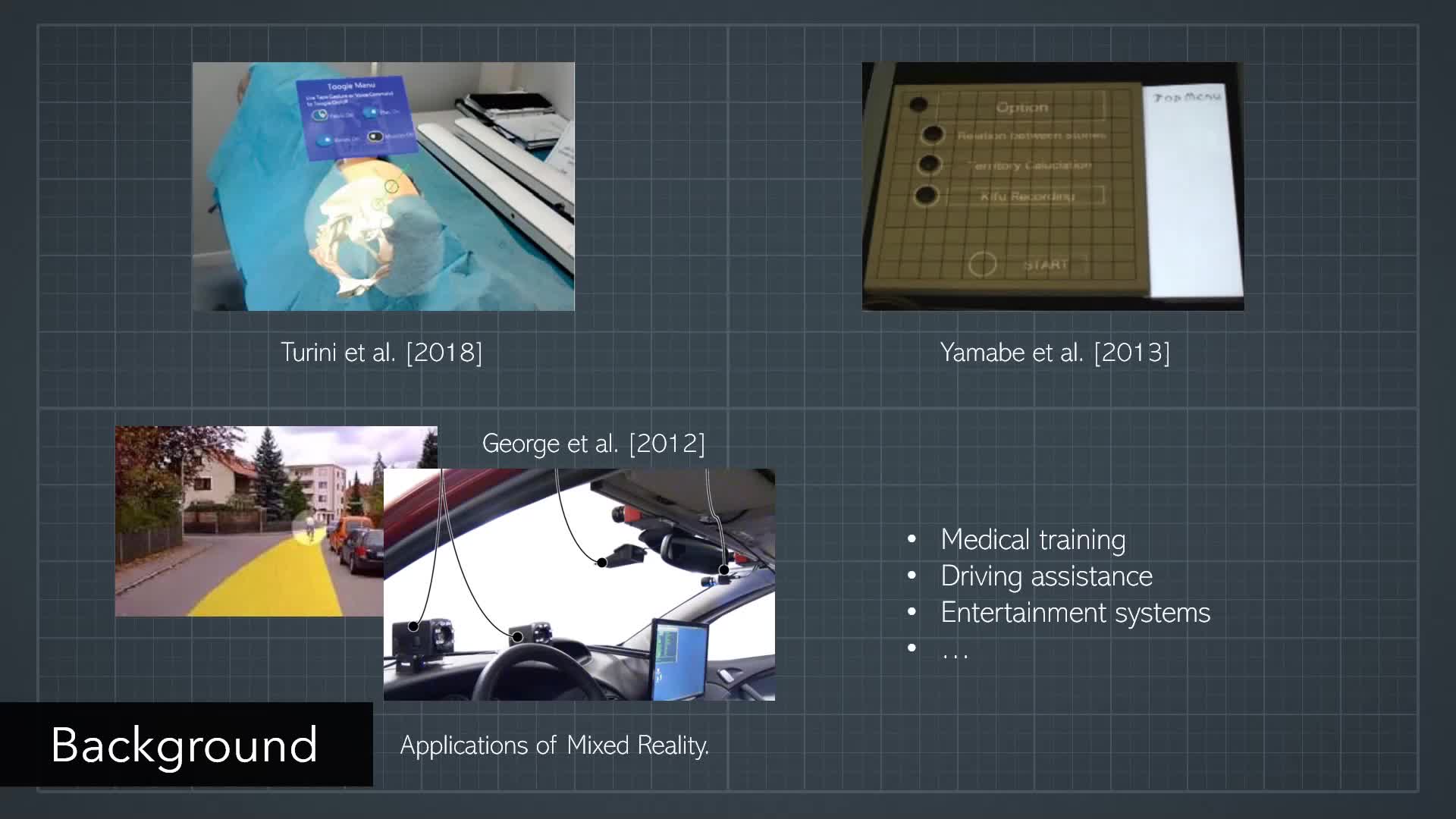

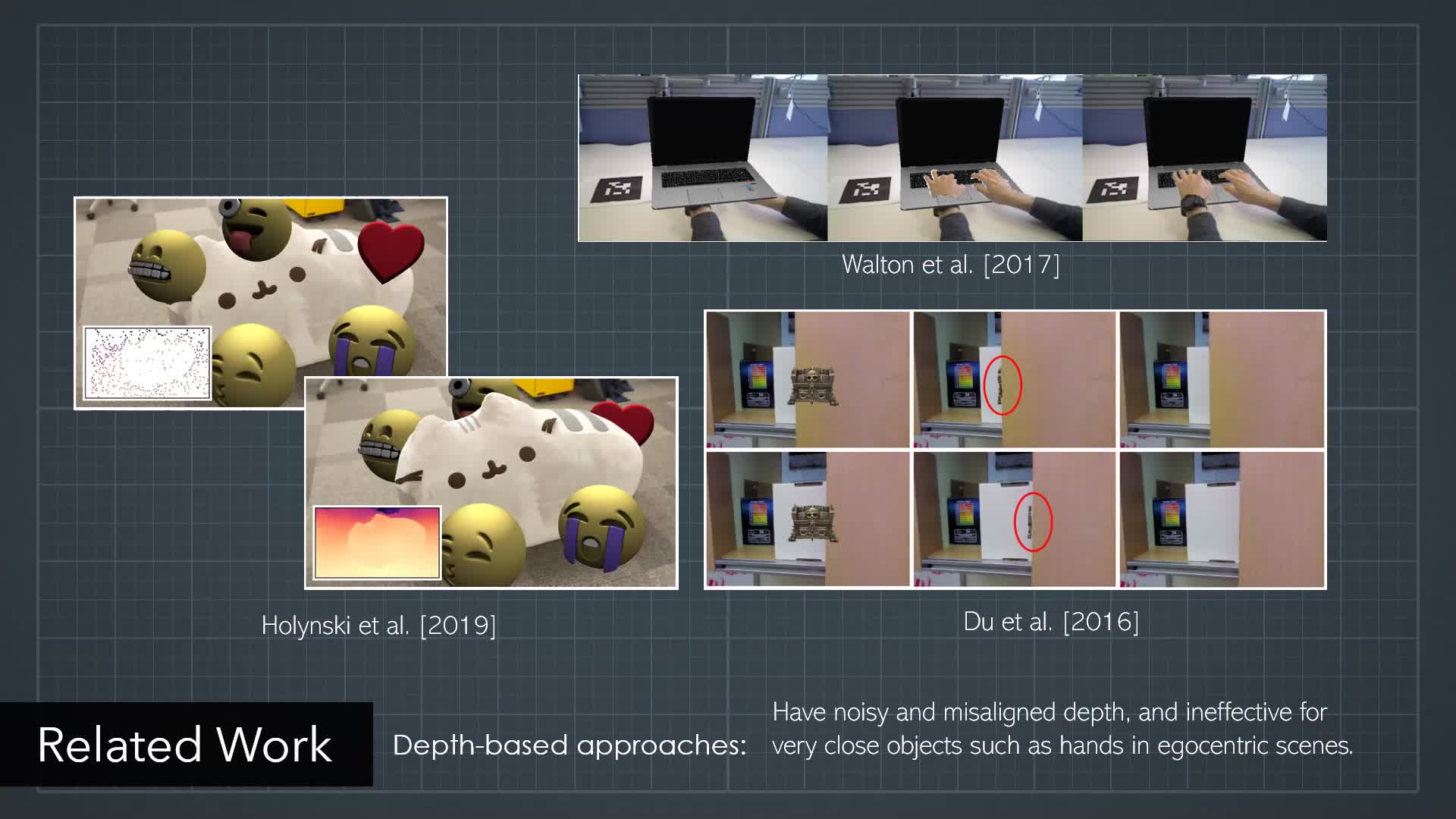

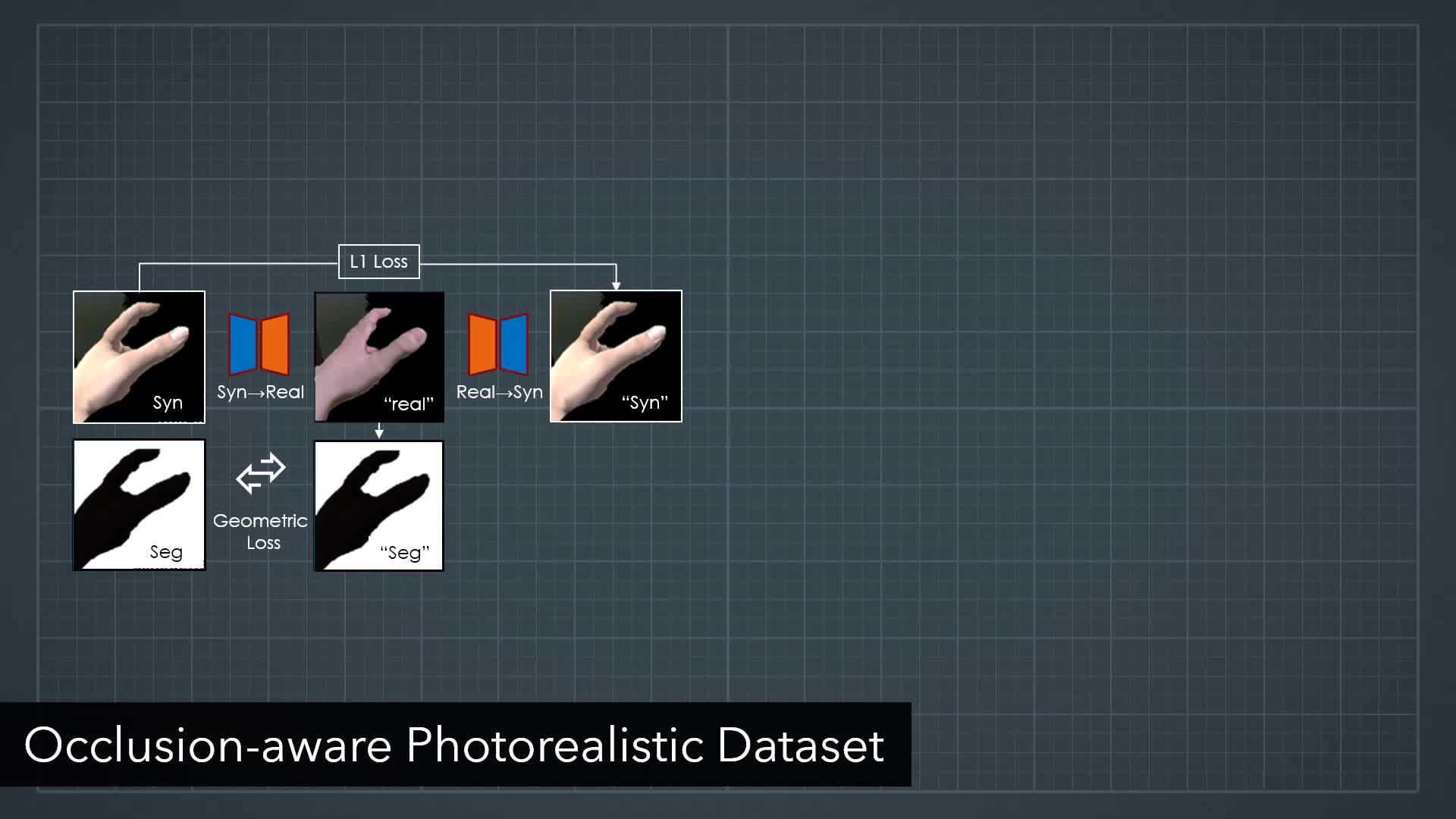

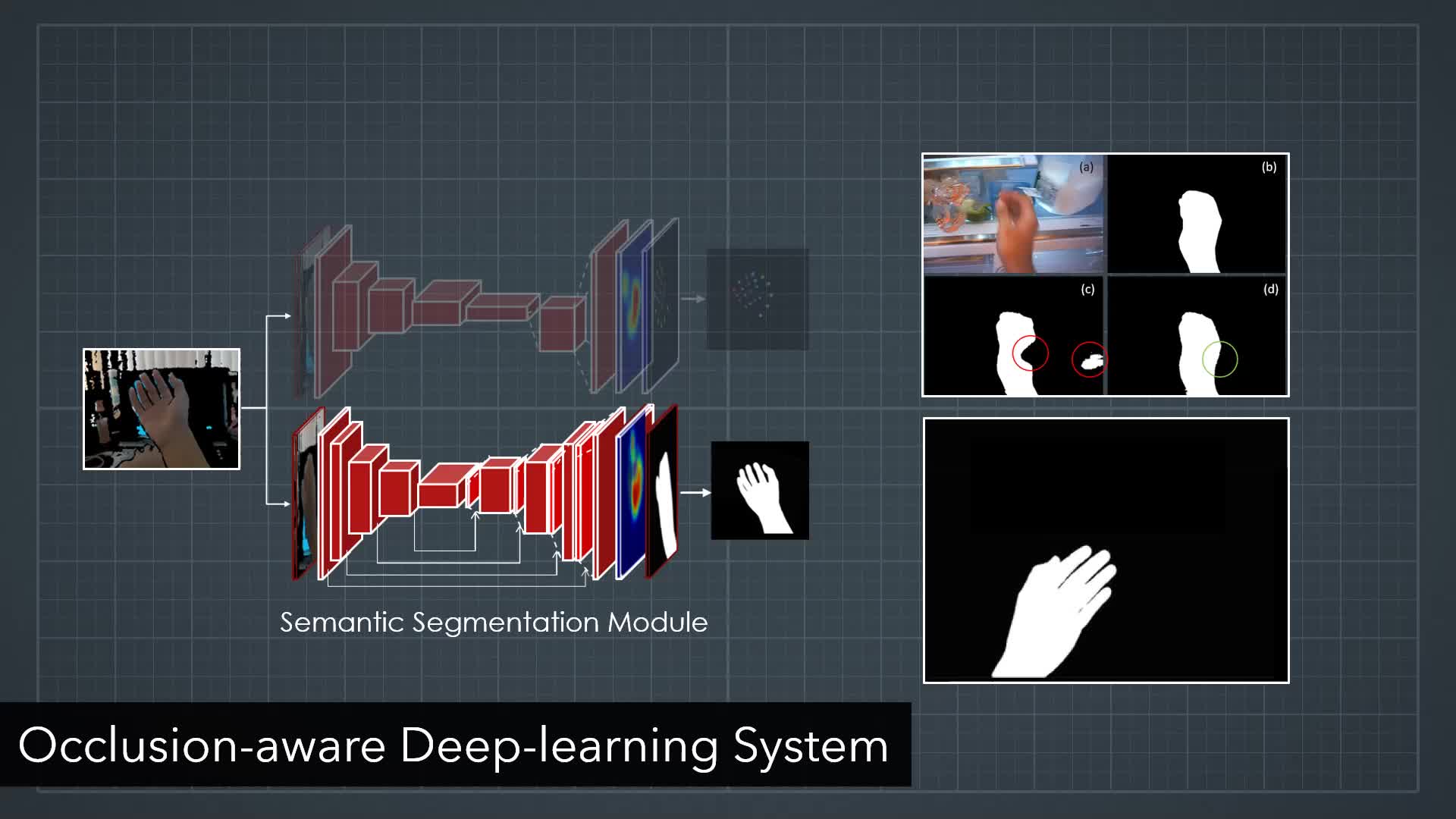

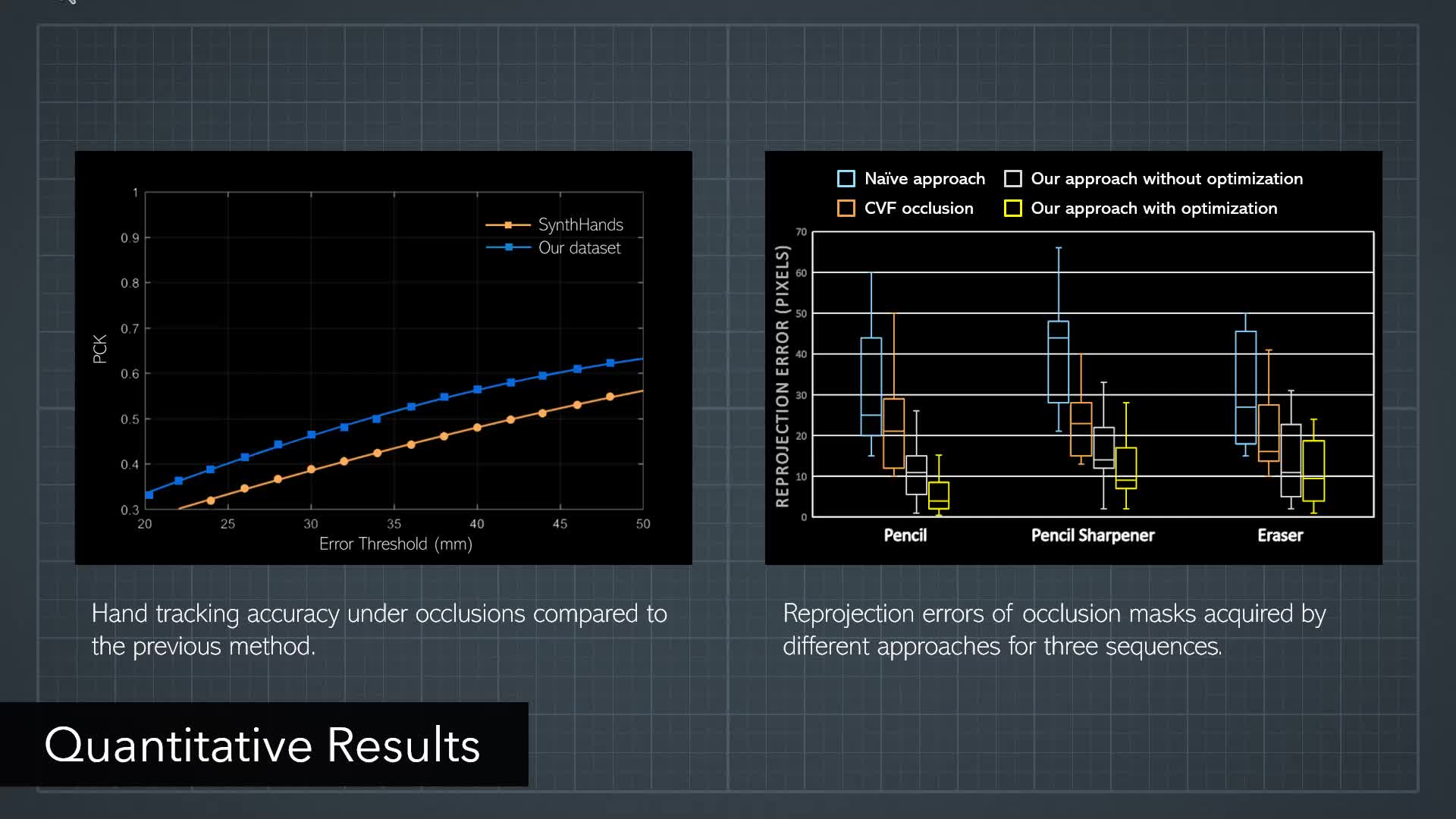

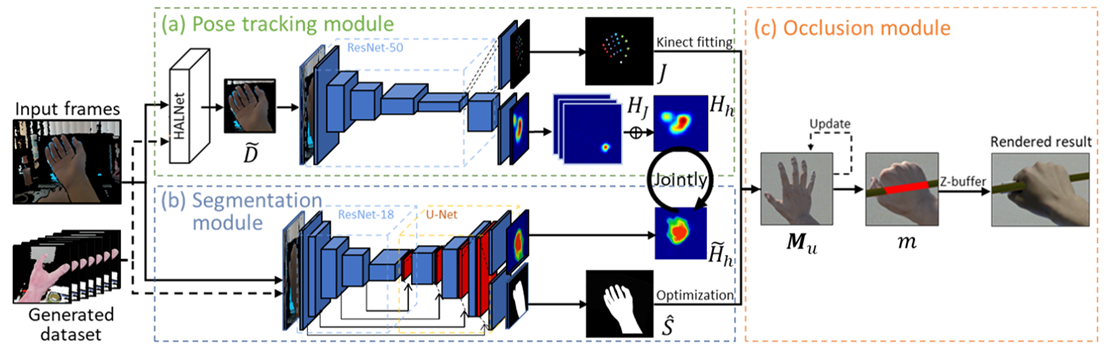

By overlaying virtual imagery onto the real world, mixed reality facilitates diverse applications and has drawn increasing attention. Enhancing physical in-hand objects with a virtual appearance is a key component of many applications that require users to interact with tools, such as surgery simulations. However, complex hand articulations and severe hand-object occlusions make resolving occlusions in hand-object interactions challenging. Traditional tracking-based approaches are limited by strong ambiguities from occlusions and changing shapes, while reconstruction-based methods handle dynamic scenes poorly. In this paper, we propose a novel real-time optimization system that resolves hand-object occlusions by spatially reconstructing the scene from estimated hand joints and masks. To acquire accurate results, we propose a joint learning process that shares information between two models and jointly estimates hand poses and semantic segmentation. To facilitate the joint learning system and improve its accuracy under occlusions, we propose an occlusion-aware RGB-D hand dataset that mitigates the ambiguity through precise annotations and photorealistic appearance. Evaluations show more consistent overlays than existing methods in the literature, and a user study verifies a more realistic experience.