STGAE: Spatial Temporal Graph Auto-Encoder for Hand Motion Denoising

Kanglei Zhou, Jiaying Chen, Hubert P. H. Shum, Frederick W. B. Li and Xiaohui Liang

Proceedings of the 2021 IEEE International Symposium on Mixed and Augmented Reality (ISMAR), 2021

Core A* Conference, Citations: 21

Abstract

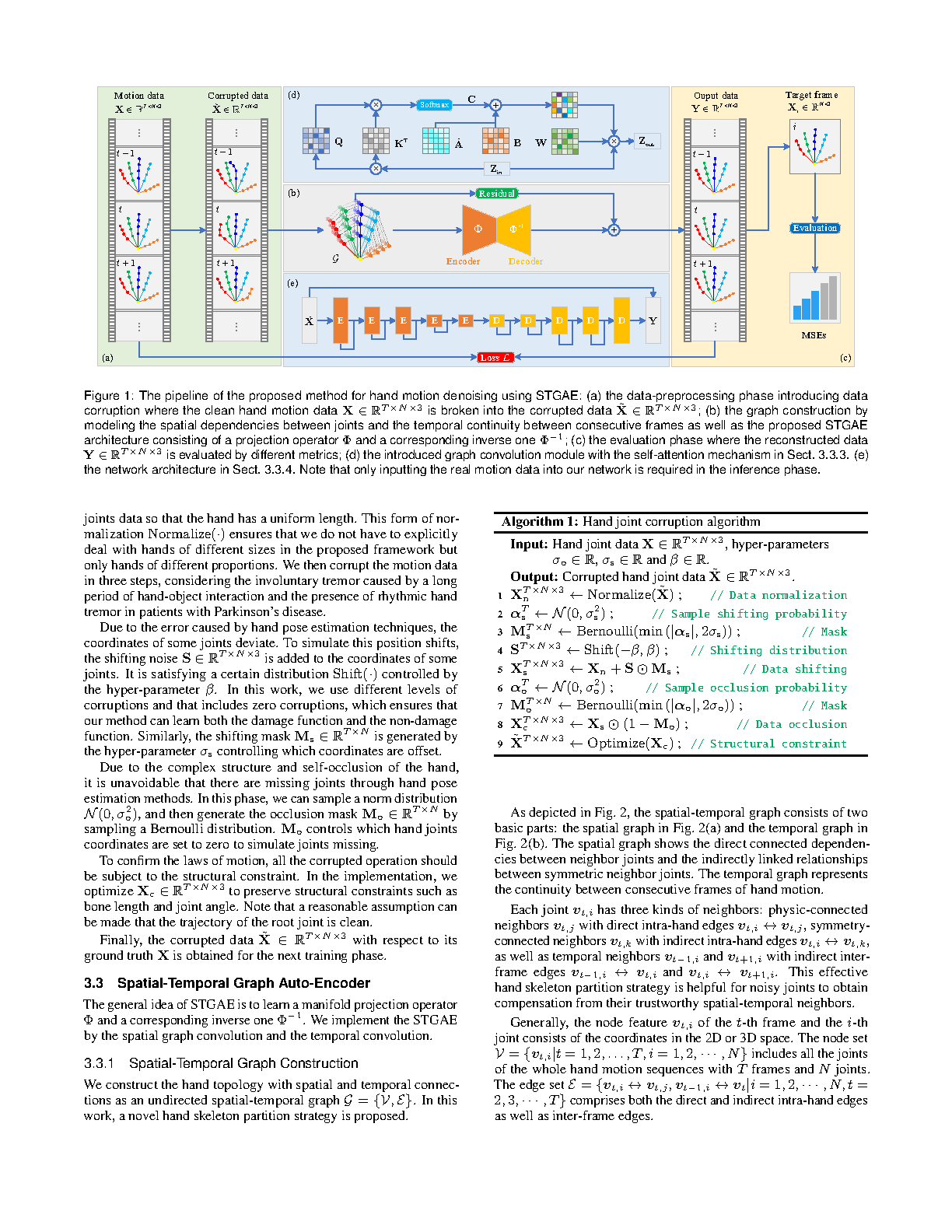

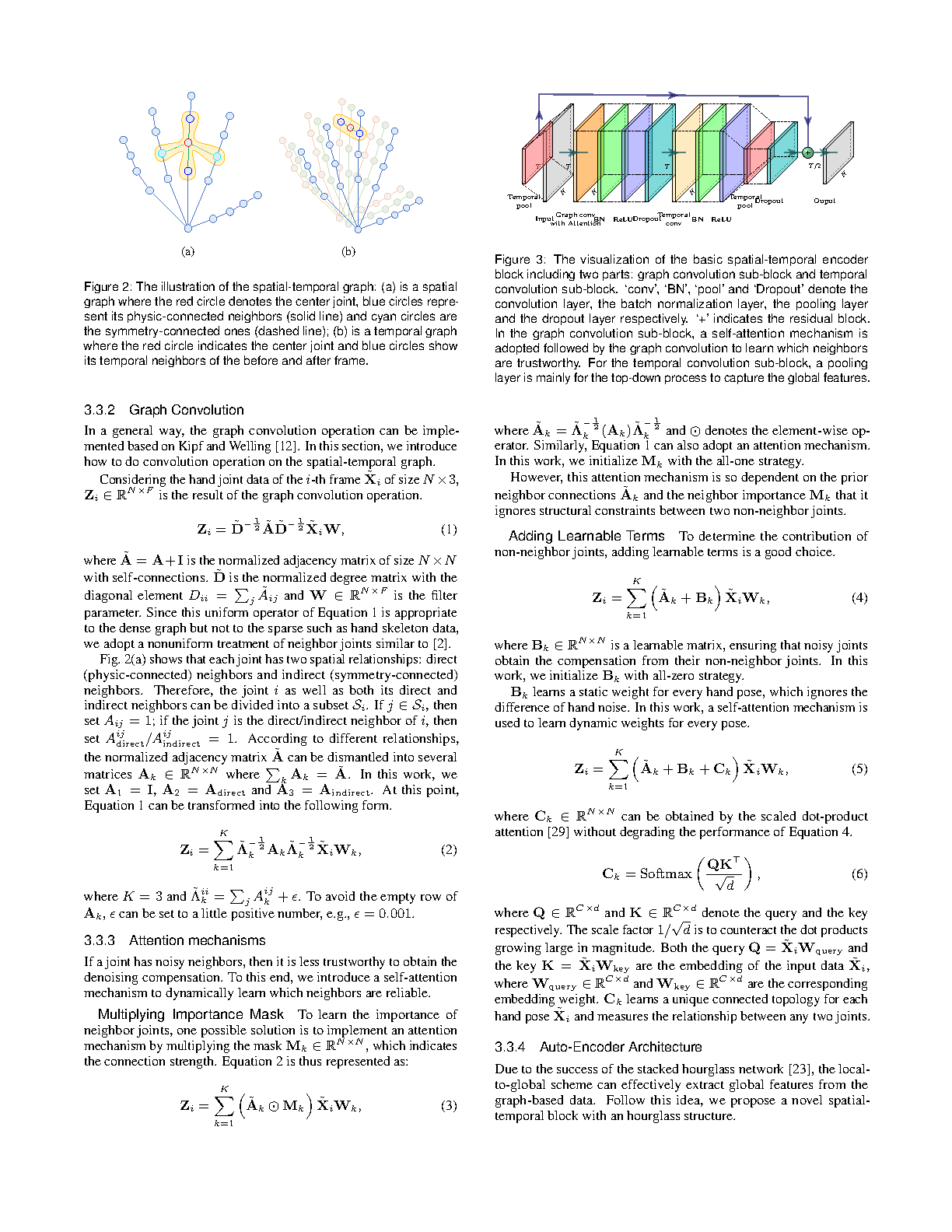

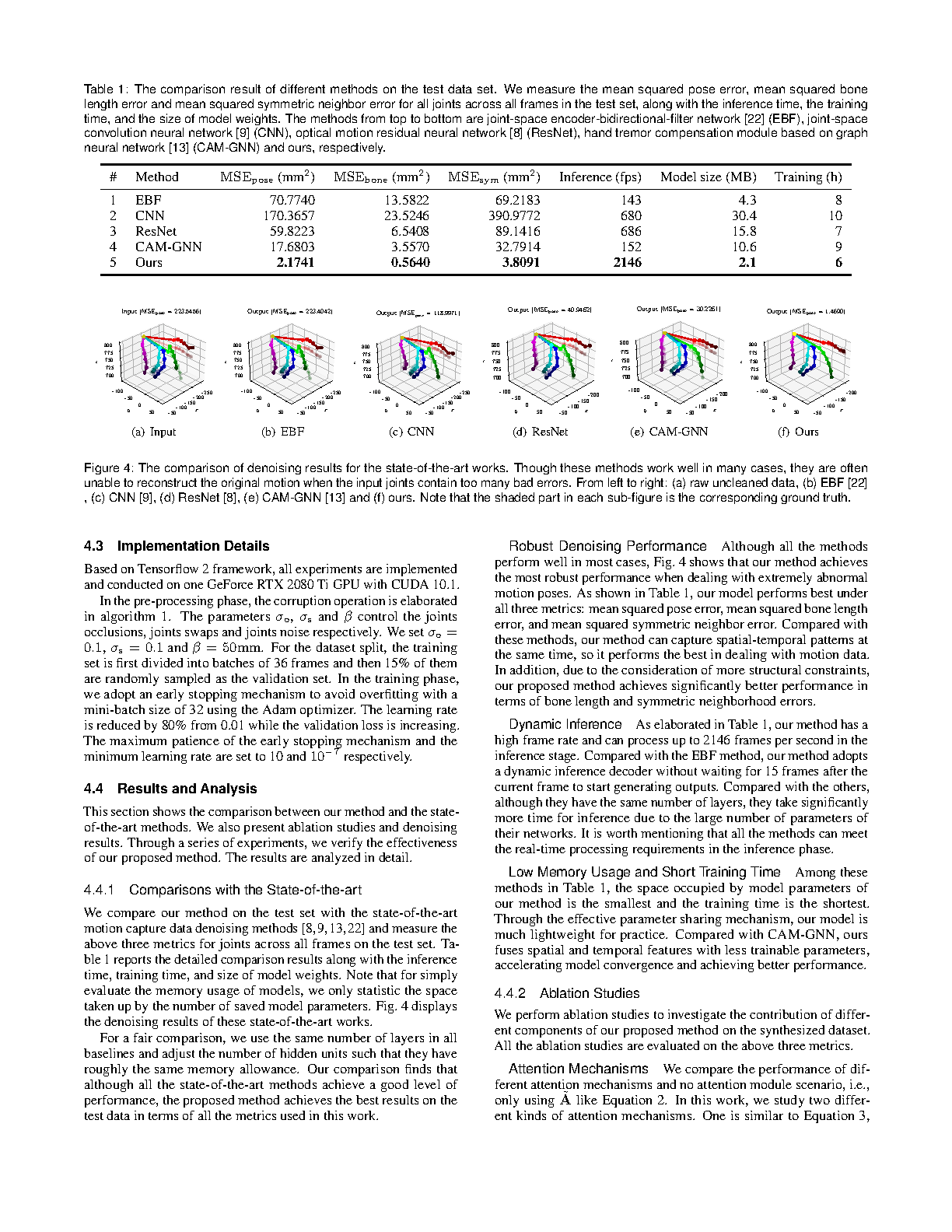

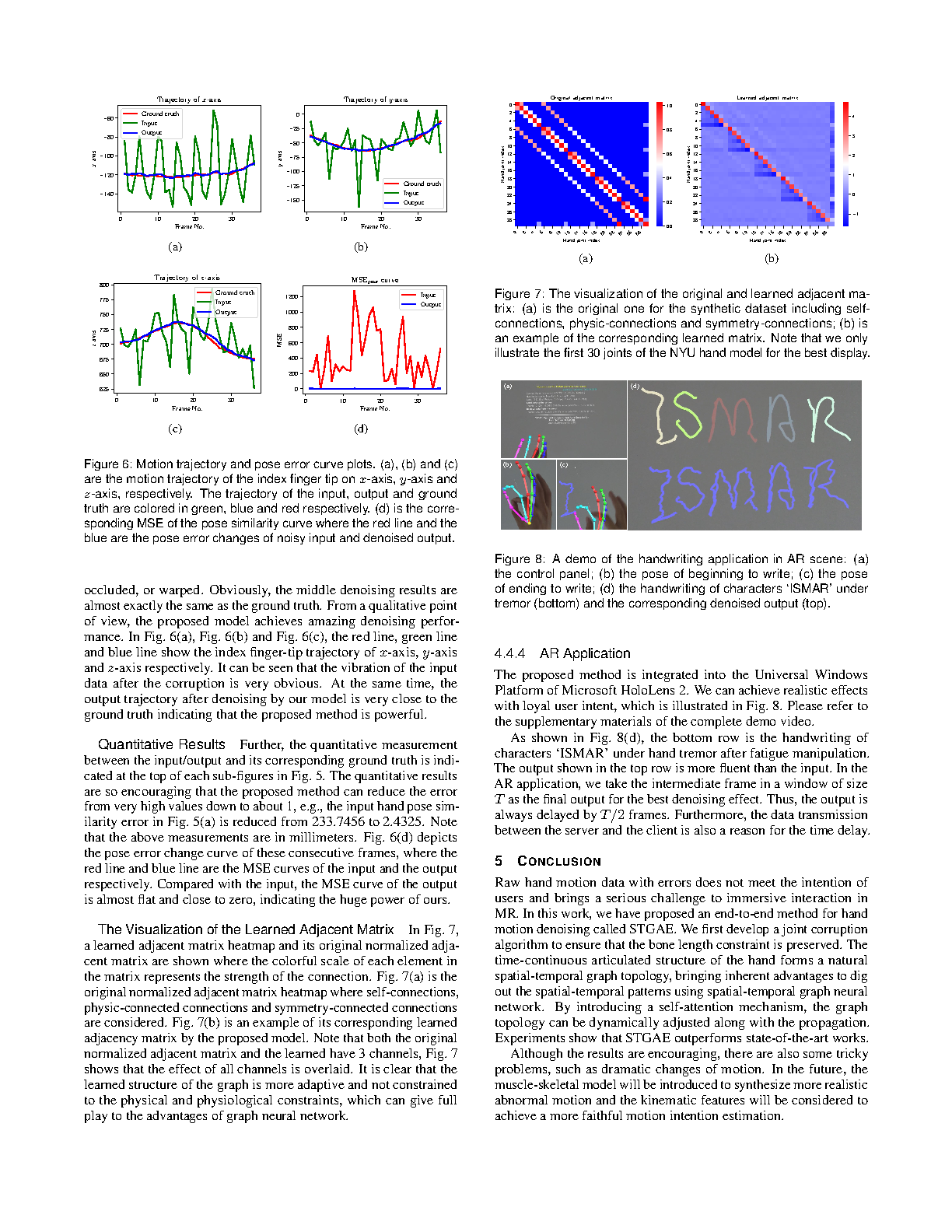

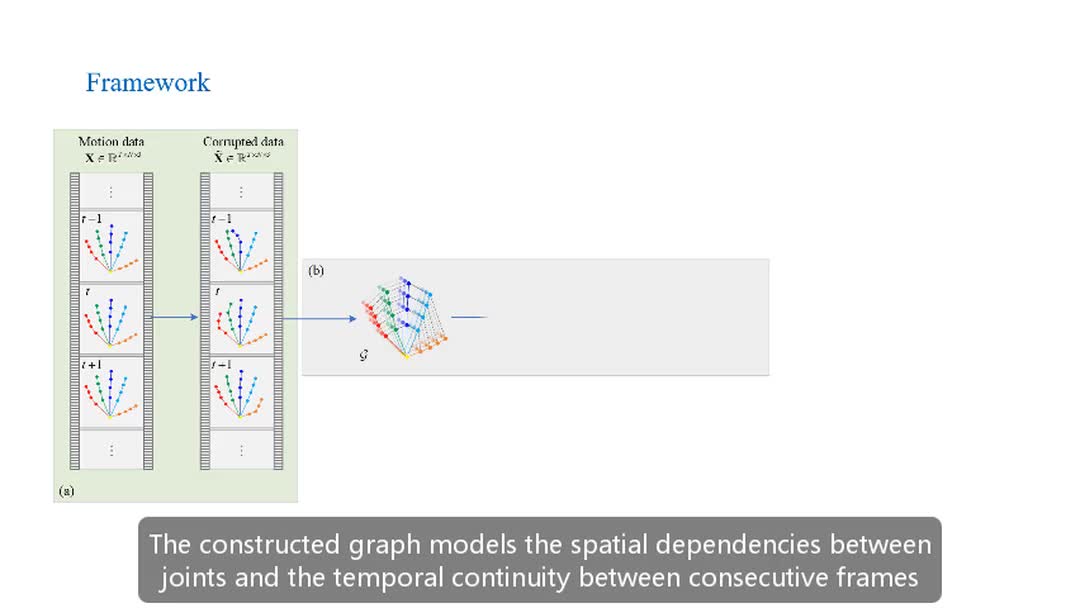

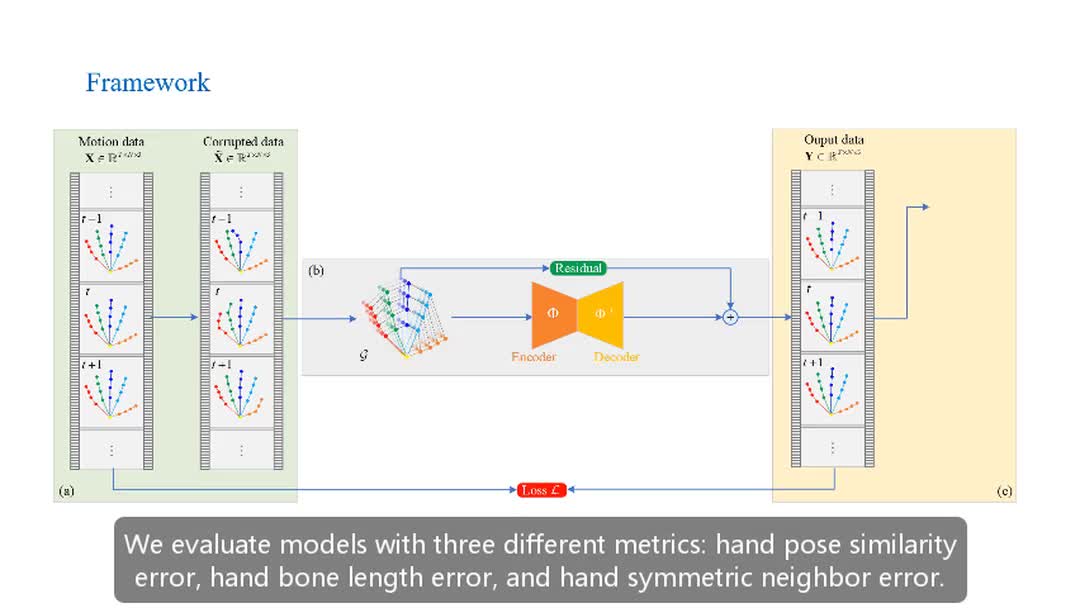

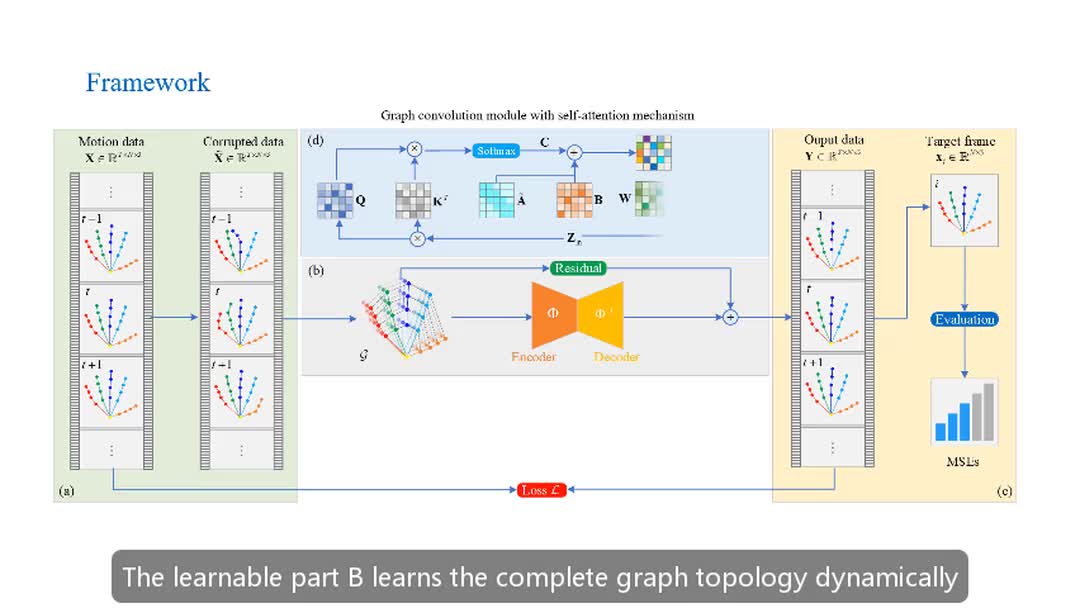

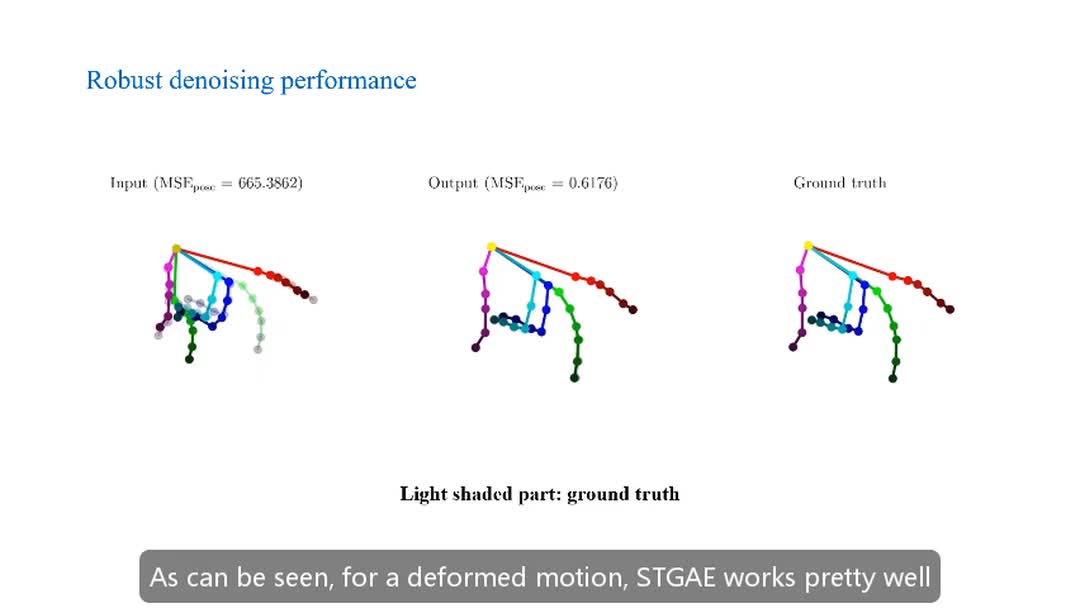

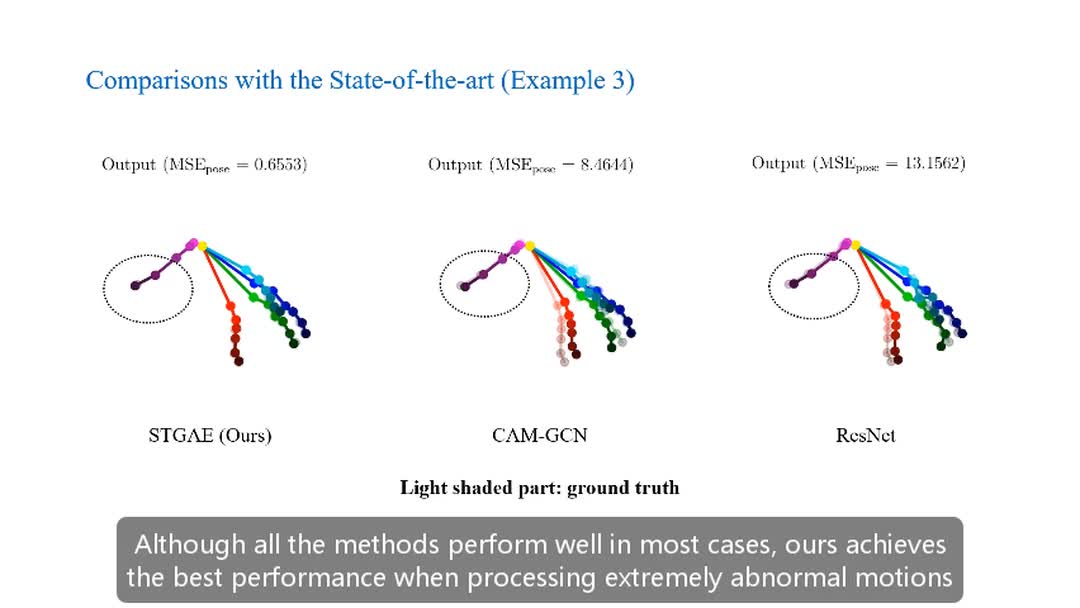

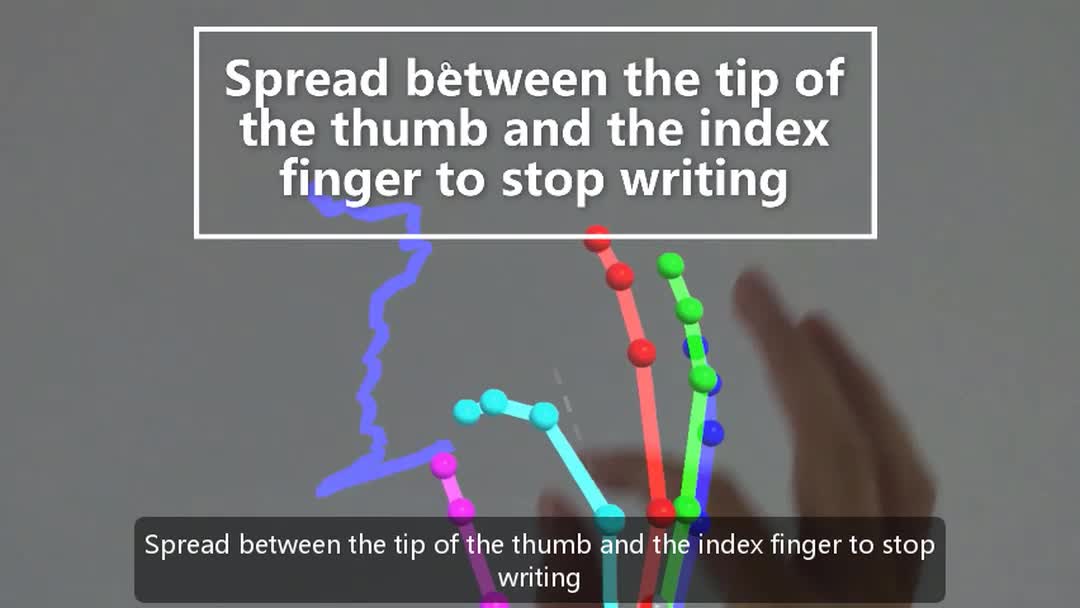

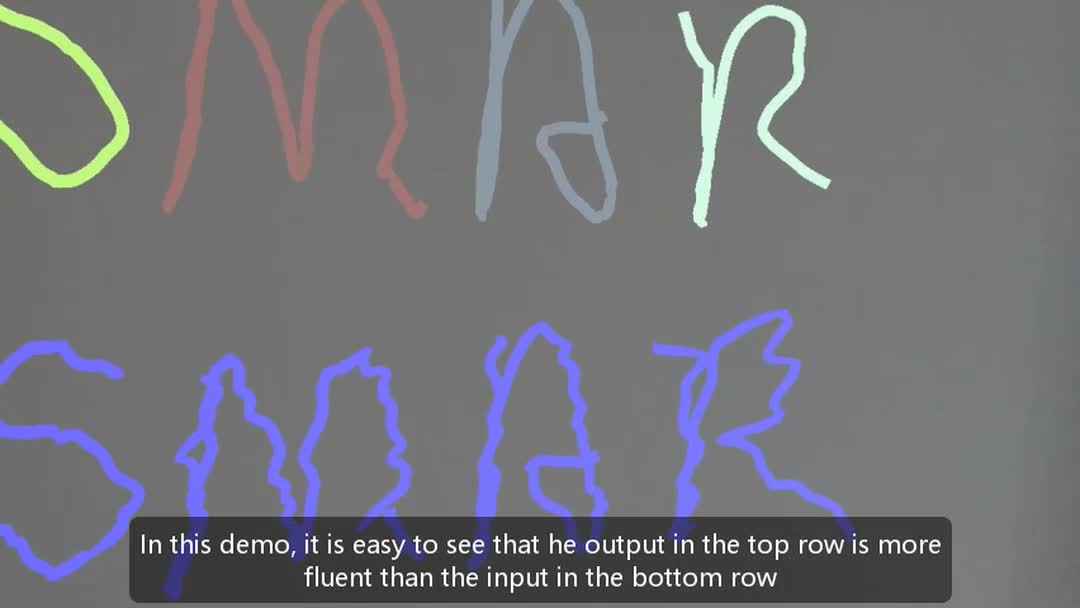

Hand-object interaction in mixed reality (MR) relies on accurate tracking and estimation of human hands, which is key to giving users a sense of immersion. However, raw captured hand motion data inevitably contains errors such as joint occlusion, dislocation, high-frequency noise, and involuntary jitter. Denoising this data to obtain hand motion consistent with the user's intention is therefore essential for enhancing the interactive experience in MR. To this end, we propose an end-to-end method for hand motion denoising based on a spatial-temporal graph auto-encoder (STGAE). By representing the sequence of hand joints across consecutive frames as a spatial-temporal graph, the method captures spatial and temporal patterns simultaneously. Considering the complexity of the articulated hand structure, we propose a simple yet effective partition strategy that models both physically connected and symmetry-connected relationships between joints. Graph convolution is applied to extract the structural constraints of the hand, and a self-attention mechanism dynamically adjusts the graph topology. Combining graph convolution with temporal convolution, we propose a fundamental block that serves as either a graph encoder or a graph decoder. Finally, by stacking these blocks, we build an hourglass residual auto-encoder that learns a manifold projection and its corresponding inverse projection. The proposed framework successfully denoises hand motion data while preserving the structural constraints between joints. Extensive quantitative and qualitative experiments show that the proposed method outperforms state-of-the-art approaches.
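The abstract combines four ingredients: a spatial-temporal graph over the joint sequence, a two-way adjacency partition (physical and symmetry connections), graph convolution whose topology is adjusted by self-attention, and a block pairing graph convolution with temporal convolution. The NumPy sketch below illustrates how one such block could fit together. It is not the authors' implementation: the 21-joint layout, the rule used for symmetry connections (linking joints at the same level on neighboring fingers), the time-averaged attention graph, the tensor shapes, and every function name here are assumptions chosen for illustration.

```python
import numpy as np

# ASSUMPTION: hypothetical 21-joint hand layout, not necessarily the paper's.
# Joint 0 is the wrist; each finger has 4 joints ordered from base to tip.
NUM_JOINTS = 21
FINGERS = [list(range(1 + 4 * f, 5 + 4 * f)) for f in range(5)]  # thumb..pinky


def partitioned_adjacency():
    """Return a (2, V, V) stack: [physically connected, symmetry-connected]."""
    phys = np.eye(NUM_JOINTS)  # self-loops so each joint keeps its own feature
    for finger in FINGERS:
        chain = [0] + finger  # wrist -> base -> ... -> tip
        for a, b in zip(chain[:-1], chain[1:]):
            phys[a, b] = phys[b, a] = 1.0
    # ASSUMPTION: "symmetry" links joints at the same level on adjacent fingers.
    sym = np.zeros((NUM_JOINTS, NUM_JOINTS))
    for f1, f2 in zip(FINGERS[:-1], FINGERS[1:]):
        for a, b in zip(f1, f2):
            sym[a, b] = sym[b, a] = 1.0
    return np.stack([phys, sym])


def normalize(adj):
    """Symmetric normalization D^{-1/2} A D^{-1/2} per partition."""
    deg = adj.sum(axis=-1)
    inv_sqrt = np.where(deg > 0, 1.0 / np.sqrt(np.maximum(deg, 1e-12)), 0.0)
    return inv_sqrt[..., :, None] * adj * inv_sqrt[..., None, :]


def softmax(x, axis=-1):
    e = np.exp(x - x.max(axis=axis, keepdims=True))
    return e / e.sum(axis=axis, keepdims=True)


def graph_block(x, adj, w_spatial, w_q, w_k, kernel):
    """Hypothetical block: attention-adjusted graph conv + temporal conv + residual.

    x: (T, V, C) joint features over T frames; adj: (K, V, V) partitions;
    w_spatial: (K, C, C); w_q, w_k: (C, D); kernel: odd-length temporal filter.
    """
    # Self-attention graph from the features, averaged over frames -> (V, V).
    q, k = x @ w_q, x @ w_k
    attn = softmax(q @ k.transpose(0, 2, 1) / np.sqrt(q.shape[-1])).mean(axis=0)
    # Spatial graph convolution: sum over partitions of (A_k + attn) X W_k.
    y = sum((adj[i] + attn) @ x @ w_spatial[i] for i in range(adj.shape[0]))
    # Temporal convolution along the frame axis (cross-correlation, edge padding).
    pad = len(kernel) // 2
    y_pad = np.pad(y, ((pad, pad), (0, 0), (0, 0)), mode="edge")
    z = sum(kernel[j] * y_pad[j:j + y.shape[0]] for j in range(len(kernel)))
    return x + np.maximum(z, 0.0)  # ReLU, then residual connection


rng = np.random.default_rng(0)
T, C, D = 16, 3, 8
x = rng.normal(size=(T, NUM_JOINTS, C))  # e.g. noisy 3D joint positions
adj = normalize(partitioned_adjacency())
out = graph_block(
    x, adj,
    w_spatial=rng.normal(scale=0.1, size=(2, C, C)),
    w_q=rng.normal(size=(C, D)), w_k=rng.normal(size=(C, D)),
    kernel=np.array([0.25, 0.5, 0.25]),
)
print(out.shape)  # (16, 21, 3)
```

Stacking blocks like this while narrowing and then restoring the channel width would yield an hourglass-shaped encoder and decoder in the spirit of the abstract; that stacking, the learned weights, and the training objective are outside the scope of this sketch.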