Environment Capturing with Microsoft Kinect

Kevin Mackay, Hubert P. H. Shum and Taku Komura

Proceedings of the 2012 International Conference on Software, Knowledge, Information Management and Applications (SKIMA), 2012

Abstract

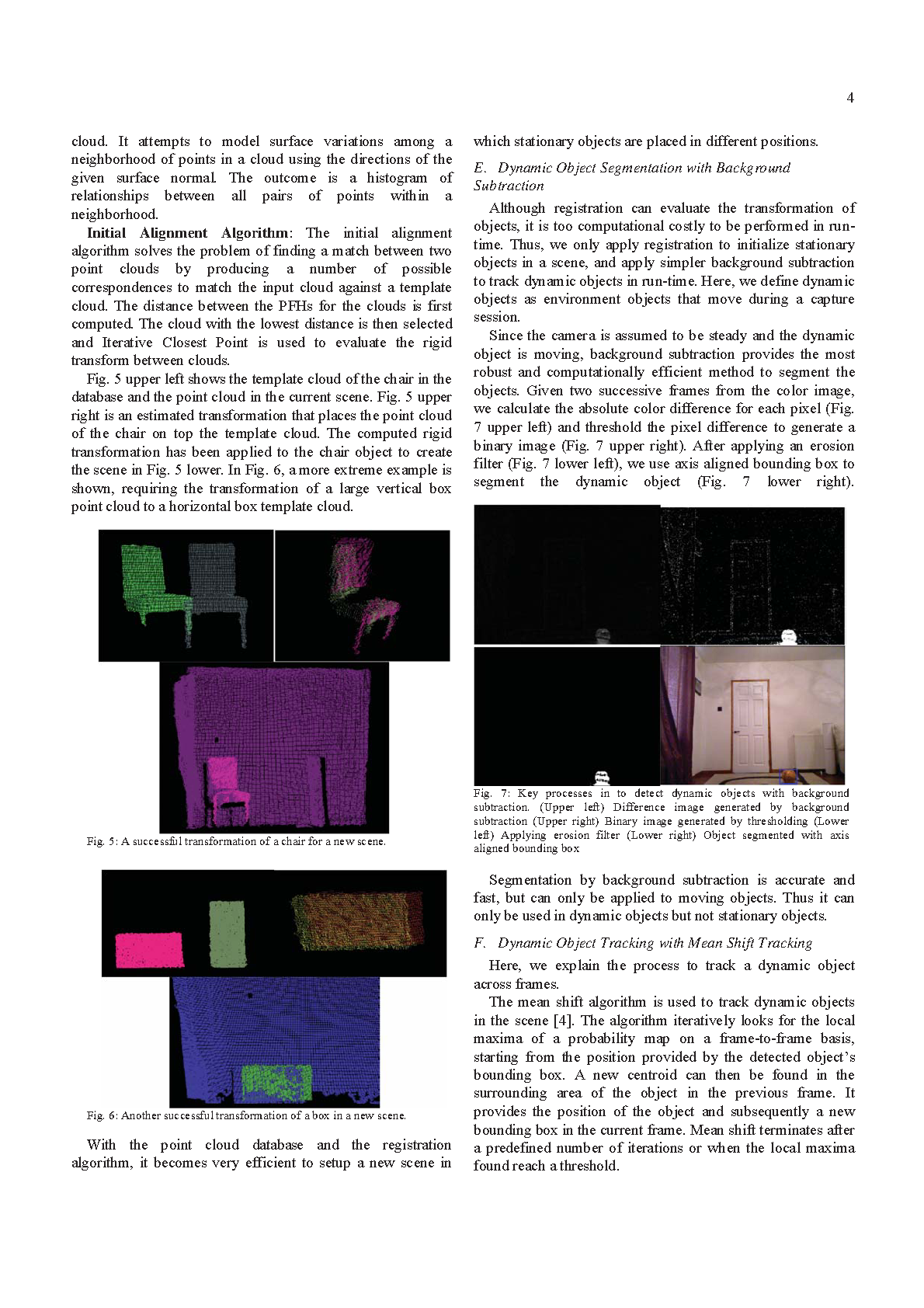

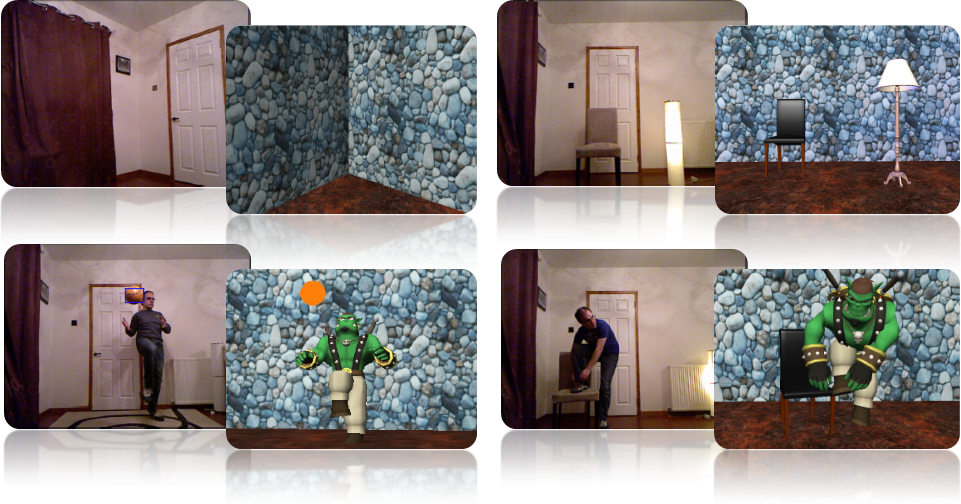

Constructing virtual scenes that incorporate human and object interaction has traditionally been a time-consuming process in computer animation: the motion of an actor is first recorded, and any objects used in the scene are then painstakingly added by an animator. The Microsoft Kinect uses a synchronized RGBD stream to provide markerless skeletal tracking of humans, enabling efficient motion capture; however, the problem of capturing environment objects remains unsolved. In this paper, we propose a new framework to segment and track three major types of environment objects using Kinect, namely background planes, stationary objects and dynamic objects. We demonstrate that the motion of an actor and their surrounding environment can be captured at the same time, saving animators considerable effort. Our proposed system is best suited to applications involving extensive human-object interaction, such as console games and animation design.