Filtered Pose Graph for Efficient Kinect Pose Reconstruction

Pierre Plantard, Hubert P. H. Shum and Franck Multon

Multimedia Tools and Applications (MTAP), 2017

Abstract

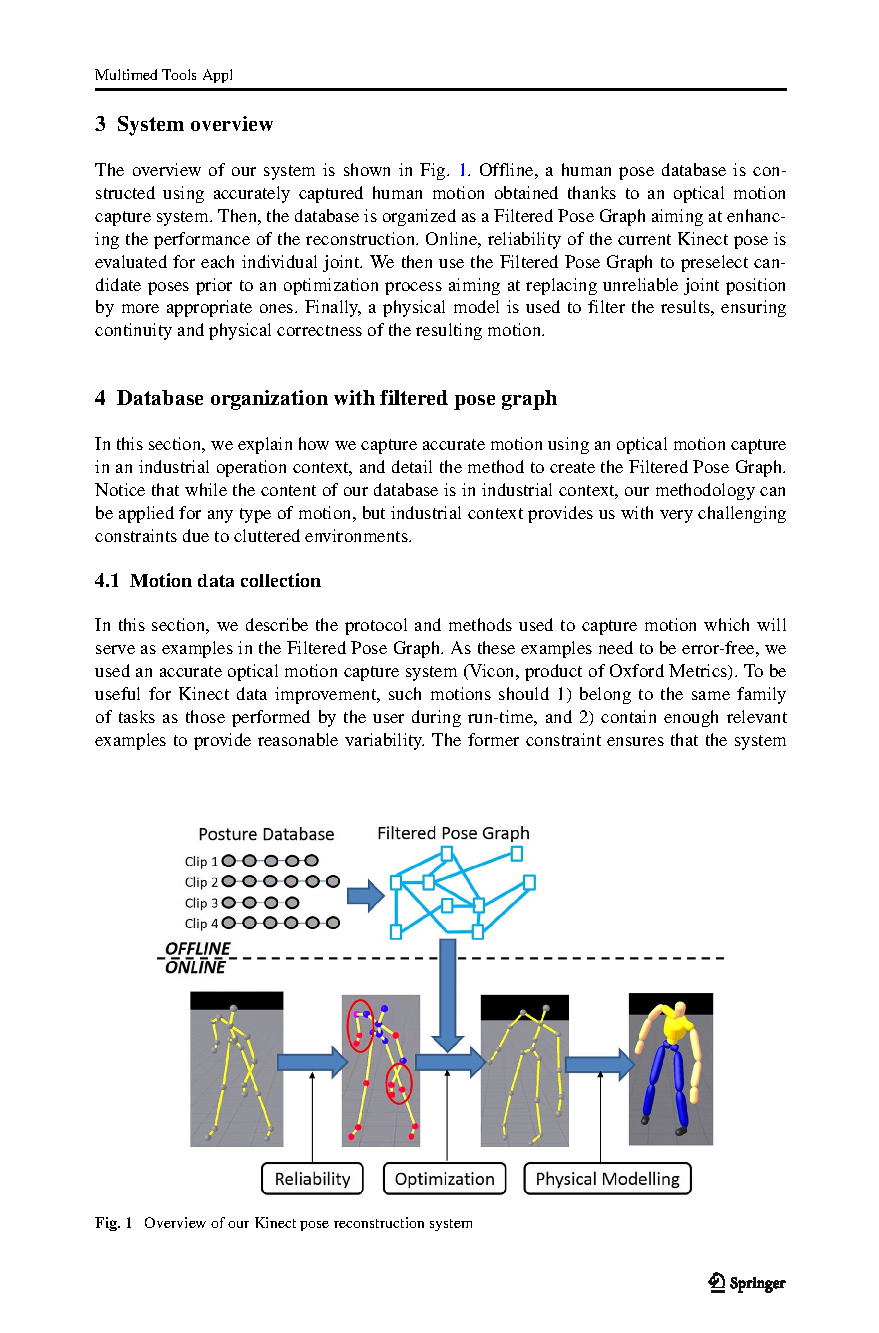

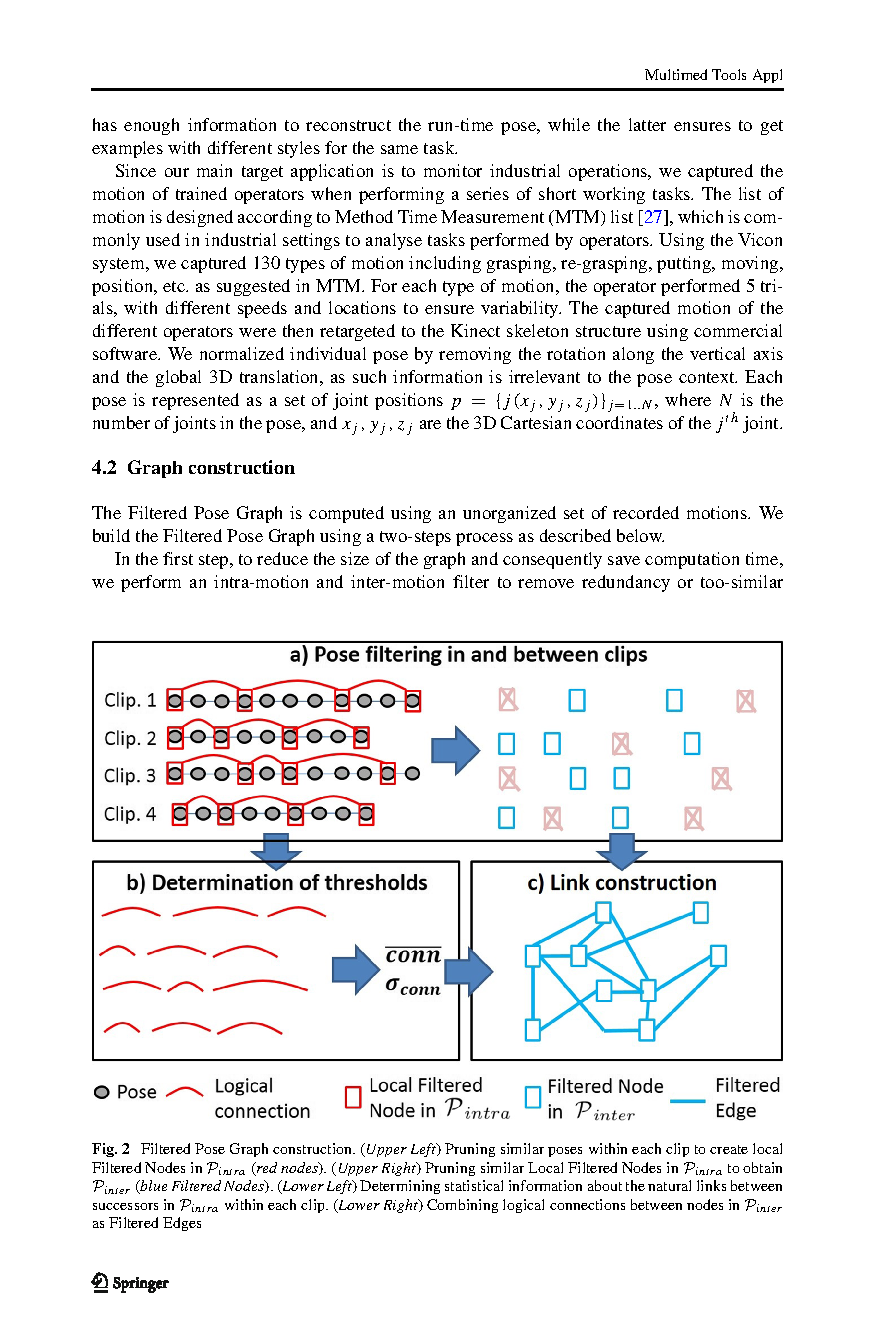

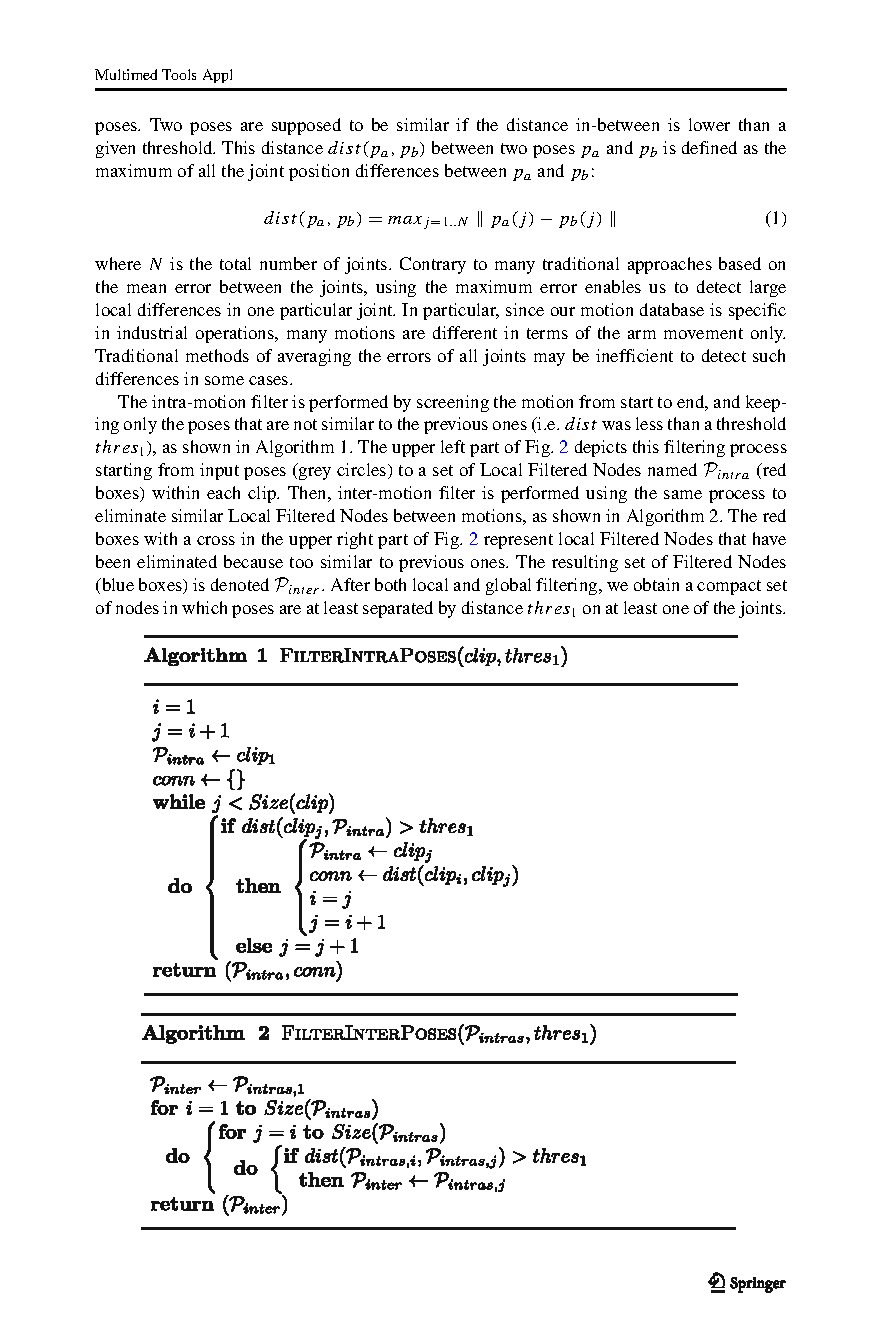

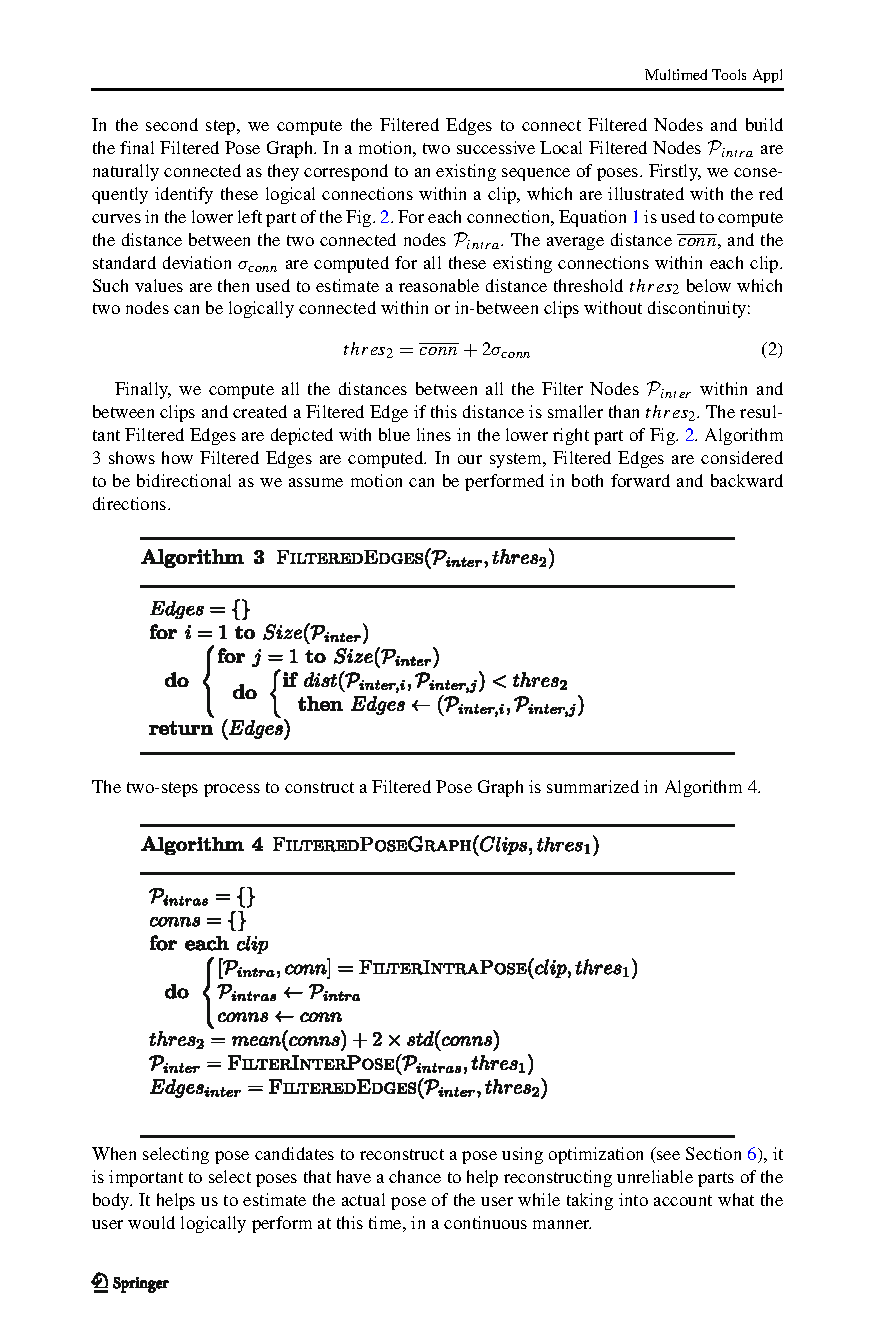

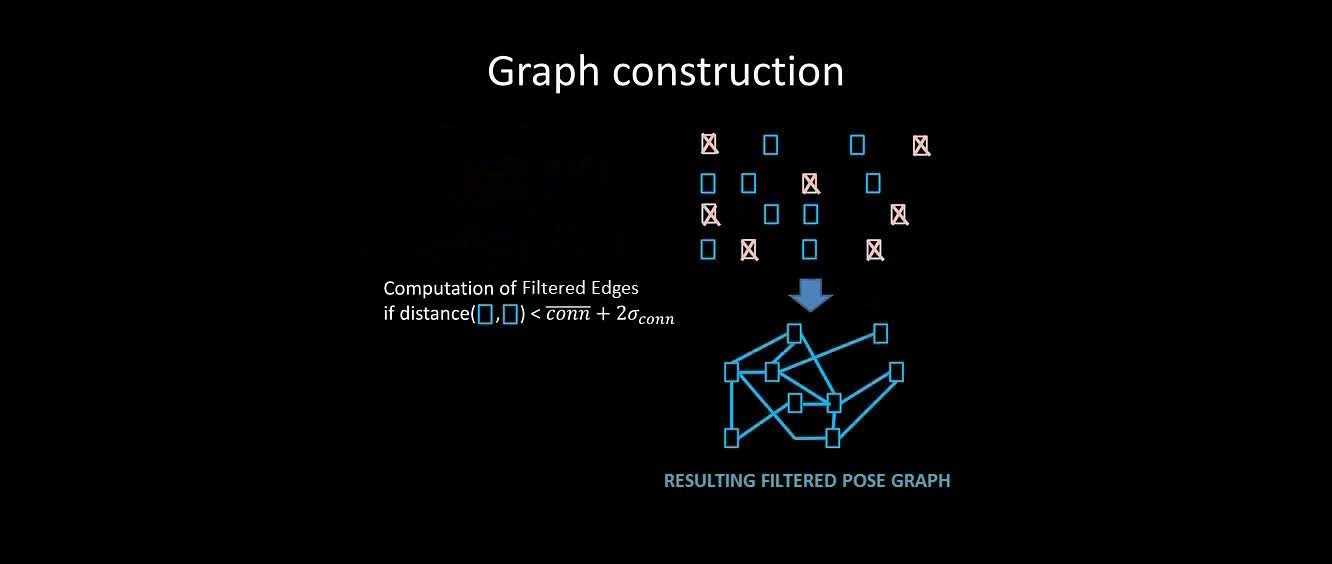

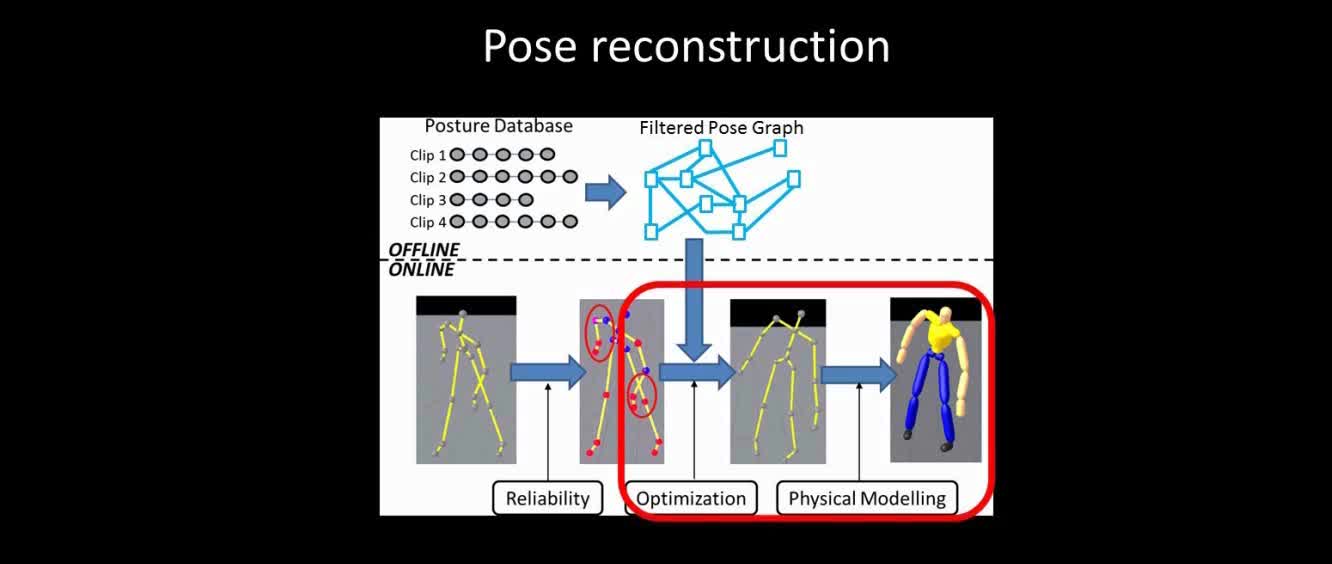

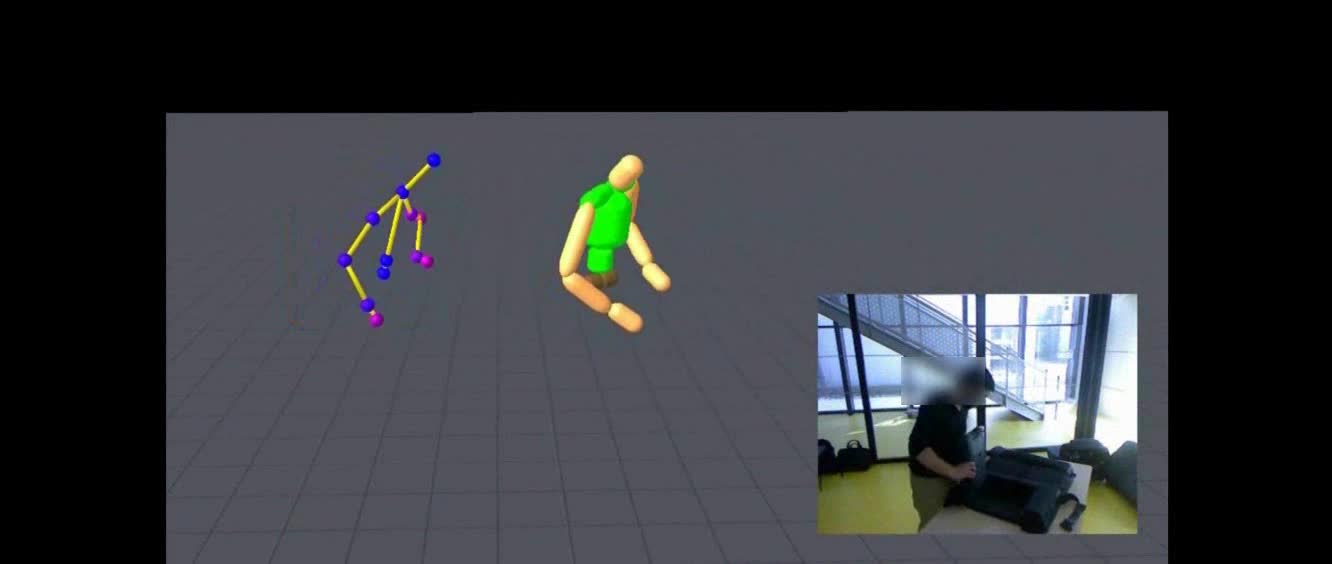

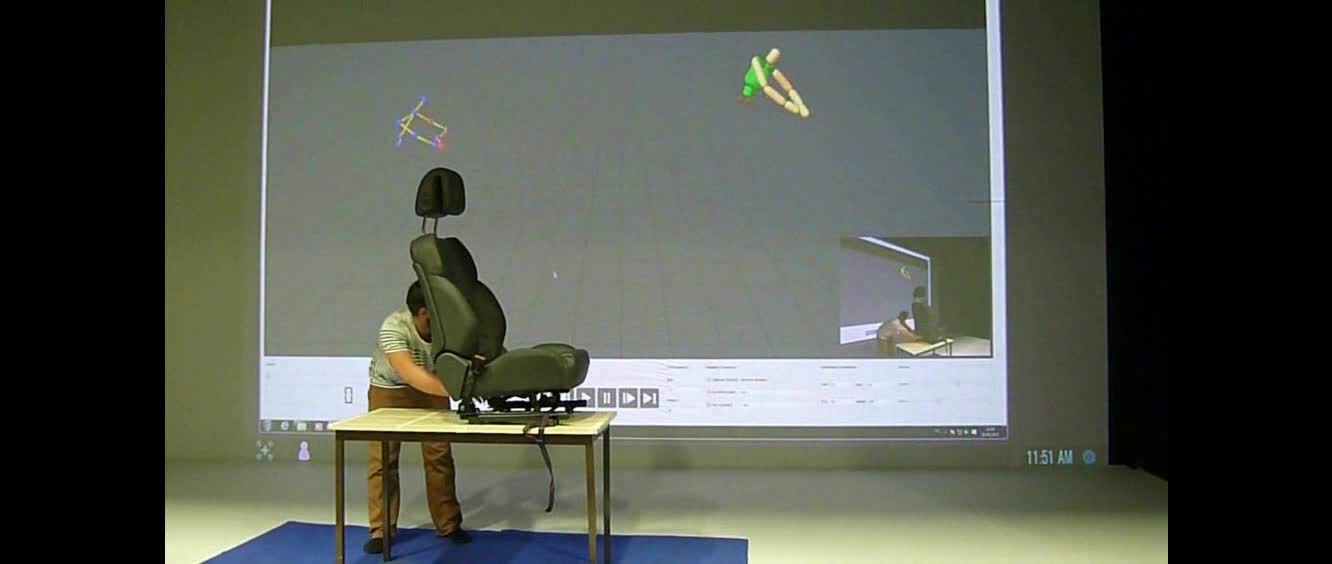

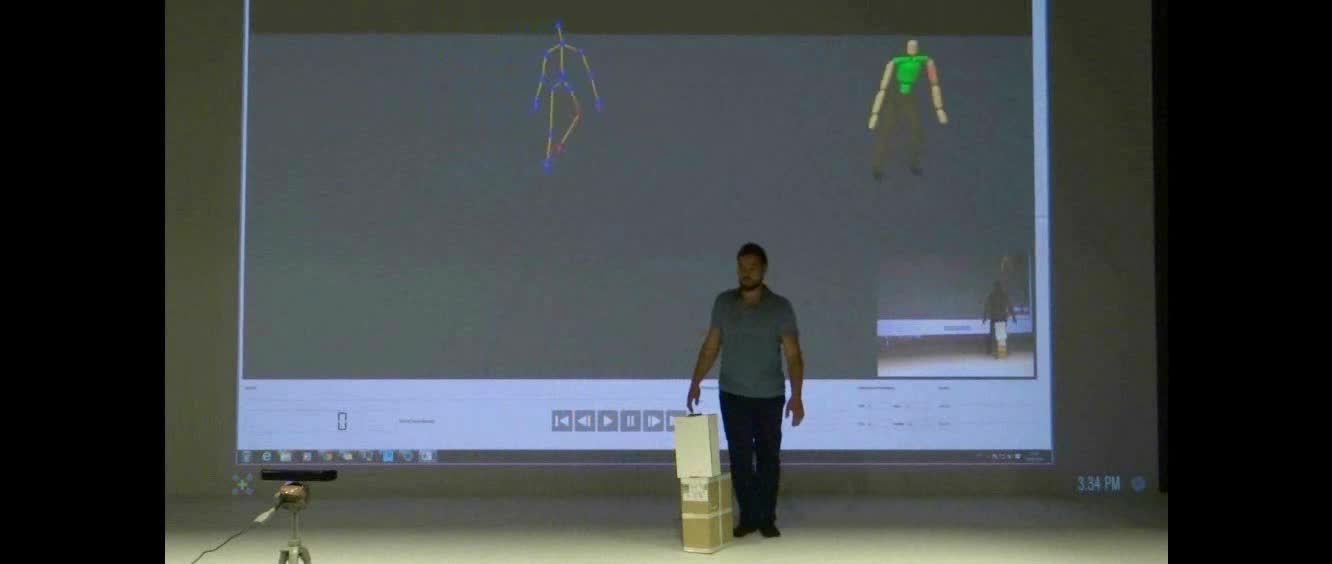

Being marker-free and calibration-free, Microsoft Kinect is widely used in motion-based applications such as user training for complex industrial tasks and ergonomic pose evaluation. Its major limitations are the sensor placement required to obtain accurate poses and its weakness against occlusions. To improve the robustness of Kinect in interactive motion-based applications, real-time data-driven pose reconstruction has been proposed. The idea is to use a database of accurately captured human poses as a prior to optimize the poses recognized by Kinect, in order to estimate the true poses performed by the user. The key research problem is to identify the most relevant poses in the database for accurate and efficient reconstruction. In this paper, we propose a new pose reconstruction method that models the pose database with a structure called the Filtered Pose Graph, which indicates the intrinsic correspondence between poses. Such a graph not only speeds up the selection of database poses, but also improves the relevance of the selected poses, leading to higher-quality reconstruction. We apply the proposed method in a challenging industrial environment that involves sub-optimal Kinect placement and a large amount of occlusion. Experimental results show that our real-time system reconstructs Kinect poses more accurately than existing methods.
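
The general data-driven idea described above, selecting relevant database poses and using them as a prior for a noisy observation, can be illustrated with a minimal sketch. This is not the Filtered Pose Graph, whose construction is not detailed in this abstract; it is a plain nearest-neighbour baseline under simplifying assumptions. Poses are assumed to be flat lists of joint coordinates, and the names `pose_distance` and `reconstruct_pose`, the parameter `k`, and the inverse-distance weighting are all hypothetical choices made for illustration.

```python
import math


def pose_distance(a, b):
    """Euclidean distance between two poses given as flat coordinate lists."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def reconstruct_pose(observed, database, k=3, eps=1e-9):
    """Blend the k database poses closest to the observed pose.

    Assumption: a simple inverse-distance weighted average stands in for
    the optimization step; the paper's actual selection and optimization
    are not reproduced here.
    """
    # Exhaustive search over the whole database (the costly step that a
    # graph structure over the database is meant to avoid).
    ranked = sorted(database, key=lambda p: pose_distance(observed, p))
    nearest = ranked[:k]

    # Closer database poses receive larger weights; eps avoids division by zero.
    weights = [1.0 / (pose_distance(observed, p) + eps) for p in nearest]
    total = sum(weights)

    dims = len(observed)
    return [
        sum(w * p[i] for w, p in zip(weights, nearest)) / total
        for i in range(dims)
    ]


if __name__ == "__main__":
    # Toy 2-D "poses"; a real pose would hold 3-D coordinates for every joint.
    database = [[0.0, 0.0], [1.0, 1.0], [10.0, 10.0]]
    print(reconstruct_pose([0.4, 0.4], database, k=2))
```

The exhaustive sort in this sketch scales linearly with the database size for every frame, which is the kind of cost that motivates organizing the database so that relevant poses can be found without scanning all of them.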