Automatic Dance Generation System Considering Sign Language Information

Wakana Asahina, Naoya Iwamoto, Hubert P. H. Shum and Shigeo Morishima

Proceedings of the 2016 ACM SIGGRAPH Posters, 2016

Abstract

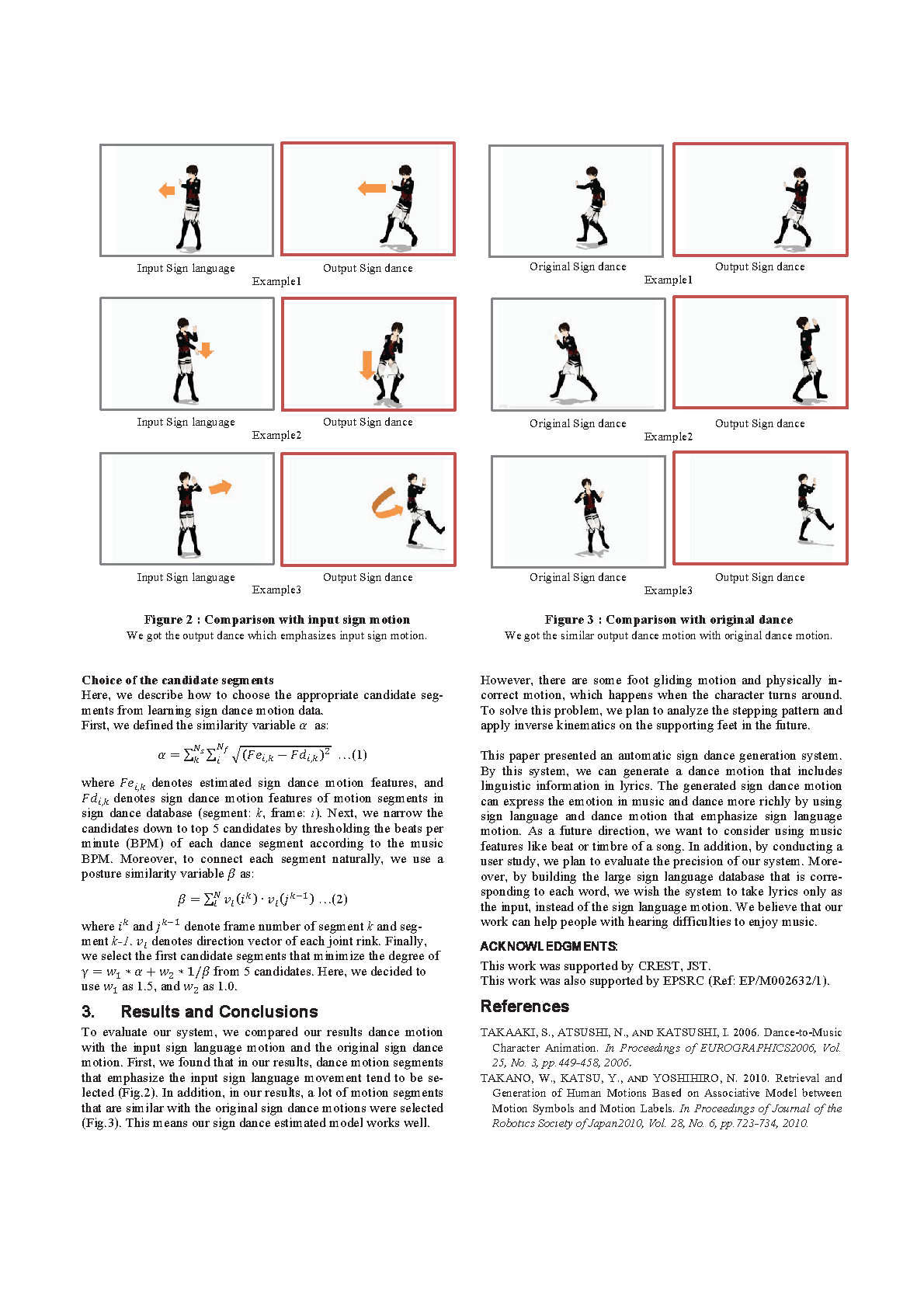

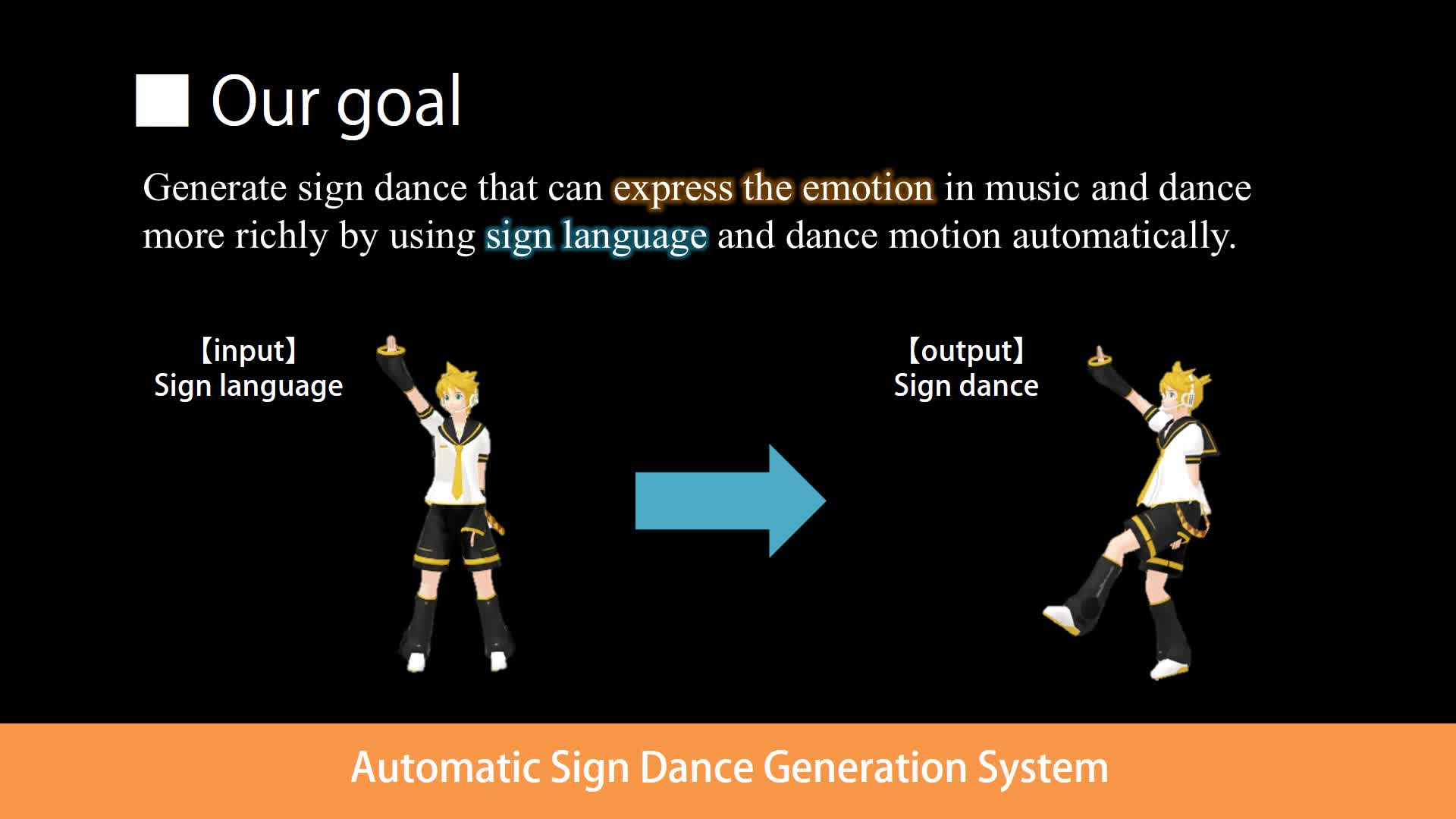

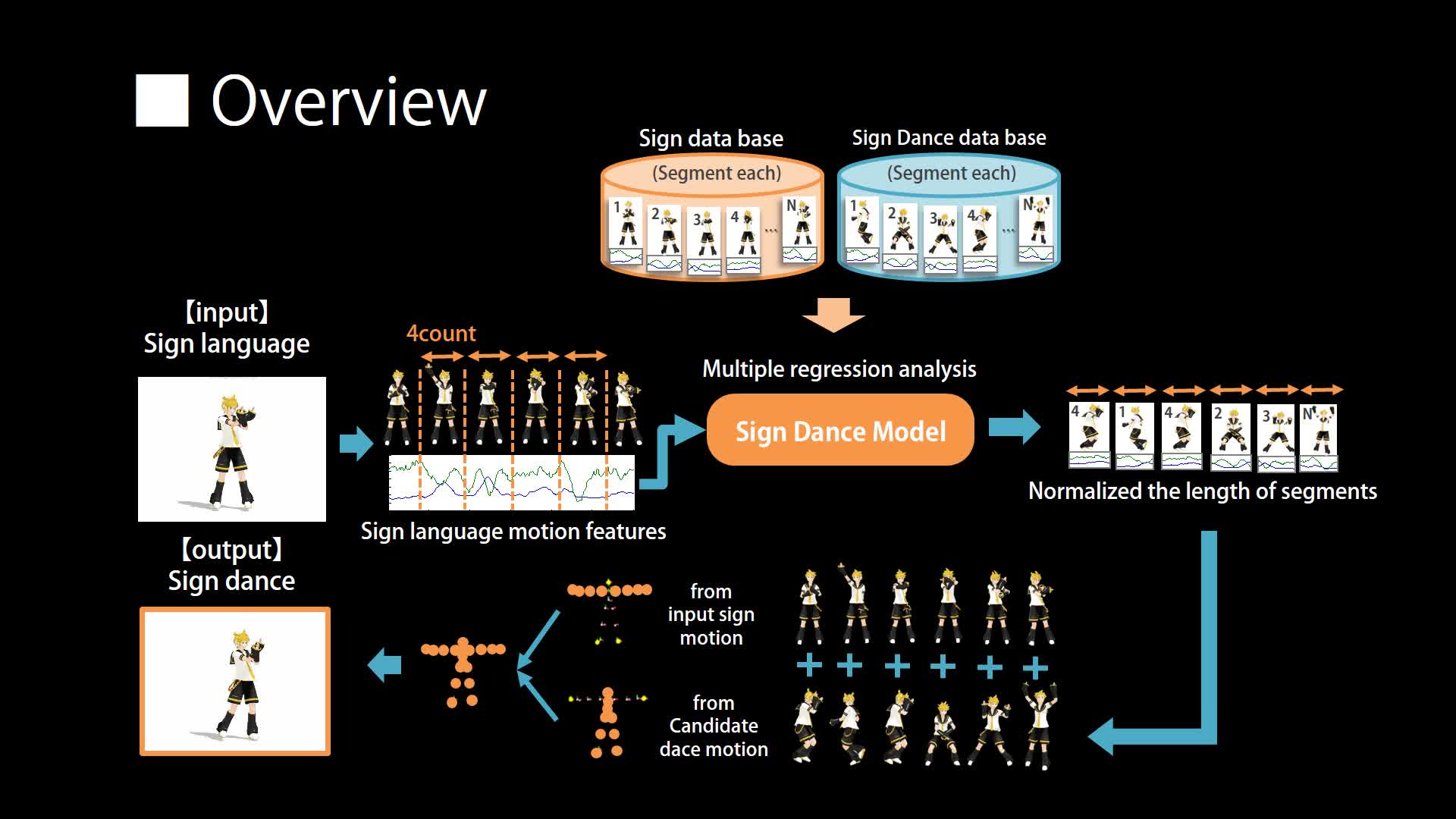

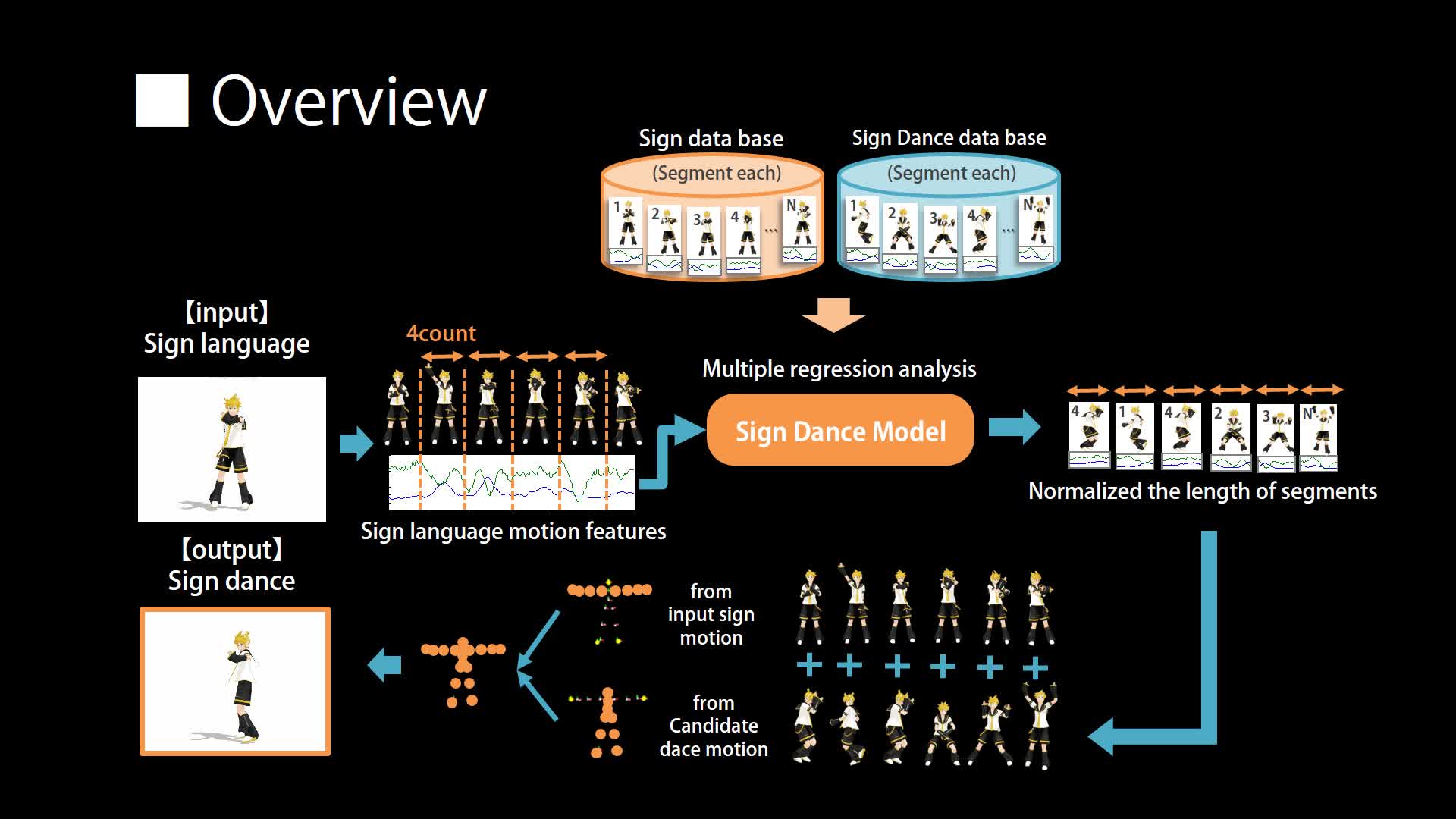

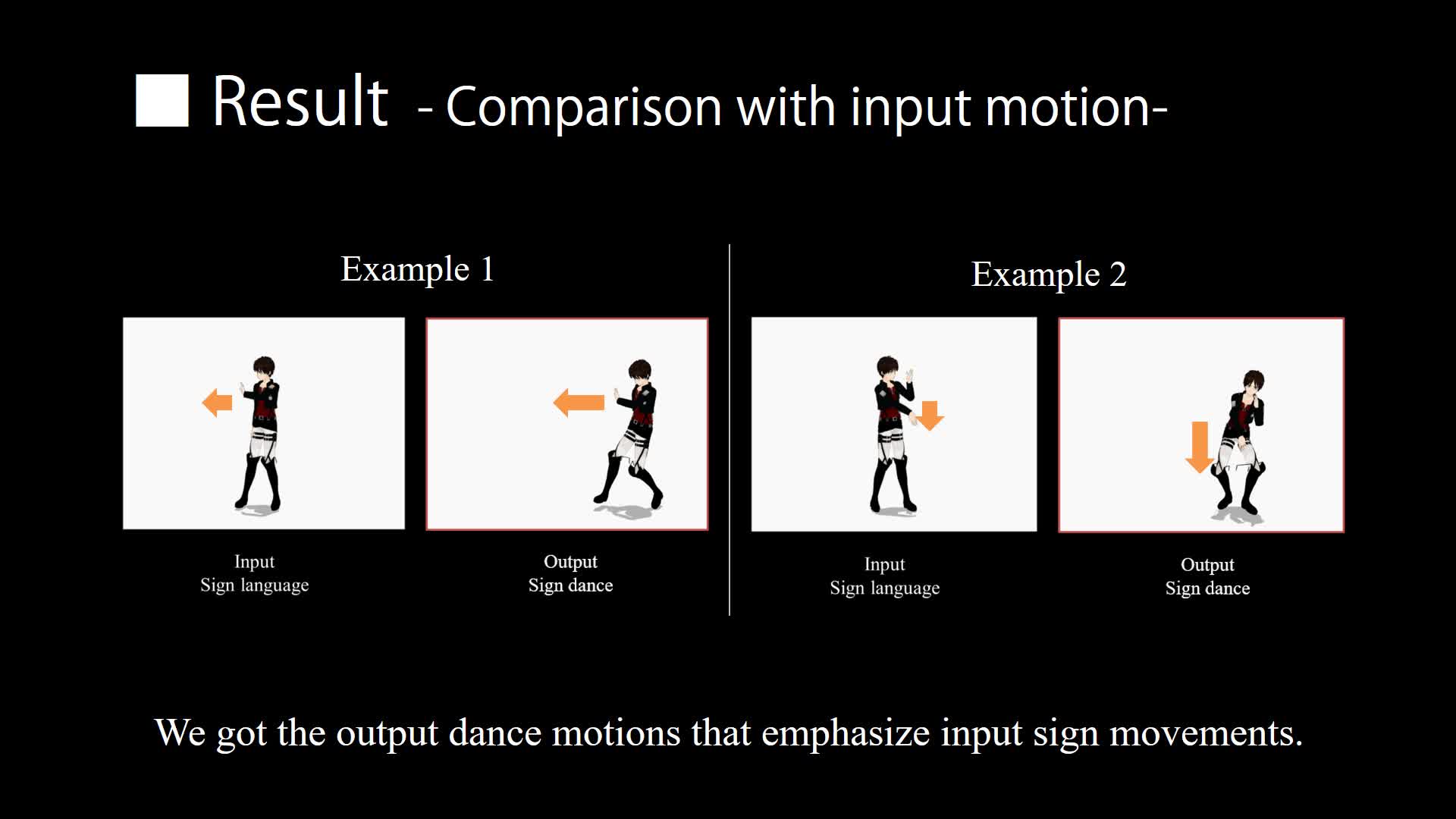

In recent years, 3DCG animation editing tools such as MikuMikuDance have enabled amateur users to create many 3D character dance animations. However, creating choreography from scratch is very difficult without technical knowledge. Shiratori et al. [2006] proposed an automatic dance generation system that considers the rhythm and intensity of dance motions. However, each segment is selected randomly from a database, so the generated dance carries no linguistic or emotional meaning. Takano et al. [2010] proposed a human motion generation system that considers motion labels. However, it uses only simple labels such as 'running' or 'jump', so it cannot generate motions that express emotion. In practice, professional dancers create choreography based on the musical features and lyrics of a song, and use it to express emotion or how the music makes them feel. In this work, we aim to make it easy to generate more emotionally expressive dance motion. To that end, we generate dance motion from the linguistic information in the lyrics.

Supporting Grants

Received from the Ministry of Education, Culture, Sports, Science and Technology, 2015-2016
