Automatic Sign Dance Synthesis from Gesture-Based Sign Language

Naoya Iwamoto, Hubert P. H. Shum, Wakana Asahina and Shigeo Morishima

Proceedings of the 2019 ACM SIGGRAPH Conference on Motion, Interaction and Games (MIG), 2019

Abstract

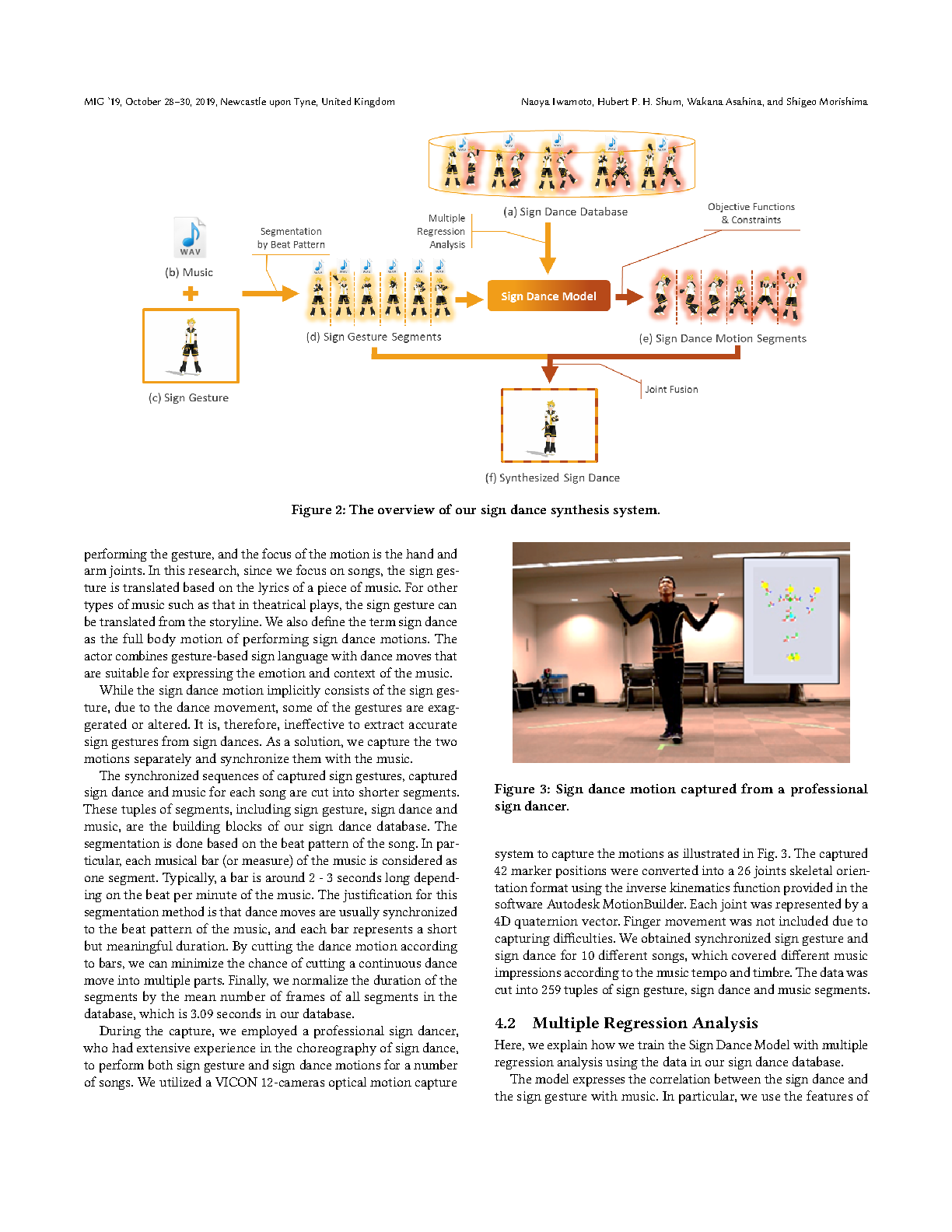

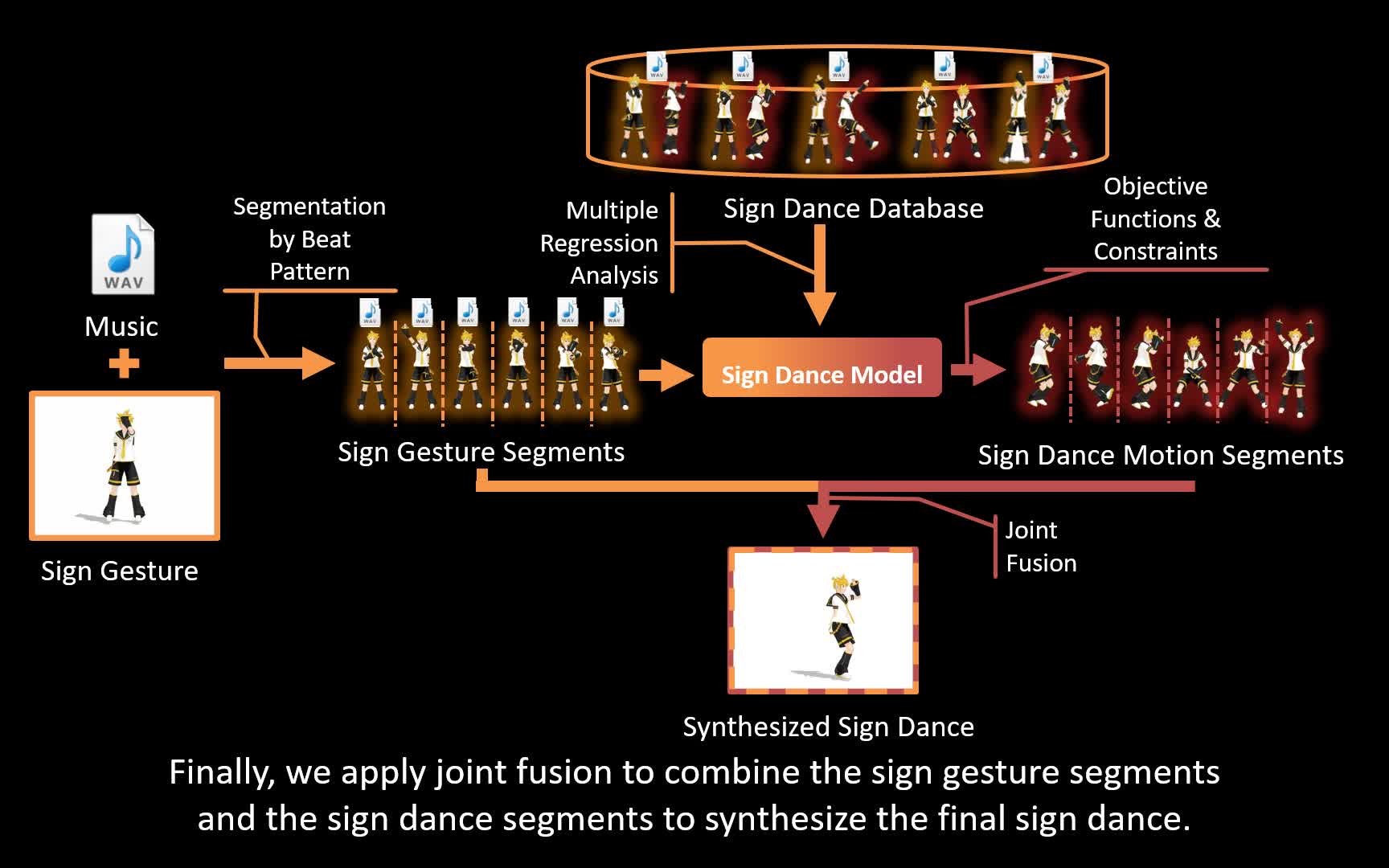

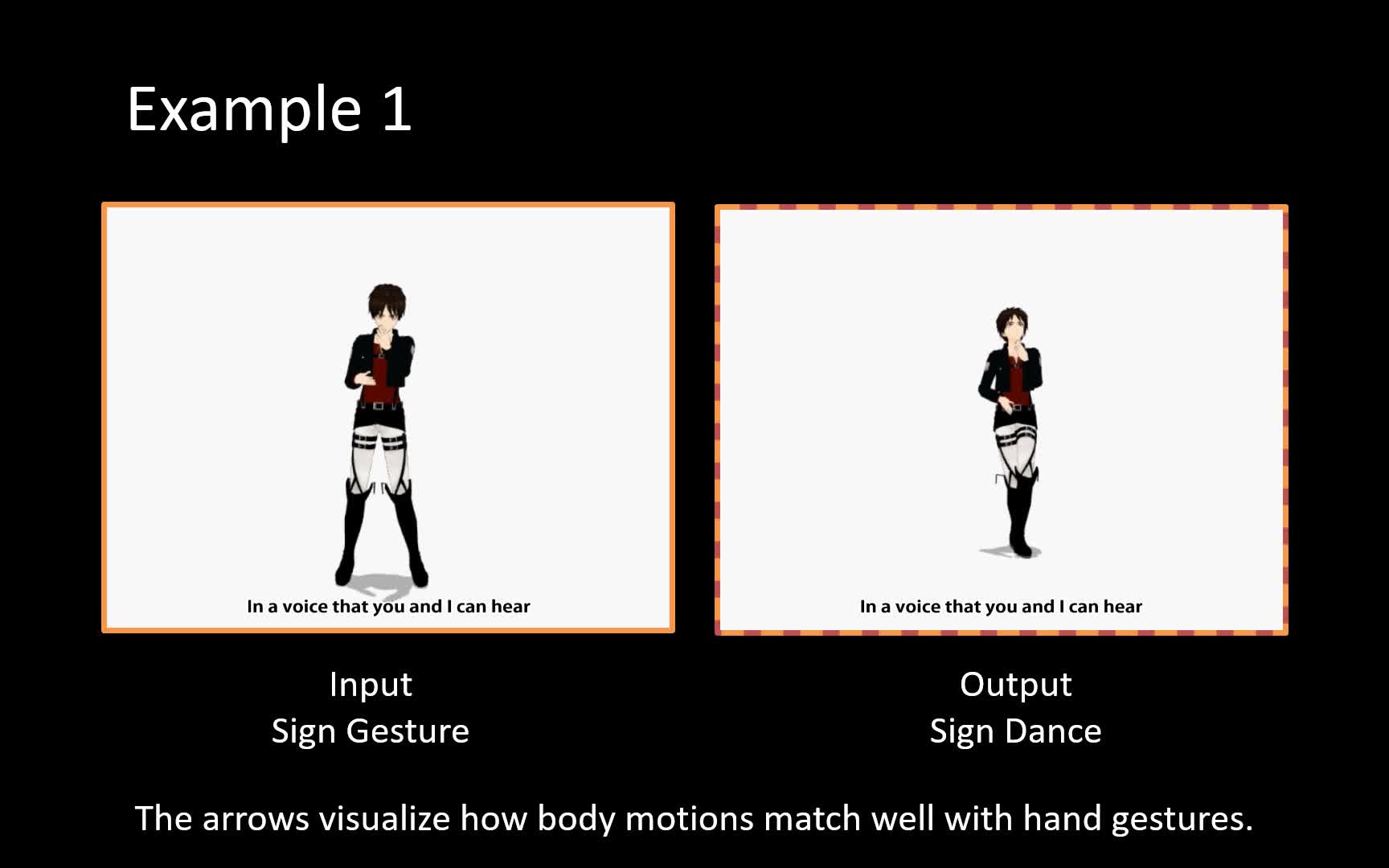

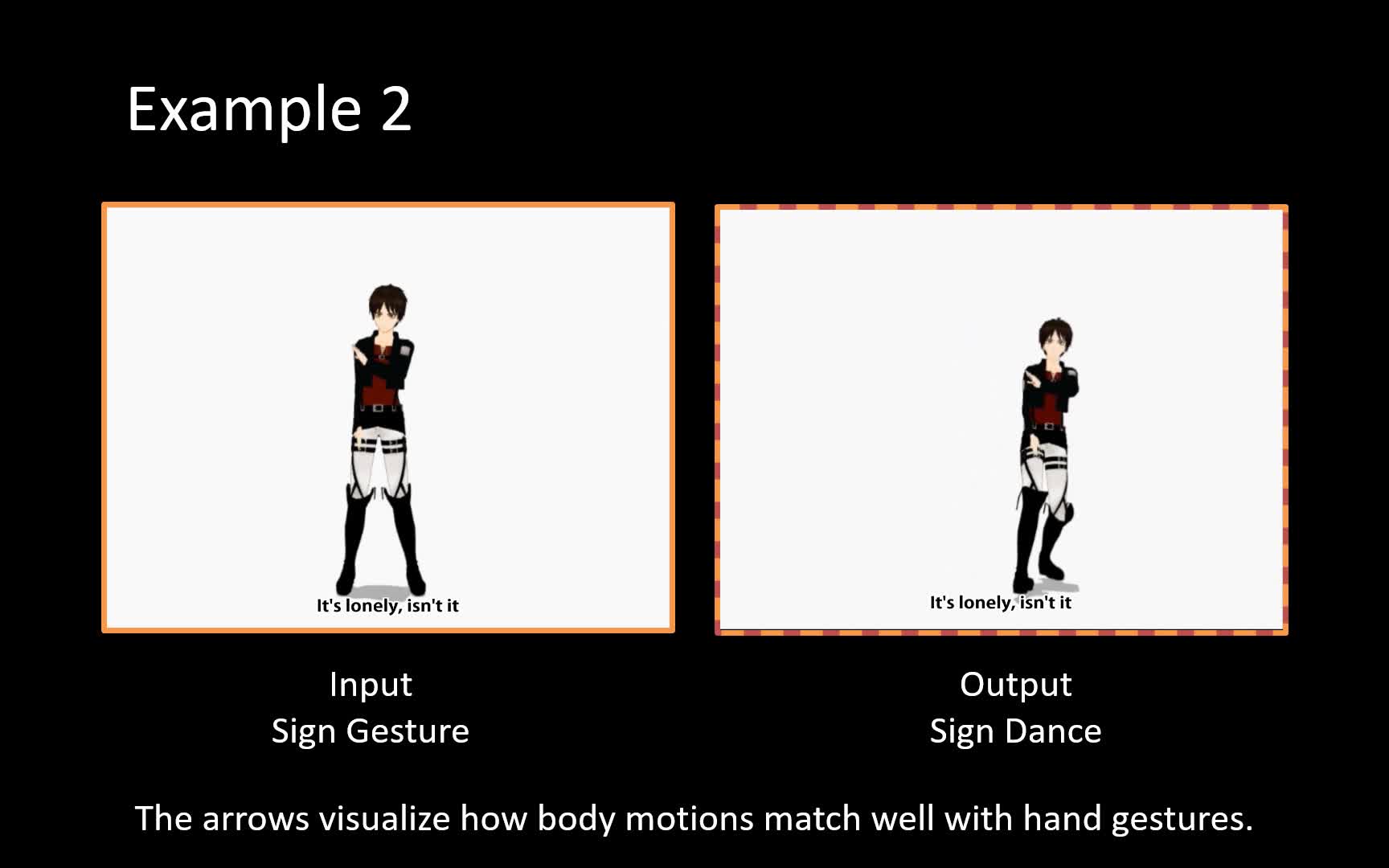

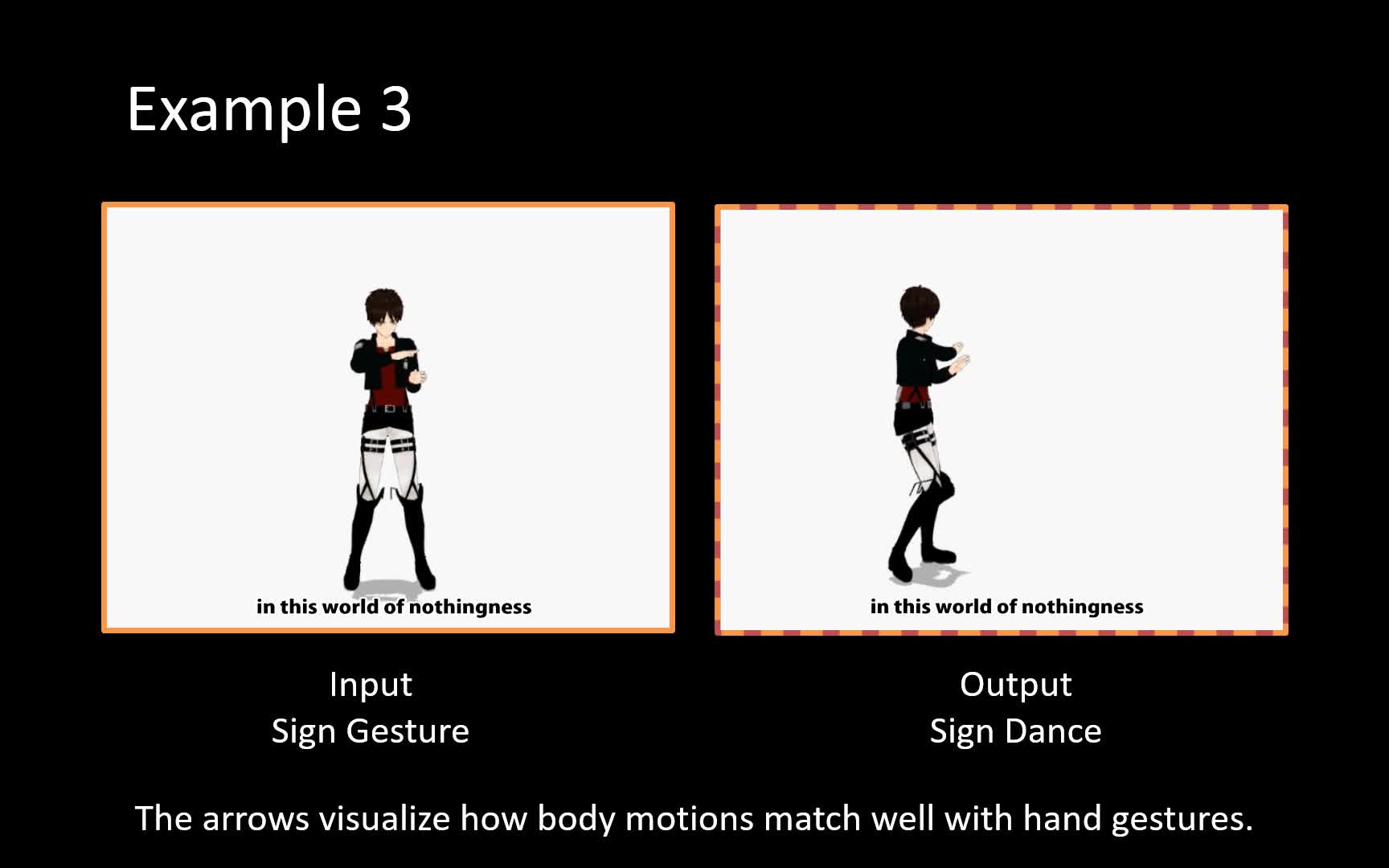

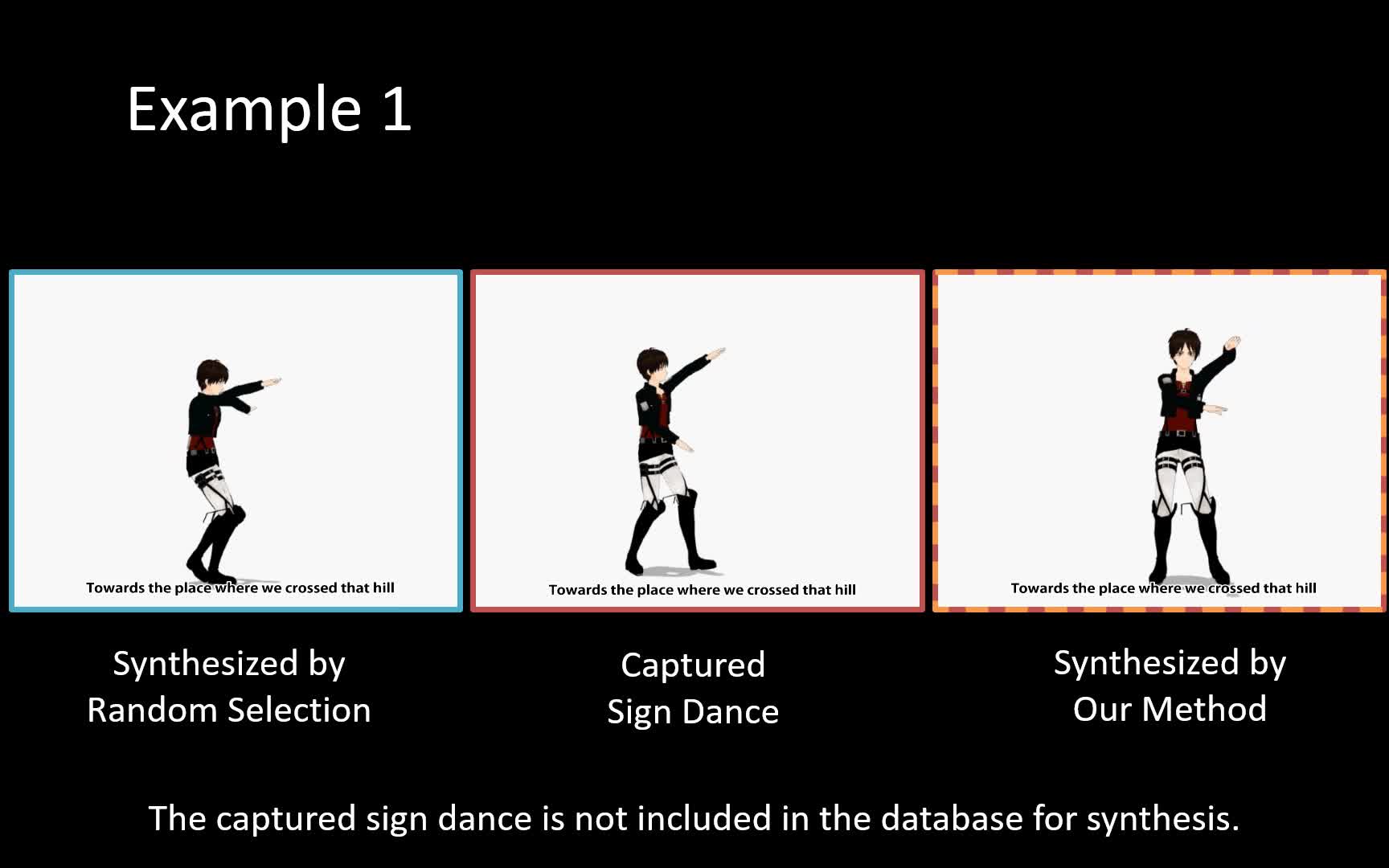

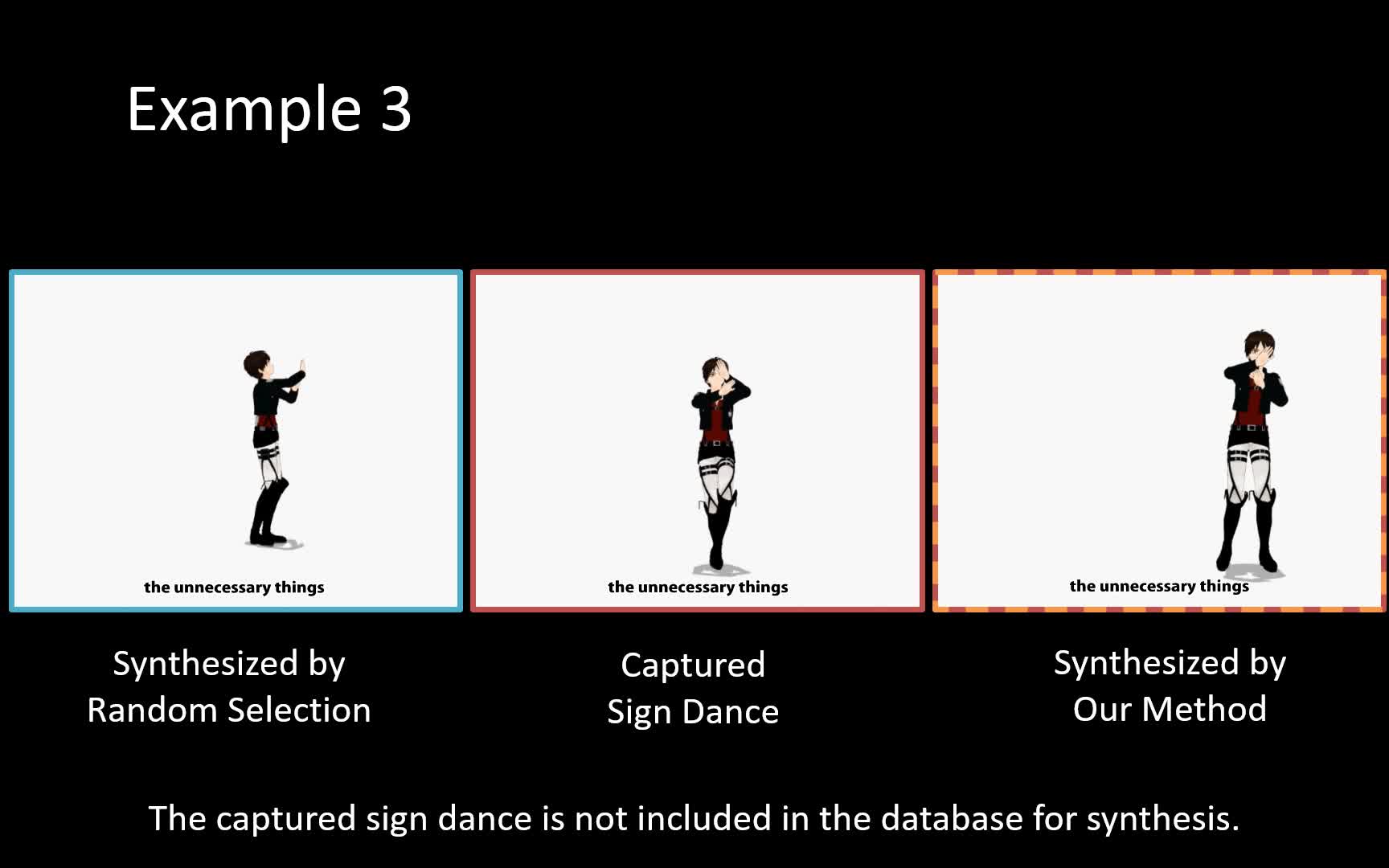

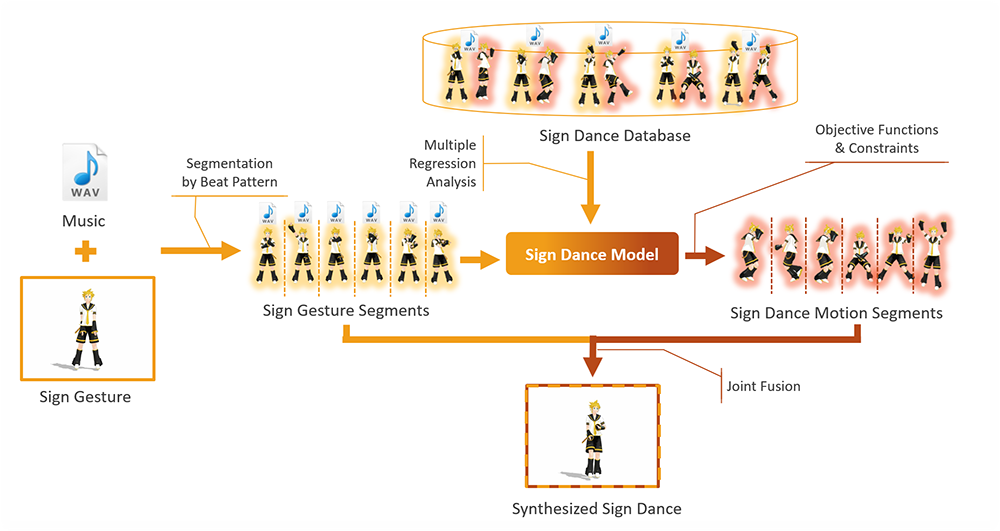

Automatic dance synthesis has become increasingly popular due to growing demand in computer games and animation. Existing research generates dance motions with little consideration for the context of the music. In practice, professional dancers create choreography according to both the lyrics and the musical features. In this research, we focus on a particular genre of dance known as sign dance, which combines gesture-based sign language with full-body dance motion. We propose a system that automatically generates sign dance from a piece of music and its corresponding sign gesture. The core of the system is a Sign Dance Model, trained by multiple regression analysis to represent the correlations between sign dance and sign gesture/music, together with a set of objective functions that evaluate the quality of the sign dance. Our system can be applied to music visualization, allowing people with hearing difficulties to understand and enjoy music.
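To make the idea of a model "trained by multiple regression analysis" concrete, here is a minimal sketch of ordinary least-squares regression with several input features. It is not the authors' implementation: the abstract does not say which features or outputs the Sign Dance Model uses, so the feature names (`gesture_speed`, `music_tempo`), the scalar dance target, and the toy data are all hypothetical and serve only to illustrate the general technique.

```python
def solve_linear_system(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    # Work on an augmented copy so the caller's inputs are not modified.
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("singular system: features are collinear")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    # Back substitution on the upper-triangular system.
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        s = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - s) / m[i][i]
    return x


def fit_multiple_regression(features, targets):
    """Least-squares fit of target = w0 + sum_k w_k * feature_k."""
    rows = [[1.0] + list(f) for f in features]  # prepend intercept column
    p = len(rows[0])
    # Normal equations: (X^T X) w = X^T y
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(p)] for i in range(p)]
    xty = [sum(r[i] * t for r, t in zip(rows, targets)) for i in range(p)]
    return solve_linear_system(xtx, xty)


def predict(weights, feature):
    """Evaluate the fitted linear model on one feature vector."""
    return weights[0] + sum(w * f for w, f in zip(weights[1:], feature))


# Hypothetical toy data: (gesture_speed, music_tempo) -> dance motion intensity.
features = [(0.0, 60.0), (1.0, 60.0), (0.0, 120.0), (1.0, 120.0), (0.5, 90.0)]
targets = [6.5, 8.5, 12.5, 14.5, 10.5]

weights = fit_multiple_regression(features, targets)
estimate = predict(weights, (0.5, 100.0))
```

In a real system the target would be a vector of motion parameters rather than a single number, in which case one such regression could be fitted per output dimension; that extension is likewise an assumption, not something stated in the abstract.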

Supporting Grants

Received from The Ministry of Education, Culture, Sports, Science and Technology, 2015-2016