UAV-ReID: A Benchmark on Unmanned Aerial Vehicle Re-Identification in Video Imagery

Daniel Organisciak, Matthew Poyser, Aishah Alsehaim, Shanfeng Hu, Brian K. S. Isaac-Medina, Toby P. Breckon and Hubert P. H. Shum

Proceedings of the 2022 International Conference on Computer Vision Theory and Applications (VISAPP), 2022

Abstract

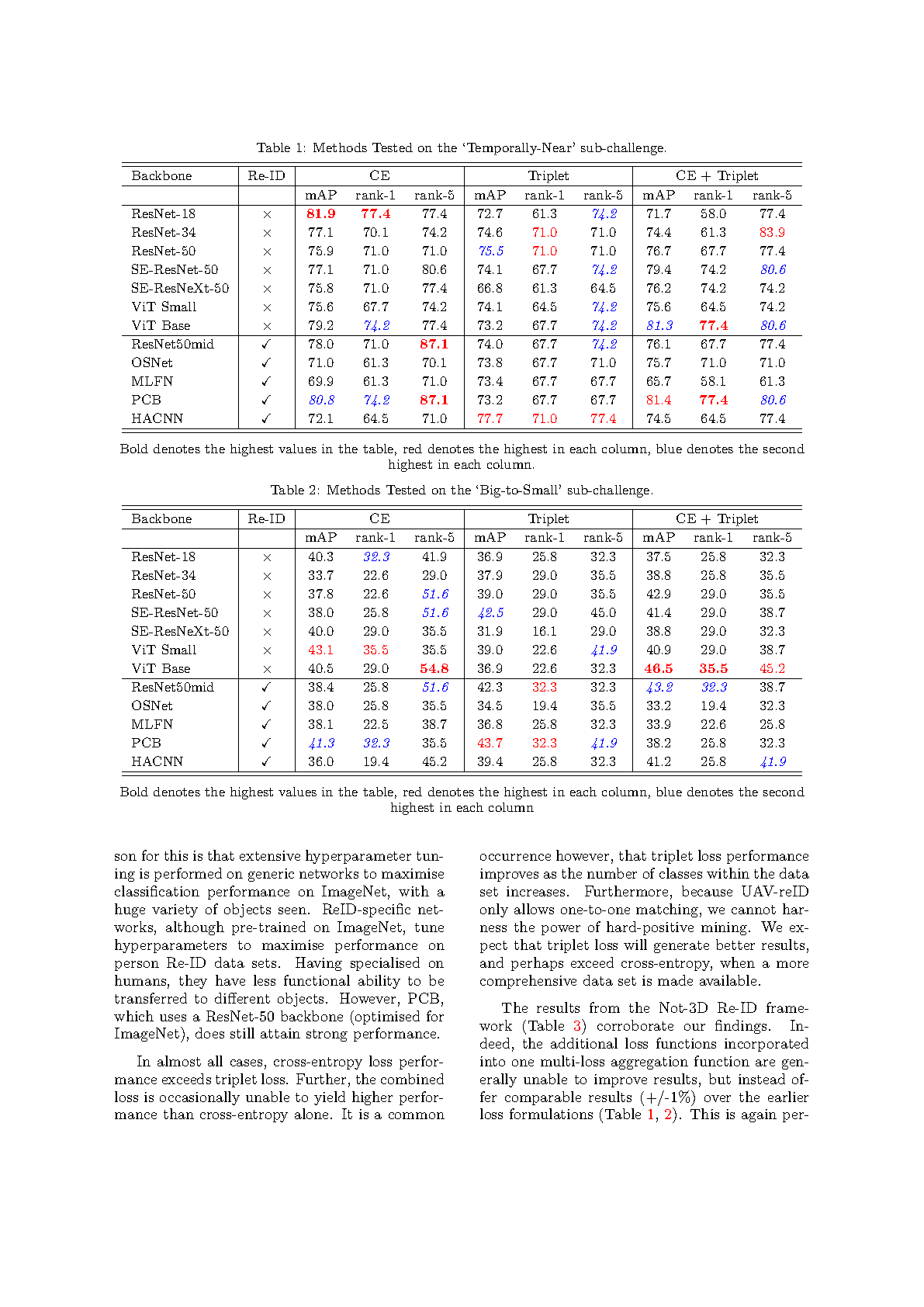

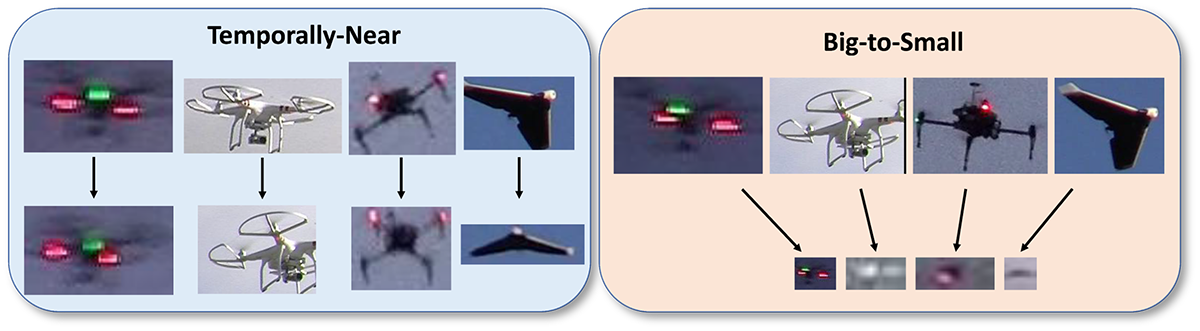

As unmanned aerial vehicles (UAVs) become more accessible and their range of applications grows, the risk of UAV disruption increases. Recent developments in deep learning allow vision-based counter-UAV systems to detect and track UAVs with a single camera. However, the limited field of view of a single camera necessitates multi-camera configurations that match UAVs across viewpoints, a problem known as re-identification (Re-ID). While there has been extensive research on person and vehicle Re-ID to match objects across time and viewpoints, to the best of our knowledge UAV Re-ID remains unresearched, and it is challenging due to great differences in scale and pose. We propose the first UAV re-identification data set, UAV-reID, to facilitate the development of machine learning solutions in multi-camera environments. UAV-reID has two sub-challenges, Temporally-Near and Big-to-Small, which evaluate Re-ID performance across viewpoints and scale, respectively. We conduct a benchmark study by extensively evaluating different deep-learning-based Re-ID approaches and their variants, spanning both convolutional and transformer architectures. Under the optimal configuration, such approaches are sufficiently powerful to learn a well-performing representation for UAVs (81.9% mAP for Temporally-Near, 46.5% for the more difficult Big-to-Small challenge), while vision transformers are the most robust to extreme variance of scale.
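The results above are reported as mean average precision (mAP). As a reading aid, the following is a minimal sketch of how mAP is commonly computed in Re-ID retrieval: each query embedding ranks a gallery by similarity, and average precision is taken over the ranks of the true matches. It is not the paper's evaluation code. The function names, the use of cosine similarity, and the toy data are assumptions for illustration, and the sketch omits the same-camera filtering that many Re-ID protocols apply.

```python
import numpy as np


def average_precision(ranked_matches):
    """Average precision for one query.

    ranked_matches: boolean sequence over the gallery, ordered from most to
    least similar, where True marks a gallery item with the query's identity.
    Returns None when the gallery holds no true match for this query.
    """
    ranked_matches = np.asarray(ranked_matches, dtype=bool)
    if not ranked_matches.any():
        return None
    hits = np.cumsum(ranked_matches)               # true matches seen so far
    ranks = np.arange(1, len(ranked_matches) + 1)  # 1-based rank positions
    precision_at_hits = hits[ranked_matches] / ranks[ranked_matches]
    return float(precision_at_hits.mean())


def mean_average_precision(query_feats, query_ids, gallery_feats, gallery_ids):
    """mAP over all queries, ranking the gallery by cosine similarity.

    Assumption: embeddings are compared with cosine similarity; other
    distance measures are equally plausible.
    """
    query_feats = np.asarray(query_feats, dtype=float)
    gallery_feats = np.asarray(gallery_feats, dtype=float)
    query_ids = np.asarray(query_ids)
    gallery_ids = np.asarray(gallery_ids)

    # L2-normalise so that the dot product equals cosine similarity.
    q = query_feats / np.linalg.norm(query_feats, axis=1, keepdims=True)
    g = gallery_feats / np.linalg.norm(gallery_feats, axis=1, keepdims=True)
    similarity = q @ g.T

    aps = []
    for i in range(len(q)):
        order = np.argsort(-similarity[i])  # most similar gallery item first
        ap = average_precision(gallery_ids[order] == query_ids[i])
        if ap is not None:                  # skip queries with no true match
            aps.append(ap)
    if not aps:
        raise ValueError("no query has a matching identity in the gallery")
    return float(np.mean(aps))


# Toy data (hypothetical): two queries, three gallery embeddings.
toy_map = mean_average_precision(
    query_feats=[[1.0, 0.0], [0.0, 1.0]],
    query_ids=[0, 1],
    gallery_feats=[[0.9, 0.1], [0.1, 0.9], [0.8, 0.2]],
    gallery_ids=[1, 1, 0],
)
print(f"toy mAP: {toy_map:.4f}")
```

In this toy case the first query finds its only true match at rank 2 (AP 0.5) and the second finds its two matches at ranks 1 and 3 (AP 5/6), so the mean is 2/3.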

Supporting Grants

Security Technology Research Innovation Grants Programme (S-TRIG) (Ref: 007CD): £32,727, Principal Investigator

Received from The Catapult Network (S-TRIG), UK, 2020-2021

Received from Northumbria University, UK, 2018-2021
